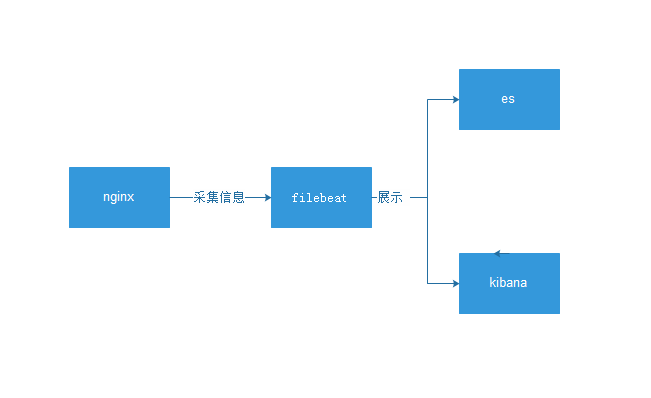

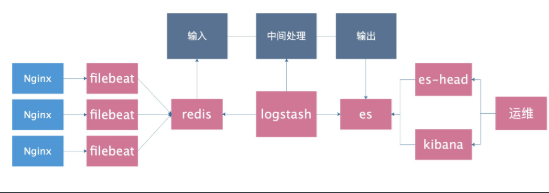


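Introduction to ELK

E: Elasticsearch. Stores the data. Written in Java.

L: Logstash. Collects, filters, forwards, and pattern-matches logs. Heavyweight and slow to start; acts as the intermediary. Written in Java (JRuby).

K: Kibana. Filters, analyzes, and visualizes the data. Written in JavaScript (Node.js).

F: Filebeat. Collects and filters logs. Written in Go.

Basic architecture diagram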

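Traditional log analysis requirements (interview questions)

1. Find the ten IPs that access the site most often.

2. Find the ten most-visited URLs.

3. Find the IP that accesses the www site most often between 10 a.m. and 2 p.m.

4. Compare the access frequency in this time window today with the same window yesterday.

5. Compare this time today with the same time last week.

6. Find how many times a specific page was accessed.

7. Find a problematic IP address and list every page it accessed. Did it also visit in the previous few days? When did it start accessing the site, and when did it leave?

8. Find the ten slowest pages and compute their average response time. Were these pages just as slow yesterday?

9. Find how many times each search engine crawled the site today, which pages it crawled, and what the response times were.

10. Find the IP addresses that pose as search engines.

yum provides host

Install the package that the command above reports.

host <ip> does a reverse lookup on the IP, which shows whether it really belongs to the search engine it claims to be.

11. Tell me the results within 5 minutes.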
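Several of the requirements above reduce to counting one field and sorting the counts. A minimal sketch for requirement 1, assuming the log uses nginx's default format, where the client IP is the first whitespace-separated field. The function name `top_ips` and the sample data are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: print the N most frequent client IPs as "count ip" lines.
# Assumes the client IP is the first whitespace-separated field of each line.
top_ips() {
    local logfile="$1" n="${2:-10}"
    awk '{print $1}' "$logfile" \
        | sort \
        | uniq -c \
        | sort -k1,1nr -k2,2 \
        | head -n "$n" \
        | awk '{print $1, $2}'
}

# Demo on a throwaway sample log (made-up entries).
sample="$(mktemp)"
cat >"$sample" <<'EOF'
10.0.0.1 - - [10/Sep/2019:10:52:08 +0800] "GET / HTTP/1.1" 200 153 "-" "curl/7.29.0" "-"
10.0.0.2 - - [10/Sep/2019:10:52:09 +0800] "GET /a HTTP/1.1" 404 153 "-" "curl/7.29.0" "-"
10.0.0.1 - - [10/Sep/2019:10:52:10 +0800] "GET /b HTTP/1.1" 200 153 "-" "curl/7.29.0" "-"
EOF
top_ips "$sample" 10
rm -f "$sample"
```

This works for a single file on a single host. Comparing time windows, days, or several servers with this approach means writing a new pipeline each time, which is the gap the rest of these notes fill with ELK.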

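Log collection categories

Proxy layer: nginx, haproxy

Web layer: nginx, tomcat, java, php

DB layer: mysql, mongo, redis, es

System layer: messages, secure

Storage layer: nfs, gfs

Deployment

ELK installation and deployment

Prepare a single-node environment

db01 nginx filebeat es kibana es-head

db02 nginx filebeat

1. Prepare the single-node ES environment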

cat >/etc/elasticsearch/elasticsearch.yml <<EOF

node.name: node-1

path.data: /var/lib/elasticsearch

path.logs: /var/log/elasticsearch

network.host: 10.0.0.51,127.0.0.1

http.port: 9200

EOF

systemctl stop elasticsearch

rm -rf /var/lib/elasticsearch/*

rm -rf /var/lib/kibana/*

systemctl start elasticsearch

systemctl start kibana

tail -f /var/log/elasticsearch/elasticsearch.log

netstat -lntup|egrep "5601|9200"

Collecting plain-format nginx logs with Filebeat

ES

kibana

es-head

0. Synchronize the system time

ntpdate time1.aliyun.com

1. Install Nginx

cat >/etc/yum.repos.d/nginx.repo <<EOF

[nginx-stable]

name=nginx stable repo

baseurl=http://nginx.org/packages/centos/\$releasever/\$basearch/

gpgcheck=1

enabled=1

gpgkey=https://nginx.org/keys/nginx_signing.key

module_hotfixes=true

[nginx-mainline]

name=nginx mainline repo

baseurl=http://nginx.org/packages/mainline/centos/\$releasever/\$basearch/

gpgcheck=1

enabled=0

gpgkey=https://nginx.org/keys/nginx_signing.key

module_hotfixes=true

EOF

yum install nginx -y

systemctl start nginx

curl 127.0.0.1

2. Configure Nginx and create a test page

rm -rf /etc/nginx/conf.d/default.conf

cat >/etc/nginx/conf.d/www.conf<<EOF

server {

listen 80;

server_name localhost;

location / {

root /code/www;

index index.html index.htm;

}

}

EOF

mkdir /code/www/ -p

echo "db01-www" > /code/www/index.html

nginx -t

systemctl restart nginx

curl 127.0.0.1

tail -f /var/log/nginx/access.log

2. Install Filebeat

rpm -ivh filebeat-6.6.0-x86_64.rpm

rpm -qc filebeat

3. Configure Filebeat

[root@db-01 /data/soft]# cat /etc/filebeat/filebeat.yml
filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/log/nginx/access.log
output.elasticsearch:
  hosts: ["10.0.0.51:9200"]

4. Start Filebeat and check its log

systemctl start filebeat

tail -f /var/log/filebeat/filebeat

5. View the log results

View in es-head

curl -s 127.0.0.1:9200/_cat/indices|awk '{print $3}'

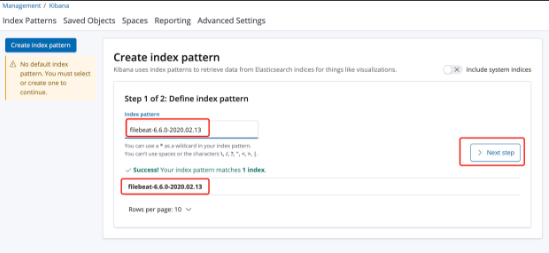

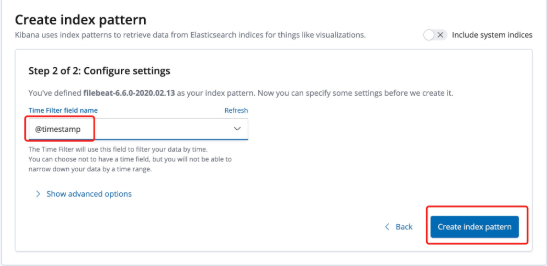

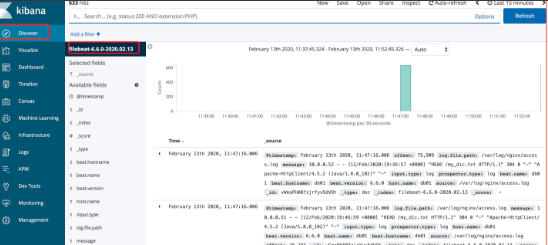

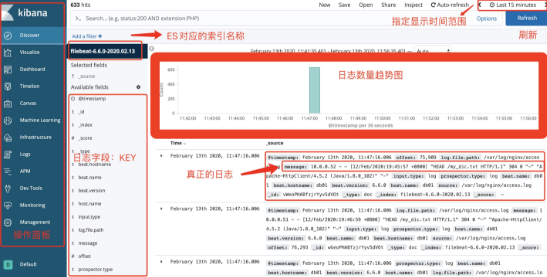

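6. Add the index pattern in Kibana

Management --> Kibana --> Index Patterns --> filebeat-6.6.0-2020.02.13

Kibana interface

Overview of the Kibana areas

Collecting JSON-format nginx logs with Filebeat

1. Shortcomings of plain nginx logs:

- The whole log line sits in a single value, so fields cannot be split out, displayed, or searched individually.

- The index name carries no meaning.

2. The ideal result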

{

$remote_addr : 192.168.12.254

- : -

$remote_user : -

[$time_local]: [10/Sep/2019:10:52:08 +0800]

$request: GET /jhdgsjfgjhshj HTTP/1.0

$status : 404

$body_bytes_sent : 153

$http_referer : -

$http_user_agent :ApacheBench/2.3

$http_x_forwarded_for:-

}

3. Goal

Convert the nginx log to JSON format.

4. Modify the nginx configuration file so logs are written as JSON

vim /etc/nginx/nginx.conf

log_format json '{ "time_local": "$time_local", '

'"remote_addr": "$remote_addr", '

'"referer": "$http_referer", '

'"request": "$request", '

'"status": $status, '

'"bytes": $body_bytes_sent, '

'"agent": "$http_user_agent", '

'"x_forwarded": "$http_x_forwarded_for", '

'"up_addr": "$upstream_addr",'

'"up_host": "$upstream_http_host",'

'"upstream_time": "$upstream_response_time",'

'"request_time": "$request_time"'

' }';

access_log /var/log/nginx/access.log json;

Clear the old log

> /var/log/nginx/access.log

Check and restart nginx

nginx -t

systemctl restart nginx

5. Modify the Filebeat configuration file

cat >/etc/filebeat/filebeat.yml<<EOF
filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/log/nginx/access.log
  json.keys_under_root: true
  json.overwrite_keys: true
output.elasticsearch:
  hosts: ["10.0.0.51:9200"]
EOF

6. Delete the old ES index

es-head >> filebeat-6.6.0-2019.11.15 >> Actions >> Delete

7. Restart Filebeat

systemctl restart filebeat

Run curl 127.0.0.1 to generate new log entries.

8. Delete the old index pattern in Kibana

Customizing the ES index name in Filebeat

1. The desired index name

filebeat-6.6.0-2020.02.13

nginx-6.6.0-2019.11.15

2. Filebeat configuration

cat >/etc/filebeat/filebeat.yml<<EOF
filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/log/nginx/access.log
  json.keys_under_root: true
  json.overwrite_keys: true
output.elasticsearch:
  hosts: ["10.0.0.51:9200"]
  index: "nginx-%{[beat.version]}-%{+yyyy.MM}"
setup.template.name: "nginx"
setup.template.pattern: "nginx-*"
setup.template.enabled: false
setup.template.overwrite: true
EOF

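3. Restart Filebeat

systemctl restart filebeat

4. Generate new log entries and check

curl 127.0.0.1

Index name shown in es-head

nginx-6.6.0-2020.02

5. Add the new index pattern in Kibana

Splitting indices by service type in Filebeat

1. The desired result:

nginx-access-6.6.0-2020.02

nginx-error-6.6.0-2020.02

2. Filebeat configuration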

Method 1: route on the source file path

cat >/etc/filebeat/filebeat.yml <<EOF
filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/log/nginx/access.log
  json.keys_under_root: true
  json.overwrite_keys: true
- type: log
  enabled: true
  paths:
    - /var/log/nginx/error.log
output.elasticsearch:
  hosts: ["10.0.0.51:9200"]
  indices:
    - index: "nginx-access-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        source: "/var/log/nginx/access.log"
    - index: "nginx-error-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        source: "/var/log/nginx/error.log"
setup.template.name: "nginx"
setup.template.pattern: "nginx-*"
setup.template.enabled: false
setup.template.overwrite: true
EOF

Method 2: route on tags set per input

cat >/etc/filebeat/filebeat.yml <<EOF
filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/log/nginx/access.log
  json.keys_under_root: true
  json.overwrite_keys: true
  tags: ["access"]
- type: log
  enabled: true
  paths:
    - /var/log/nginx/error.log
  tags: ["error"]
output.elasticsearch:
  hosts: ["10.0.0.51:9200"]
  indices:
    - index: "nginx-access-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        tags: "access"
    - index: "nginx-error-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        tags: "error"
setup.template.name: "nginx"
setup.template.pattern: "nginx-*"
setup.template.enabled: false
setup.template.overwrite: true
EOF

3. Restart Filebeat

systemctl restart filebeat

4. Generate test data

curl 127.0.0.1/zhangya

5. Check that the corresponding indices were created

nginx-access-6.6.0-2020.02

nginx-error-6.6.0-2020.02

Collecting nginx logs from multiple hosts

1. Install nginx

yum install nginx

2. Copy the configuration files from db01

scp 10.0.0.51:/etc/nginx/nginx.conf /etc/nginx/nginx.conf

scp 10.0.0.51:/etc/nginx/conf.d/www.conf /etc/nginx/conf.d/

3. Create a test page

mkdir /code/www/ -p

echo "db02-www" > /code/www/index.html

4. Restart nginx

>/var/log/nginx/access.log

>/var/log/nginx/error.log

nginx -t

systemctl restart nginx

5. Generate test requests

curl 127.0.0.1/22222222222222

6. Install Filebeat

rpm -ivh filebeat...

7. Copy the Filebeat configuration file

scp 10.0.0.51:/etc/filebeat/filebeat.yml /etc/filebeat/

8. Start Filebeat

systemctl restart filebeat

How Filebeat works

It behaves like tail -f.

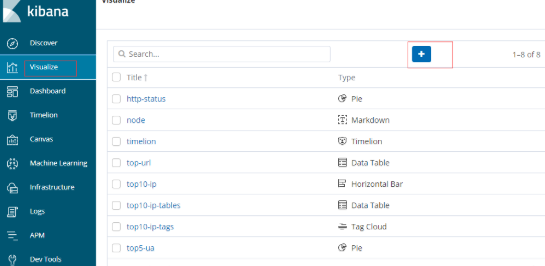

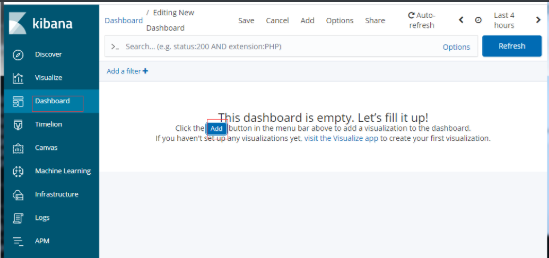

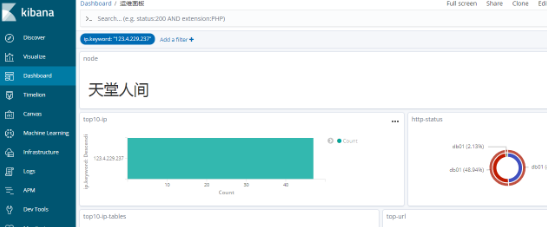
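The comparison with tail -f can be made concrete with a small sketch. It assumes a state file that stores the byte offset already shipped (Filebeat keeps comparable state in its registry file). `ship_new_lines` and the file names are hypothetical, and real Filebeat also tracks rotated files and waits for the output to acknowledge delivery, which this sketch does not.

```shell
#!/usr/bin/env bash
# Sketch of offset-based tailing: print only the bytes appended since the last call.
# The state file plays the role of a registry (hypothetical name and format).
ship_new_lines() {
    local logfile="$1" state="$2"
    local offset=0 size
    [ -f "$state" ] && offset="$(cat "$state")"
    size="$(wc -c <"$logfile" | tr -d ' ')"
    # If the file shrank (truncated or rotated), start again from the beginning.
    [ "$size" -lt "$offset" ] && offset=0
    # tail -c +K starts at byte K (1-based), so this skips the first $offset bytes.
    tail -c "+$((offset + 1))" "$logfile"
    # Remember how far we got for the next call.
    echo "$size" >"$state"
}

# Demo: the second call prints only the newly appended line.
workdir="$(mktemp -d)"
echo "line 1" >>"$workdir/app.log"
ship_new_lines "$workdir/app.log" "$workdir/offset"
echo "line 2" >>"$workdir/app.log"
ship_new_lines "$workdir/app.log" "$workdir/offset"
rm -rf "$workdir"
```

Because the offset survives restarts, stopping and starting the shipper does not resend lines that were already delivered.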

It reads files in real time and records the position it last shipped.

Custom Kibana visualizations

Combined dashboards

Filtered views

Collecting Tomcat JSON logs with Filebeat

1. Install Tomcat

yum install tomcat tomcat-webapps tomcat-admin-webapps tomcat-docs-webapp tomcat-javadoc -y

systemctl start tomcat

tail -f /var/log/tomcat/localhost_access_log.2020-02-14.txt

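2. Modify the Tomcat configuration to write logs in JSON format

cp /etc/tomcat/server.xml /opt/

vim /etc/tomcat/server.xml

pattern="{&quot;clientip&quot;:&quot;%h&quot;,&quot;ClientUser&quot;:&quot;%l&quot;,&quot;authenticated&quot;:&quot;%u&quot;,&quot;AccessTime&quot;:&quot;%t&quot;,&quot;method&quot;:&quot;%r&quot;,&quot;status&quot;:&quot;%s&quot;,&quot;SendBytes&quot;:&quot;%b&quot;,&quot;Query?string&quot;:&quot;%q&quot;,&quot;partner&quot;:&quot;%{Referer}i&quot;,&quot;AgentVersion&quot;:&quot;%{User-Agent}i&quot;}"/>

3. Clear the log and restart

> /var/log/tomcat/localhost_access_log.2020-02-14.txt

systemctl restart tomcat

4. Access Tomcat and check that the log is in JSON format

curl 127.0.0.1:8080

tail -f /var/log/tomcat/localhost_access_log.2020-02-14.txt

5. Create the Filebeat configuration file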

cat >/etc/filebeat/filebeat.yml <<EOF
filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/log/nginx/access.log
  json.keys_under_root: true
  json.overwrite_keys: true
  tags: ["access"]
- type: log
  enabled: true
  paths:
    - /var/log/nginx/error.log
  tags: ["error"]
- type: log
  enabled: true
  paths:
    - /var/log/tomcat/localhost_access_log.*.txt
  json.keys_under_root: true
  json.overwrite_keys: true
  tags: ["tomcat"]
output.elasticsearch:
  hosts: ["10.0.0.51:9200"]
  indices:
    - index: "nginx-access-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        tags: "access"
    - index: "nginx-error-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        tags: "error"
    - index: "tomcat-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        tags: "tomcat"
setup.template.name: "nginx"
setup.template.pattern: "nginx-*"
setup.template.enabled: false
setup.template.overwrite: true
EOF

6. Restart Filebeat and check

systemctl restart filebeat

Collecting Java logs

https://www.elastic.co/guide/en/beats/filebeat/6.6/multiline-examples.html

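Characteristics of Java logs:

1. A single error produces a very large amount of output.

2. However long that output is, it is still one event and must not be split up.

A sample Java error log: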

[2019-09-10T16:15:41,630][ERROR][o.e.b.Bootstrap ] [CcJTI28] Exception

java.lang.IllegalArgumentException: unknown setting [nnode.name] did you mean [node.name]?

at org.elasticsearch.common.settings.AbstractScopedSettings.validate(AbstractScopedSettings.java:482) ~[elasticsearch-6.6.0.jar:6.6.0]

at org.elasticsearch.common.settings.AbstractScopedSettings.validate(AbstractScopedSettings.java:427) ~[elasticsearch-6.6.0.jar:6.6.0]

at org.elasticsearch.common.settings.AbstractScopedSettings.validate(AbstractScopedSettings.java:398) ~[elasticsearch-6.6.0.jar:6.6.0]

at org.elasticsearch.common.settings.AbstractScopedSettings.validate(AbstractScopedSettings.java:369) ~[elasticsearch-6.6.0.jar:6.6.0]

at org.elasticsearch.common.settings.SettingsModule.<init>(SettingsModule.java:148) ~[elasticsearch-6.6.0.jar:6.6.0]

[2019-09-10T16:18:16,742][INFO ][o.e.c.m.MetaDataIndexTemplateService] [node-1] adding template [kibana_index_template:.kibana] for index patterns [.kibana]

[2019-09-10T16:18:17,981][INFO ][o.e.c.m.MetaDataIndexTemplateService] [node-1] adding template [kibana_index_template:.kibana] for index patterns [.kibana]

[2019-09-10T16:18:33,417][INFO ][o.e.c.m.MetaDataIndexTemplateService] [node-1] adding template [kibana_index_template:.kibana] for index patterns [.kibana]

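Matching approach:

1. Characteristics of Java error logs

Normal log lines start with [date].

An error spans many lines, but its continuation lines do not start with [.

2. Match a line that starts with [ and treat everything up to the next line that starts with [ as one event, then send it to ES as a single document.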
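The matching idea above can be tried outside Filebeat with a few lines of awk. This is only a sketch of the grouping rule, not Filebeat's implementation. `merge_multiline` is a hypothetical name, the sample input is shortened, and continuation lines are joined with " | " so that each event prints on one line (Filebeat itself keeps the original newlines inside the event).

```shell
#!/usr/bin/env bash
# Sketch: a line starting with "[" opens a new event; every other line is
# appended to the event that is currently open.
merge_multiline() {
    awk '
        /^\[/ {
            if (have) print event      # flush the previous event
            event = $0; have = 1
            next
        }
        {
            if (have) event = event " | " $0
            else { event = $0; have = 1 }
        }
        END { if (have) print event }  # flush the last event
    '
}

# Demo: four input lines become two events.
merge_multiline <<'EOF'
[2019-09-10T16:15:41,630][ERROR] Exception
java.lang.IllegalArgumentException: unknown setting [nnode.name]
at org.elasticsearch.common.settings.AbstractScopedSettings.validate(AbstractScopedSettings.java:482)
[2019-09-10T16:18:16,742][INFO ] adding template
EOF
```

In Filebeat terms this corresponds to a pattern of `^\[` with `negate: true` and `match: after`: lines that do not match the pattern are attached after the last line that did.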

Collecting Java logs with Filebeat multiline matching

1. Filebeat configuration file

cat >/etc/filebeat/filebeat.yml<<EOF
filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/log/elasticsearch/elasticsearch.log
  multiline.pattern: '^\['
  multiline.negate: true
  multiline.match: after
output.elasticsearch:
  hosts: ["10.0.0.51:9200"]
  index: "es-%{[beat.version]}-%{+yyyy.MM}"
setup.template.name: "es"
setup.template.pattern: "es-*"
setup.template.enabled: false
setup.template.overwrite: true
EOF

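2. Restart Filebeat

systemctl restart filebeat

3. Check whether the Java error log was merged into a single event

Add the index in Kibana, then search for the keyword at org

Filebeat modules

Purpose:

A module parses the plain-format logs of a specific service into structured fields, as if they had been written as JSON.

Collecting nginx logs with a Filebeat module

1. Clear the log and switch nginx back to the plain format

# Clear the log

>/var/log/nginx/access.log

# Edit the configuration file

vi /etc/nginx/nginx.conf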

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

access_log /var/log/nginx/access.log main;

# Check and restart

nginx -t

systemctl restart nginx

2. Access nginx and check that the log is in plain format

curl 127.0.0.1

tail -f /var/log/nginx/access.log

3. Enable module support in the Filebeat configuration file

vim /etc/filebeat/filebeat.yml
filebeat.config.modules:
  path: ${path.config}/modules.d/*.yml
  reload.enabled: true
  reload.period: 10s
output.elasticsearch:
  hosts: ["10.0.0.51:9200"]
  indices:
    - index: "nginx-access-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        event.dataset: "nginx.access"
    - index: "nginx-error-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        event.dataset: "nginx.error"
setup.template.name: "nginx"
setup.template.pattern: "nginx-*"
setup.template.enabled: false
setup.template.overwrite: true

4. Enable the Filebeat nginx module

filebeat modules enable nginx

filebeat modules list

5. Configure the Filebeat nginx module

cat >/etc/filebeat/modules.d/nginx.yml <<EOF
- module: nginx
  access:
    enabled: true
    var.paths: ["/var/log/nginx/access.log"]
  error:
    enabled: true
    var.paths: ["/var/log/nginx/error.log"]
EOF

6. Install the ES plugins that the nginx module requires, then restart ES

cd /usr/share/elasticsearch/

./bin/elasticsearch-plugin install file:///root/ingest-geoip-6.6.0.zip

./bin/elasticsearch-plugin install file:///root/ingest-user-agent-6.6.0.zip

systemctl restart elasticsearch

7. Restart Filebeat

systemctl restart filebeat

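Collecting MySQL logs with a Filebeat module

1. Configure the MySQL error log and slow log paths

Edit my.cnf

log-error=<error log path>

slow_query_log=ON

slow_query_log_file=<slow log path>

long_query_time=3

2. Restart MySQL and generate slow-log entries

systemctl restart mysql

Statement to generate a slow query

select sleep(2) user,host from mysql.user ;

3. Confirm that the slow log and the error log are actually being written

4. Enable the Filebeat mysql module

filebeat modules enable mysql

5. Configure the mysql module

- module: mysql
  error:
    enabled: true
    var.paths: ["<error log path>"]
  slowlog:
    enabled: true
    var.paths: ["<slow log path>"]

6. Configure Filebeat to route by log type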

filebeat.config.modules:
  path: ${path.config}/modules.d/*.yml
  reload.enabled: true
  reload.period: 10s
output.elasticsearch:
  hosts: ["10.0.0.51:9200"]
  indices:
    - index: "nginx_access-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        fileset.module: "nginx"
        fileset.name: "access"
    - index: "nginx_error-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        fileset.module: "nginx"
        fileset.name: "error"
    - index: "mysql_slowlog-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        fileset.module: "mysql"
        fileset.name: "slowlog"
    - index: "mysql_error-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        fileset.module: "mysql"
        fileset.name: "error"
setup.template.name: "nginx"
setup.template.pattern: "nginx_*"
setup.template.enabled: false
setup.template.overwrite: true

7. Restart Filebeat

systemctl restart filebeat

Collecting Docker logs with the docker input type

docker

1. Install Docker

yum install -y yum-utils device-mapper-persistent-data lvm2

wget -O /etc/yum.repos.d/docker-ce.repo https://download.docker.com/linux/centos/docker-ce.repo

sed -i 's+download.docker.com+mirrors.tuna.tsinghua.edu.cn/docker-ce+' /etc/yum.repos.d/docker-ce.repo

yum makecache fast

yum install docker-ce -y

mkdir -p /etc/docker

tee /etc/docker/daemon.json <<-'EOF'

{

"registry-mirrors": ["https://ig2l319y.mirror.aliyuncs.com"]

}

EOF

systemctl daemon-reload

systemctl restart docker

2. Start two Nginx containers and test access

docker run -d -p 80:80 nginx

docker run -d -p 8080:80 nginx

curl 10.0.0.52

curl 10.0.0.52:8080

3. Configure Filebeat

[root@db02 ~]# cat /etc/filebeat/filebeat.yml
filebeat.inputs:
- type: docker
  containers.ids:
    - '*'
output.elasticsearch:
  hosts: ["10.0.0.51:9200"]
  index: "docker-%{[beat.version]}-%{+yyyy.MM}"
setup.template.name: "docker"
setup.template.pattern: "docker-*"
setup.template.enabled: false
setup.template.overwrite: true

4. Restart Filebeat

systemctl restart filebeat

5. Generate test data by accessing the containers

curl 10.0.0.52/1111111111

curl 10.0.0.52:8080/2222222222

Starting Docker containers with docker-compose

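Scenario:

Container 1: nginx

Container 2: mysql

Collecting Docker logs with Filebeat: the leave-work-early edition

1. Assumed scenario

nginx container on port 80

mysql container on port 8080

2. Desired index names

docker-nginx-6.6.0-2020.02

docker-mysql-6.6.0-2020.02

3. Desired log record format

nginx container log: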

{

"log": "xxxxxx",

"stream": "stdout",

"time": "xxxx",

"service": "nginx"

}

mysql container log:

{

"log": "xxxxxx",

"stream": "stdout",

"time": "xxxx",

"service": "mysql"

}

4. docker-compose configuration

yum install docker-compose -y

cat >docker-compose.yml<<EOF
version: '3'
services:
  nginx:
    image: nginx:latest
    labels:
      service: nginx
    logging:
      options:
        labels: "service"
    ports:
      - "80:80"
  db:
    image: nginx:latest
    labels:
      service: db
    logging:
      options:
        labels: "service"
    ports:
      - "8080:80"
EOF

5. Remove the old containers

docker stop $(docker ps -q)

docker rm $(docker ps -qa)

6. Start the containers

docker-compose up -d

7. Configure Filebeat

cat >/etc/filebeat/filebeat.yml <<EOF
filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/lib/docker/containers/*/*-json.log
  json.keys_under_root: true
  json.overwrite_keys: true
output.elasticsearch:
  hosts: ["10.0.0.51:9200"]
  indices:
    - index: "docker-nginx-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        attrs.service: "nginx"
    - index: "docker-db-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        attrs.service: "db"
setup.template.name: "docker"
setup.template.pattern: "docker-*"
setup.template.enabled: false
setup.template.overwrite: true
EOF

8. Restart Filebeat

systemctl restart filebeat

9. Generate access logs

curl 127.0.0.1/nginxxxxxxxxxxx

curl 127.0.0.1:8080/dbbbbbbbbb

Splitting by service and log type

1. What is still lacking

Normal logs and error logs end up in the same index.

2. Desired index names

docker-nginx-access-6.6.0-2020.02

docker-nginx-error-6.6.0-2020.02

docker-db-access-6.6.0-2020.02

docker-db-error-6.6.0-2020.02

3. Filebeat configuration file

cat >/etc/filebeat/filebeat.yml <<EOF
filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/lib/docker/containers/*/*-json.log
  json.keys_under_root: true
  json.overwrite_keys: true
output.elasticsearch:
  hosts: ["10.0.0.51:9200"]
  indices:
    - index: "docker-nginx-access-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        attrs.service: "nginx"
        stream: "stdout"
    - index: "docker-nginx-error-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        attrs.service: "nginx"
        stream: "stderr"
    - index: "docker-db-access-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        attrs.service: "db"
        stream: "stdout"
    - index: "docker-db-error-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        attrs.service: "db"
        stream: "stderr"
setup.template.name: "docker"
setup.template.pattern: "docker-*"
setup.template.enabled: false
setup.template.overwrite: true
EOF

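4. Restart Filebeat

systemctl restart filebeat

5. Generate test data

curl 127.0.0.1/nginxxxxxxxxxxx

curl 127.0.0.1:8080/dbbbbbbbbb

Collecting Docker logs: the pay-raise edition

1. Requirements analysis

Logs must be in JSON format and land in the following indices: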

docker-nginx-access-6.6.0-2020.02

docker-db-access-6.6.0-2020.02

docker-db-error-6.6.0-2020.02

docker-nginx-error-6.6.0-2020.02

2. Stop and remove the previous containers

docker stop $(docker ps -qa)

docker rm $(docker ps -qa)

3. Create new containers

docker run -d -p 80:80 -v /opt/nginx:/var/log/nginx/ nginx

docker run -d -p 8080:80 -v /opt/mysql:/var/log/nginx/ nginx

4. Prepare the JSON-format nginx configuration file

scp 10.0.0.51:/etc/nginx/nginx.conf /root/

[root@db02 ~]# grep "access_log" nginx.conf

access_log /var/log/nginx/access.log json;

5. Copy it into the containers and restart them

docker cp nginx.conf <nginx container ID>:/etc/nginx/

docker cp nginx.conf <mysql container ID>:/etc/nginx/

docker stop $(docker ps -qa)

docker start <nginx container ID>

docker start <mysql container ID>

6. Delete the indices that already exist in ES

7. Write the Filebeat configuration file

cat >/etc/filebeat/filebeat.yml <<EOF
filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /opt/nginx/access.log
  json.keys_under_root: true
  json.overwrite_keys: true
  tags: ["nginx_access"]
- type: log
  enabled: true
  paths:
    - /opt/nginx/error.log
  tags: ["nginx_err"]
- type: log
  enabled: true
  paths:
    - /opt/mysql/access.log
  json.keys_under_root: true
  json.overwrite_keys: true
  tags: ["db_access"]
- type: log
  enabled: true
  paths:
    - /opt/mysql/error.log
  tags: ["db_err"]
output.elasticsearch:
  hosts: ["10.0.0.51:9200"]
  indices:
    - index: "docker-nginx-access-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        tags: "nginx_access"
    - index: "docker-nginx-error-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        tags: "nginx_err"
    - index: "docker-db-access-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        tags: "db_access"
    - index: "docker-db-error-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        tags: "db_err"
setup.template.name: "docker"
setup.template.pattern: "docker-*"
setup.template.enabled: false
setup.template.overwrite: true
EOF

8. Restart Filebeat

systemctl restart filebeat

9. Access the containers and test

curl 127.0.0.1/oldboy

curl 127.0.0.1:8080/oldboy

cat /opt/nginx/access.log

cat /opt/mysql/access.log

Check the result in es-head

Second approach

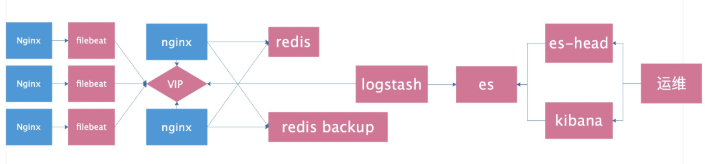

Using a cache service to relieve pressure on ES

Architecture diagram

Introducing Redis as a cache

1. Install Redis

yum install redis

sed -i 's#^bind 127.0.0.1#bind 127.0.0.1 10.0.0.51#' /etc/redis.conf

systemctl start redis

netstat -lntup|grep redis

redis-cli -h 10.0.0.51

2. Stop the Docker containers

docker stop $(docker ps -q)

3. Stop Filebeat

systemctl stop filebeat

4. Delete the old ES indices

5. Confirm that the nginx log is in JSON format

grep "access_log" nginx.conf

6. Modify the Filebeat configuration file

cat >/etc/filebeat/filebeat.yml <<EOF
filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/log/nginx/access.log
  json.keys_under_root: true
  json.overwrite_keys: true
  tags: ["access"]
- type: log
  enabled: true
  paths:
    - /var/log/nginx/error.log
  tags: ["error"]
output.redis:
  hosts: ["10.0.0.51"]
  keys:
    - key: "nginx_access"
      when.contains:
        tags: "access"
    - key: "nginx_error"
      when.contains:
        tags: "error"
setup.template.name: "nginx"
setup.template.pattern: "nginx_*"
setup.template.enabled: false
setup.template.overwrite: true
EOF

7. Restart Filebeat and nginx

systemctl restart nginx

systemctl restart filebeat

8. Generate test data

curl 127.0.0.1/haha

9. Check

redis-cli -h 10.0.0.51

keys *

TYPE nginx_access

LLEN nginx_access

LRANGE nginx_access 0 -1

Confirm that the entries are in JSON format.

10. Install Logstash

rpm -ivh jdk-8u102-linux-x64.rpm

rpm -ivh logstash-6.6.0.rpm

11. Configure Logstash

cat >/etc/logstash/conf.d/redis.conf<<EOF

input {

redis {

host => "10.0.0.51"

port => "6379"

db => "0"

key => "nginx_access"

data_type => "list"

}

redis {

host => "10.0.0.51"

port => "6379"

db => "0"

key => "nginx_error"

data_type => "list"

}

}

filter {

mutate {

convert => ["upstream_time", "float"]

convert => ["request_time", "float"]

}

}

output {

stdout {}

if "access" in [tags] {

elasticsearch {

hosts => "http://10.0.0.51:9200"

manage_template => false

index => "nginx_access-%{+yyyy.MM}"

}

}

if "error" in [tags] {

elasticsearch {

hosts => "http://10.0.0.51:9200"

manage_template => false

index => "nginx_error-%{+yyyy.MM}"

}

}

}

EOF

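12. Start in the foreground to test

/usr/share/logstash/bin/logstash -f /etc/logstash/conf.d/redis.conf

13. Check

Is the Logstash output parsed as JSON?

Have the indices appeared in es-head?

Is the list data in Redis shrinking?

14. Run Logstash in the background

ctrl+c

systemctl start logstash

Logstash is slow to start. Listen to the fans: when they spin up, Logstash is starting.

A more complete Filebeat + Redis design

1. Constraints

- Filebeat cannot send to Redis Sentinel or Redis Cluster.

- Logstash cannot read from Redis Sentinel or Redis Cluster either.

2. Install and configure Redis

yum install redis -y

sed -i 's#^bind 127.0.0.1#bind 127.0.0.1 10.0.0.51#' /etc/redis.conf

systemctl start redis

3. Install and configure nginx

Configure the official repository

yum install nginx -y

Put the following after the final } of nginx.conf, not inside conf.d.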

stream {

upstream redis {

server 10.0.0.51:6379 max_fails=2 fail_timeout=10s;

server 10.0.0.52:6379 max_fails=2 fail_timeout=10s backup;

}

server {

listen 6380;

proxy_connect_timeout 1s;

proxy_timeout 3s;

proxy_pass redis;

}

}

nginx -t

systemctl start nginx

4. Install and configure keepalived

yum install keepalived -y

db01 configuration

global_defs {

router_id db01

}

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 50

priority 150

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

10.0.0.100

}

}

db02 configuration

global_defs {

router_id db02

}

vrrp_instance VI_1 {

state BACKUP

interface eth0

virtual_router_id 50

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

10.0.0.100

}

}

systemctl start keepalived

ip a

5. Test whether connections are proxied to Redis

redis-cli -h 10.0.0.100 -p 6380

Stop Redis on db01 and test whether Redis is still reachable.

6. Configure Filebeat

cat >/etc/filebeat/filebeat.yml <<EOF
filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/log/nginx/access.log
  json.keys_under_root: true
  json.overwrite_keys: true
  tags: ["access"]
- type: log
  enabled: true
  paths:
    - /var/log/nginx/error.log
  tags: ["error"]
output.redis:
  hosts: ["10.0.0.100:6380"]
  keys:
    - key: "nginx_access"
      when.contains:
        tags: "access"
    - key: "nginx_error"
      when.contains:
        tags: "error"
setup.template.name: "nginx"
setup.template.pattern: "nginx_*"
setup.template.enabled: false
setup.template.overwrite: true
EOF

7. Test whether Filebeat can ship to Redis

curl 10.0.0.51/haha

redis-cli -h 10.0.0.51 # should have data

redis-cli -h 10.0.0.52 # should have no data

redis-cli -h 10.0.0.100 -p 6380 # should have data

8. Configure Logstash

cat >/etc/logstash/conf.d/redis.conf<<EOF

input {

redis {

host => "10.0.0.100"

port => "6380"

db => "0"

key => "nginx_access"

data_type => "list"

}

redis {

host => "10.0.0.100"

port => "6380"

db => "0"

key => "nginx_error"

data_type => "list"

}

}

filter {

mutate {

convert => ["upstream_time", "float"]

convert => ["request_time", "float"]

}

}

output {

stdout {}

if "access" in [tags] {

elasticsearch {

hosts => "http://10.0.0.51:9200"

manage_template => false

index => "nginx_access-%{+yyyy.MM}"

}

}

if "error" in [tags] {

elasticsearch {

hosts => "http://10.0.0.51:9200"

manage_template => false

index => "nginx_error-%{+yyyy.MM}"

}

}

}

EOF

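9. Start and test

/usr/share/logstash/bin/logstash -f /etc/logstash/conf.d/redis.conf

10. Final test

ab -n 10000 -c 100 10.0.0.100/

Check in es-head that the index contains 10000 entries.

Stop Redis on db01, access the site again, and check that Logstash still works.

Restore Redis on db01 and test again.

Optimizing the Filebeat + Redis design

1. Before the optimization, adding one log path means changes in 4 places:

- Filebeat: 2 places

- Logstash: 2 places

2. After the optimization, only 2 places need to change:

- Filebeat: 1 place

- Logstash: 1 place

3. Filebeat configuration file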

filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/log/nginx/access.log
  json.keys_under_root: true
  json.overwrite_keys: true
  tags: ["access"]
- type: log
  enabled: true
  paths:
    - /var/log/nginx/error.log
  tags: ["error"]
output.redis:
  hosts: ["10.0.0.100:6380"]
  key: "nginx_log"
setup.template.name: "nginx"
setup.template.pattern: "nginx_*"
setup.template.enabled: false
setup.template.overwrite: true

4. Optimized Logstash configuration

input {

redis {

host => "10.0.0.100"

port => "6380"

db => "0"

key => "nginx_log"

data_type => "list"

}

}

filter {

mutate {

convert => ["upstream_time", "float"]

convert => ["request_time", "float"]

}

}

output {

stdout {}

if "access" in [tags] {

elasticsearch {

hosts => "http://10.0.0.51:9200"

manage_template => false

index => "nginx_access-%{+yyyy.MM}"

}

}

if "error" in [tags] {

elasticsearch {

hosts => "http://10.0.0.51:9200"

manage_template => false

index => "nginx_error-%{+yyyy.MM}"

}

}

}
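With a single Redis list, the only routing decision left is the one Logstash makes from each event's tags. A rough sketch of that decision in shell, assuming events are single-line JSON with a compact `"tags":[...]` array. `route_index` is a hypothetical helper, and the string matching is deliberately crude compared with a real JSON parser.

```shell
#!/usr/bin/env bash
# Sketch: choose the target index from an event's tags, mirroring the
# `if "access" in [tags]` / `if "error" in [tags]` branches of the Logstash output.
route_index() {
    local event="$1" month="$2" tags
    # Keep only the contents of the tags array so other fields cannot match.
    tags="$(printf '%s\n' "$event" | sed -n 's/.*"tags":\[\([^]]*\)].*/\1/p')"
    case "$tags" in
        *'"access"'*) echo "nginx_access-$month" ;;
        *'"error"'*)  echo "nginx_error-$month" ;;
        *)            echo "unrouted" ;;
    esac
}

# Demo with two made-up events.
route_index '{"tags":["access"],"status":200}' "2020.02"
route_index '{"tags":["error"],"message":"open() failed"}' "2020.02"
```

Adding a new log type then means one new tagged input in Filebeat and one new branch in the routing step, which is the "2 places" count given above.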

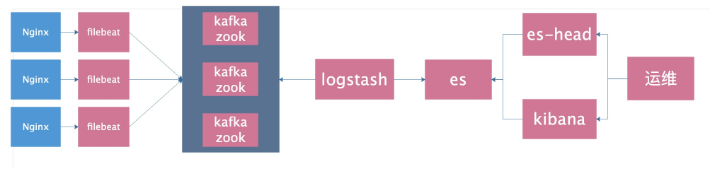

Using Kafka as the cache

1. Configure hosts

10.0.0.51 kafka51

10.0.0.52 kafka52

10.0.0.53 kafka53

2. Install and configure ZooKeeper

cd /data/soft/

tar zxf zookeeper-3.4.11.tar.gz -C /opt/

ln -s /opt/zookeeper-3.4.11/ /opt/zookeeper

mkdir -p /data/zookeeper

cp /opt/zookeeper/conf/zoo_sample.cfg /opt/zookeeper/conf/zoo.cfg

cat >/opt/zookeeper/conf/zoo.cfg<<EOF

tickTime=2000

initLimit=10

syncLimit=5

dataDir=/data/zookeeper

clientPort=2181

server.1=10.0.0.51:2888:3888

server.2=10.0.0.52:2888:3888

server.3=10.0.0.53:2888:3888

EOF

Note: the ID is different on each machine.

echo "1" > /data/zookeeper/myid

cat /data/zookeeper/myid

3. Start ZooKeeper

Start it on every node.

/opt/zookeeper/bin/zkServer.sh start

4. Check on every node

/opt/zookeeper/bin/zkServer.sh status

5. Test ZooKeeper

Run this on one node to create a znode.

/opt/zookeeper/bin/zkCli.sh -server 10.0.0.51:2181

create /test "hello"

Check on another node that the znode can be read.

/opt/zookeeper/bin/zkCli.sh -server 10.0.0.52:2181

get /test

Check the process

ps -aux | grep 'zookeeper'

6. Install and deploy Kafka

On db01

cd /data/soft/

tar zxf kafka_2.11-1.0.0.tgz -C /opt/

ln -s /opt/kafka_2.11-1.0.0/ /opt/kafka

mkdir /opt/kafka/logs

cat >/opt/kafka/config/server.properties<<EOF

broker.id=1

listeners=PLAINTEXT://10.0.0.51:9092

num.network.threads=3

num.io.threads=8

socket.send.buffer.bytes=102400

socket.receive.buffer.bytes=102400

socket.request.max.bytes=104857600

log.dirs=/opt/kafka/logs

num.partitions=1

num.recovery.threads.per.data.dir=1

offsets.topic.replication.factor=1

transaction.state.log.replication.factor=1

transaction.state.log.min.isr=1

log.retention.hours=24

log.segment.bytes=1073741824

log.retention.check.interval.ms=300000

zookeeper.connect=10.0.0.51:2181,10.0.0.52:2181,10.0.0.53:2181

zookeeper.connection.timeout.ms=6000

group.initial.rebalance.delay.ms=0

EOF

On db02

cd /data/soft/

tar zxf kafka_2.11-1.0.0.tgz -C /opt/

ln -s /opt/kafka_2.11-1.0.0/ /opt/kafka

mkdir /opt/kafka/logs

cat >/opt/kafka/config/server.properties<<EOF

broker.id=2

listeners=PLAINTEXT://10.0.0.52:9092

num.network.threads=3

num.io.threads=8

socket.send.buffer.bytes=102400

socket.receive.buffer.bytes=102400

socket.request.max.bytes=104857600

log.dirs=/opt/kafka/logs

num.partitions=1

num.recovery.threads.per.data.dir=1

offsets.topic.replication.factor=1

transaction.state.log.replication.factor=1

transaction.state.log.min.isr=1

log.retention.hours=24

log.segment.bytes=1073741824

log.retention.check.interval.ms=300000

zookeeper.connect=10.0.0.51:2181,10.0.0.52:2181,10.0.0.53:2181

zookeeper.connection.timeout.ms=6000

group.initial.rebalance.delay.ms=0

EOF

On db03

cd /data/soft/

tar zxf kafka_2.11-1.0.0.tgz -C /opt/

ln -s /opt/kafka_2.11-1.0.0/ /opt/kafka

mkdir /opt/kafka/logs

cat >/opt/kafka/config/server.properties<<EOF

broker.id=3

listeners=PLAINTEXT://10.0.0.53:9092

num.network.threads=3

num.io.threads=8

socket.send.buffer.bytes=102400

socket.receive.buffer.bytes=102400

socket.request.max.bytes=104857600

log.dirs=/opt/kafka/logs

num.partitions=1

num.recovery.threads.per.data.dir=1

offsets.topic.replication.factor=1

transaction.state.log.replication.factor=1

transaction.state.log.min.isr=1

log.retention.hours=24

log.segment.bytes=1073741824

log.retention.check.interval.ms=300000

zookeeper.connect=10.0.0.51:2181,10.0.0.52:2181,10.0.0.53:2181

zookeeper.connection.timeout.ms=6000

group.initial.rebalance.delay.ms=0

EOF

7. Start in the foreground to test

/opt/kafka/bin/kafka-server-start.sh /opt/kafka/config/server.properties

Check whether the last lines of output show that the server started.

8. Verify the processes

jps

Three processes should be listed.

9. Test creating a topic

/opt/kafka/bin/kafka-topics.sh --create --zookeeper 10.0.0.51:2181,10.0.0.52:2181,10.0.0.53:2181 --partitions 3 --replication-factor 3 --topic kafkatest

10. Test describing the topic

/opt/kafka/bin/kafka-topics.sh --describe --zookeeper 10.0.0.51:2181,10.0.0.52:2181,10.0.0.53:2181 --topic kafkatest

11. Test deleting the topic

/opt/kafka/bin/kafka-topics.sh --delete --zookeeper 10.0.0.51:2181,10.0.0.52:2181,10.0.0.53:2181 --topic kafkatest

12. Test sending messages with the Kafka command-line tools

Create the topic

/opt/kafka/bin/kafka-topics.sh --create --zookeeper 10.0.0.51:2181,10.0.0.52:2181,10.0.0.53:2181 --partitions 3 --replication-factor 3 --topic messagetest

Test sending messages

/opt/kafka/bin/kafka-console-producer.sh --broker-list 10.0.0.51:9092,10.0.0.52:9092,10.0.0.53:9092 --topic messagetest

A > prompt appears.

Test receiving on another node

/opt/kafka/bin/kafka-console-consumer.sh --zookeeper 10.0.0.51:2181,10.0.0.52:2181,10.0.0.53:2181 --topic messagetest --from-beginning

List all topics

/opt/kafka/bin/kafka-topics.sh --list --zookeeper 10.0.0.51:2181,10.0.0.52:2181,10.0.0.53:2181

13. Once the tests pass, start Kafka in the background

/opt/kafka/bin/kafka-server-start.sh -daemon /opt/kafka/config/server.properties

14. Modify the Filebeat configuration file

cat >/etc/filebeat/filebeat.yml <<EOF
filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/log/nginx/access.log
  json.keys_under_root: true
  json.overwrite_keys: true
  tags: ["access"]
- type: log
  enabled: true
  paths:
    - /var/log/nginx/error.log
  tags: ["error"]
output.kafka:
  hosts: ["10.0.0.51:9092", "10.0.0.52:9092", "10.0.0.53:9092"]
  topic: 'filebeat'
setup.template.name: "nginx"
setup.template.pattern: "nginx_*"
setup.template.enabled: false
setup.template.overwrite: true
EOF

Restart Filebeat

systemctl restart filebeat

Access nginx and check whether Kafka received the logs

curl 10.0.0.51

/opt/kafka/bin/kafka-topics.sh --list --zookeeper 10.0.0.51:2181,10.0.0.52:2181,10.0.0.53:2181

/opt/kafka/bin/kafka-console-consumer.sh --zookeeper 10.0.0.51:2181,10.0.0.52:2181,10.0.0.53:2181 --topic filebeat --from-beginning

15. Modify the Logstash configuration file

cat >/etc/logstash/conf.d/kafka.conf <<EOF

input {

kafka{

bootstrap_servers=>["10.0.0.51:9092,10.0.0.52:9092,10.0.0.53:9092"]

topics=>["filebeat"]

#group_id=>"logstash"

codec => "json"

}

}

filter {

mutate {

convert => ["upstream_time", "float"]

convert => ["request_time", "float"]

}

}

output {

stdout {}

if "access" in [tags] {

elasticsearch {

hosts => "http://10.0.0.51:9200"

manage_template => false

index => "nginx_access-%{+yyyy.MM}"

}

}

if "error" in [tags] {

elasticsearch {

hosts => "http://10.0.0.51:9200"

manage_template => false

index => "nginx_error-%{+yyyy.MM}"

}

}

}

EOF

16. Start Logstash and test

1. Start in the foreground

/usr/share/logstash/bin/logstash -f /etc/logstash/conf.d/kafka.conf

2. Start in the background

systemctl start logstash

17. Remove unneeded fields in Logstash

Add remove_field inside the filter block:

filter {

mutate {

convert => ["upstream_time", "float"]

convert => ["request_time", "float"]

remove_field => [ "beat" ]

}

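}

The relationship between Kafka and ZooKeeper

ZooKeeper stores important znode information such as brokers and consumers.

Every Kafka node registers its machine's configuration in ZooKeeper.

The topic information of each registered Kafka node is then stored under the topics directory.

The ZooKeeper directory listing shows that ZooKeeper holds all the metadata of the Kafka cluster. So how are messages sent and received?

Sending and receiving in Kafka

Sending: the Kafka producer (code) specifies the broker addresses, and messages are sent directly to those addresses.

If the address is a list (several brokers), one available broker is picked at random. The Kafka machine that receives the message asks ZooKeeper which machine is the leader for that topic's partition; the leader then chooses where the data is stored, and the data is finally written.

Receiving: the Kafka consumer specifies the ZooKeeper address. On receiving the consume request, ZooKeeper reports it to the leader of the topic's partition, and the leader designates a follower to fetch the data and return it.

Details in ZooKeeper:

1. After starting, each broker registers an ephemeral broker registry in ZooKeeper containing the broker's IP address and port, plus the topics and partitions it stores.

2. After starting, each consumer registers an ephemeral consumer registry in ZooKeeper containing the consumer group it belongs to and the topics it subscribes to.

3. Each consumer group is associated with an ephemeral owner registry and a persistent offset registry.

For each subscribed partition there is an owner registry whose content is the id of the consumer subscribed to that partition.

There is also an offset registry whose content is the offset last consumed.

How to promote ELK inside your company

- Lead with what others gain: it lets them leave work earlier.

- Prepare the demo environment thoroughly so it can be shown at any time, with plenty of data and charts.

- Build separate dashboards for the development, back-end, front-end, operations, and DBA teams.

- Talk to each team lead individually and say you will solve their team's problems first.

- "Look, whenever you have a problem you have to come all the way over here. Once I set this up for you, a few mouse clicks will do. If anything is still wrong, just say the word and I will come to you."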