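1 GlusterFS storage cluster deployment

Note: the steps below are abbreviated. For details, see "Appendix 009. Kubernetes Persistent Storage: Standalone GlusterFS Deployment".

1.1 Architecture diagram

Omitted.

1.2 Planning

| Host | IP | Disk | Notes |
| --- | --- | --- | --- |
| k8smaster01 | 172.24.8.71 | none | Kubernetes master node |
| ... | ... | ... | ... |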

1.3 Install GlusterFS

    # yum -y install centos-release-gluster
    # yum -y install glusterfs-server
    # systemctl start glusterd
    # systemctl enable glusterd

Tip: installing on every node is recommended.

1.4 Add nodes to the trusted pool

    [root@k8snode01 ~]# gluster peer probe k8snode02
    [root@k8snode01 ~]# gluster peer probe k8snode03
    [root@k8snode01 ~]# gluster peer status    # show the trusted pool status
    [root@k8snode01 ~]# gluster pool list      # list the trusted pool members

Tip: run these commands once, on any one GlusterFS node.

1.5 Install heketi

    [root@k8smaster01 ~]# yum -y install heketi heketi-client

1.6 Configure heketi

    [root@k8smaster01 ~]# vi /etc/heketi/heketi.json

    {
      "_port_comment": "Heketi Server Port Number",
      "port": "8080",

      "_use_auth": "Enable JWT authorization. Please enable for deployment",
      "use_auth": true,

      "_jwt": "Private keys for access",
      "jwt": {
        "_admin": "Admin has access to all APIs",
        "admin": {
          "key": "admin123"
        },
        "_user": "User only has access to /volumes endpoint",
        "user": {
          "key": "xianghy"
        }
      },

      "_glusterfs_comment": "GlusterFS Configuration",
      "glusterfs": {
        "_executor_comment": [
          "Execute plugin. Possible choices: mock, ssh",
          "mock: This setting is used for testing and development.",
          " It will not send commands to any node.",
          "ssh: This setting will notify Heketi to ssh to the nodes.",
          " It will need the values in sshexec to be configured.",
          "kubernetes: Communicate with GlusterFS containers over",
          " Kubernetes exec api."
        ],
        "executor": "ssh",

        "_sshexec_comment": "SSH username and private key file information",
        "sshexec": {
          "keyfile": "/etc/heketi/heketi_key",
          "user": "root",
          "port": "22",
          "fstab": "/etc/fstab"
        },

        "_db_comment": "Database file name",
        "db": "/var/lib/heketi/heketi.db",

        "_loglevel_comment": [
          "Set log level. Choices are:",
          " none, critical, error, warning, info, debug",
          "Default is warning"
        ],
        "loglevel" : "warning"
      }
    }
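The sample configuration above uses short, guessable JWT keys. The following is a minimal sketch for generating random keys instead, assuming `/dev/urandom`, `head`, `od` and `tr` are available on the host. The helper name `gen_heketi_key` is hypothetical and not part of heketi.

```shell
#!/bin/sh
# Hypothetical helper: print N random bytes as a lowercase hex string.
# Assumes /dev/urandom, head, od and tr are available on the host.
gen_heketi_key() (
  bytes="${1:-16}"
  # od prints space-separated hex bytes; tr strips the spaces and newlines.
  head -c "$bytes" /dev/urandom | od -An -tx1 | tr -d ' \n'
  echo
)

# Candidate values for the "admin" and "user" keys in heketi.json.
echo "admin key: $(gen_heketi_key 16)"
echo "user key:  $(gen_heketi_key 16)"
```

If the admin key is changed, every place that uses `admin123` later in this walkthrough (the `heketi-cli` alias and the base64 value in the Kubernetes Secret) has to be updated to match.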

1.7 Configure passwordless SSH

    [root@k8smaster01 ~]# ssh-keygen -t rsa -q -f /etc/heketi/heketi_key -N ""
    [root@k8smaster01 ~]# chown heketi:heketi /etc/heketi/heketi_key
    [root@k8smaster01 ~]# ssh-copy-id -i /etc/heketi/heketi_key.pub root@k8snode01
    [root@k8smaster01 ~]# ssh-copy-id -i /etc/heketi/heketi_key.pub root@k8snode02
    [root@k8smaster01 ~]# ssh-copy-id -i /etc/heketi/heketi_key.pub root@k8snode03

1.8 Start heketi

    [root@k8smaster01 ~]# systemctl enable heketi.service
    [root@k8smaster01 ~]# systemctl start heketi.service
    [root@k8smaster01 ~]# systemctl status heketi.service
    [root@k8smaster01 ~]# curl http://localhost:8080/hello    # test access

1.9 Configure the Heketi topology

    [root@k8smaster01 ~]# vi /etc/heketi/topology.json

    {
      "clusters": [
        {
          "nodes": [
            {
              "node": {
                "hostnames": {
                  "manage": [
                    "k8snode01"
                  ],
                  "storage": [
                    "172.24.8.74"
                  ]
                },
                "zone": 1
              },
              "devices": [
                "/dev/sdb"
              ]
            },
            {
              "node": {
                "hostnames": {
                  "manage": [
                    "k8snode02"
                  ],
                  "storage": [
                    "172.24.8.75"
                  ]
                },
                "zone": 1
              },
              "devices": [
                "/dev/sdb"
              ]
            },
            {
              "node": {
                "hostnames": {
                  "manage": [
                    "k8snode03"
                  ],
                  "storage": [
                    "172.24.8.76"
                  ]
                },
                "zone": 1
              },
              "devices": [
                "/dev/sdb"
              ]
            }
          ]
        }
      ]
    }

    [root@k8smaster01 ~]# echo "export HEKETI_CLI_SERVER=http://k8smaster01:8080" >> /etc/profile.d/heketi.sh
    [root@k8smaster01 ~]# echo "alias heketi-cli='heketi-cli --user admin --secret admin123'" >> .bashrc
    [root@k8smaster01 ~]# source /etc/profile.d/heketi.sh
    [root@k8smaster01 ~]# source .bashrc
    [root@k8smaster01 ~]# echo $HEKETI_CLI_SERVER
    http://k8smaster01:8080
    [root@k8smaster01 ~]# heketi-cli --server $HEKETI_CLI_SERVER --user admin --secret admin123 topology load --json=/etc/heketi/topology.json
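The topology file repeats the same structure for every node, so it can be generated rather than typed by hand. This is a minimal sketch, assuming a single cluster, zone 1 for all nodes, and the same raw device on each node. The helper name `gen_topology` and the `DEVICE` variable are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: print a Heketi topology for "hostname:storage-ip" arguments.
# Assumes one cluster, zone 1, and the same raw device on every node.
gen_topology() (
  device="${DEVICE:-/dev/sdb}"
  first=1
  printf '{\n  "clusters": [\n    {\n      "nodes": [\n'
  for entry in "$@"; do
    # Separate node objects with a comma, except before the first one.
    [ "$first" -eq 1 ] || printf ',\n'
    first=0
    host="${entry%%:*}"   # text before the first colon
    ip="${entry#*:}"      # text after the first colon
    printf '        {"node": {"hostnames": {"manage": ["%s"], "storage": ["%s"]}, "zone": 1}, "devices": ["%s"]}' \
      "$host" "$ip" "$device"
  done
  printf '\n      ]\n    }\n  ]\n}\n'
)

# The three storage nodes used in this walkthrough.
gen_topology k8snode01:172.24.8.74 k8snode02:172.24.8.75 k8snode03:172.24.8.76
```

Redirecting the output to `/etc/heketi/topology.json` gives a file that can be loaded with the same `heketi-cli topology load` command shown above.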

1.10 Cluster management and testing

    [root@heketi ~]# heketi-cli cluster list     # list clusters
    [root@heketi ~]# heketi-cli node list        # list nodes
    [root@heketi ~]# heketi-cli volume list      # list volumes
    [root@k8snode01 ~]# gluster volume info      # inspect from a GlusterFS node

1.11 Create the StorageClass

    [root@k8smaster01 study]# vi heketi-secret.yaml

    apiVersion: v1
    kind: Secret
    metadata:
      name: heketi-secret
      namespace: heketi
    data:
      key: YWRtaW4xMjM=
    type: kubernetes.io/glusterfs

    [root@k8smaster01 study]# kubectl create ns heketi
    [root@k8smaster01 study]# kubectl create -f heketi-secret.yaml    # create the heketi secret
    [root@k8smaster01 study]# kubectl get secrets -n heketi
    [root@k8smaster01 study]# vim gluster-heketi-storageclass.yaml    # define the StorageClass

    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      name: ghstorageclass
    parameters:
      resturl: "http://172.24.8.71:8080"
      clusterid: "ad0f81f75f01d01ebd6a21834a2caa30"
      restauthenabled: "true"
      restuser: "admin"
      secretName: "heketi-secret"
      secretNamespace: "heketi"
      volumetype: "replicate:3"
    provisioner: kubernetes.io/glusterfs
    reclaimPolicy: Delete

    [root@k8smaster01 study]# kubectl create -f gluster-heketi-storageclass.yaml

Note: a StorageClass cannot be modified after it is created. To change it, delete it and create it again.

    [root@k8smaster01 heketi]# kubectl get storageclasses    # verify
    NAME             PROVISIONER               AGE
    ghstorageclass   kubernetes.io/glusterfs   85s
    [root@k8smaster01 heketi]# kubectl describe storageclasses ghstorageclass
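The `key` field of the Secret holds the base64 encoding of the heketi admin key (`admin123` in this walkthrough). The `clusterid` in the StorageClass is specific to each installation and comes from `heketi-cli cluster list`. Below is a small sketch of how the Secret value can be produced; the helper name `b64_key` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: base64-encode a heketi key for the Secret's data.key field.
# printf '%s' is used instead of echo so that no trailing newline gets encoded.
b64_key() (
  printf '%s' "$1" | base64
)

b64_key admin123   # prints YWRtaW4xMjM=
```

Encoding with a plain `echo admin123 | base64` would include the trailing newline and produce a different value, which is a common cause of authentication failures between the provisioner and heketi.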

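2 Cluster monitoring: Metrics

Note: the steps below are abbreviated. For details, see "049. Cluster Management: Cluster Monitoring with Metrics".

2.1 Enable the aggregation layer

Enable the aggregation layer. Clusters deployed with kubeadm have it enabled by default; verify as follows.

    [root@k8smaster01 ~]# cat /etc/kubernetes/manifests/kube-apiserver.yaml

2.2 Get the deployment files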

    [root@k8smaster01 ~]# git clone https://github.com/kubernetes-incubator/metrics-server.git
    [root@k8smaster01 ~]# cd metrics-server/deploy/1.8+/
    [root@k8smaster01 1.8+]# vi metrics-server-deployment.yaml

    ……
            image: mirrorgooglecontainers/metrics-server-amd64:v0.3.6    # changed to a mirror reachable from mainland China
            command:
            - /metrics-server
            - --metric-resolution=30s
            - --kubelet-insecure-tls
            - --kubelet-preferred-address-types=InternalIP,Hostname,InternalDNS,ExternalDNS,ExternalIP    # add the command block above
    ……
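Before deploying, the aggregation-layer check from section 2.1 can be done with `grep` instead of reading the whole manifest. This sketch assumes that finding the request-header and proxy-client flags in the kube-apiserver manifest is enough to confirm the feature is on. The helper name `check_aggregation_flags` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: report whether a kube-apiserver manifest carries the
# aggregation-layer flags. The function fails if any flag is missing.
check_aggregation_flags() (
  manifest="${1:-/etc/kubernetes/manifests/kube-apiserver.yaml}"
  missing=0
  for flag in --requestheader-client-ca-file --proxy-client-cert-file --proxy-client-key-file; do
    # -e is required because the pattern itself starts with dashes.
    if grep -q -e "$flag" "$manifest"; then
      echo "found:   $flag"
    else
      echo "missing: $flag"
      missing=1
    fi
  done
  # The body runs in a subshell, so exit only ends this function call.
  exit "$missing"
)

# Usage on a master node:
#   check_aggregation_flags /etc/kubernetes/manifests/kube-apiserver.yaml
```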

2.3 Deploy

    [root@k8smaster01 1.8+]# kubectl apply -f .
    [root@k8smaster01 1.8+]# kubectl -n kube-system get pods -l k8s-app=metrics-server
    [root@k8smaster01 1.8+]# kubectl -n kube-system logs -l k8s-app=metrics-server -f    # follow the deployment logs

2.4 Verify

    [root@k8smaster01 ~]# kubectl top nodes
    [root@k8smaster01 ~]# kubectl top pods --all-namespaces

3 Prometheus deployment

Note: the steps below are abbreviated. For details, see "050. Cluster Management: Prometheus + Grafana Monitoring Solution".

3.1 Get the deployment files

    [root@k8smaster01 ~]# git clone https://github.com/prometheus/prometheus

3.2 Create the namespace

    [root@k8smaster01 ~]# cd prometheus/documentation/examples/
    [root@k8smaster01 examples]# vi monitor-namespace.yaml

    apiVersion: v1
    kind: Namespace
    metadata:
      name: monitoring

    [root@k8smaster01 examples]# kubectl create -f monitor-namespace.yaml

3.3 Create RBAC

    [root@k8smaster01 examples]# vi rbac-setup.yml

    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      name: prometheus
    rules:
    - apiGroups: [""]
      resources:
      - nodes
      - nodes/proxy
      - services
      - endpoints
      - pods
      verbs: ["get", "list", "watch"]
    - apiGroups:
      - extensions
      resources:
      - ingresses
      verbs: ["get", "list", "watch"]
    - nonResourceURLs: ["/metrics"]
      verbs: ["get"]
    ---
    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: prometheus
      namespace: monitoring    # only the namespace needs changing
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRoleBinding
    metadata:
      name: prometheus
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: prometheus
    subjects:
    - kind: ServiceAccount
      name: prometheus
      namespace: monitoring    # only the namespace needs changing

    [root@k8smaster01 examples]# kubectl create -f rbac-setup.yml
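Once the RBAC objects exist, the binding can be checked with `kubectl auth can-i` by impersonating the ServiceAccount. This is a sketch only: it assumes the current kubectl user is allowed to impersonate (cluster-admin is), and the helper name `check_prometheus_rbac` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: ask the API server whether the prometheus ServiceAccount
# may list each core resource named in the ClusterRole.
# Needs a working kubectl context with impersonation rights.
check_prometheus_rbac() (
  sa="system:serviceaccount:${1:-monitoring}:prometheus"
  failed=0
  for resource in nodes services endpoints pods; do
    # can-i prints "yes" or "no"; it exits non-zero on "no", hence "|| true".
    answer=$(kubectl auth can-i list "$resource" --as="$sa" 2>/dev/null) || true
    echo "list $resource: ${answer:-error}"
    [ "$answer" = "yes" ] || failed=1
  done
  # The body runs in a subshell, so exit only ends this function call.
  exit "$failed"
)

# Usage:
#   check_prometheus_rbac monitoring
```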

3.4 Create the Prometheus ConfigMap

    [root@k8smaster01 examples]# cat prometheus-kubernetes.yml | grep -v ^$ | grep -v "#" >> prometheus-config.yaml
    [root@k8smaster01 examples]# vi prometheus-config.yaml

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: prometheus-server-conf
      labels:
        name: prometheus-server-conf
      namespace: monitoring    # change the namespace
    ……

    [root@k8smaster01 examples]# kubectl create -f prometheus-config.yaml
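The step above appends the stripped Prometheus configuration to a file and then wraps it in a ConfigMap by hand. The sketch below does both in one pass. It assumes the data key is `prometheus.yml`, inferred from the `--config.file=/etc/prometheus/prometheus.yml` argument in section 3.6, since the original elides that part of the file. The helper name `wrap_configmap` is hypothetical. Unlike `grep -v "#"`, it drops only whole-line comments, so lines that merely contain a `#` are kept.

```shell
#!/bin/sh
# Hypothetical helper: wrap a Prometheus config file in a ConfigMap manifest.
# Assumption: the data key is prometheus.yml, matching --config.file in section 3.6.
wrap_configmap() (
  src="$1"
  cat <<'EOF'
apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-server-conf
  labels:
    name: prometheus-server-conf
  namespace: monitoring
data:
  prometheus.yml: |-
EOF
  # Drop blank lines and whole-line comments only, then indent under the key.
  grep -v -e '^[[:space:]]*$' -e '^[[:space:]]*#' "$src" | sed 's/^/    /'
)

# Usage:
#   wrap_configmap prometheus-kubernetes.yml > prometheus-config.yaml
```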

3.5 Create the persistent volume claim

    [root@k8smaster01 examples]# vi prometheus-pvc.yaml

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: prometheus-pvc
      namespace: monitoring
      annotations:
        volume.beta.kubernetes.io/storage-class: ghstorageclass
    spec:
      accessModes:
      - ReadWriteMany
      resources:
        requests:
          storage: 5Gi

    [root@k8smaster01 examples]# kubectl create -f prometheus-pvc.yaml
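Dynamic provisioning through heketi takes a moment, so it helps to confirm the claim is bound before deploying Prometheus. Here is a minimal polling sketch. The helper name `wait_pvc_bound` and the `POLL_SECONDS` variable are hypothetical, and the default attempt count is arbitrary.

```shell
#!/bin/sh
# Hypothetical helper: poll a PVC until its phase is Bound or the attempts run out.
wait_pvc_bound() (
  pvc="$1"
  ns="${2:-monitoring}"
  tries="${3:-30}"
  i=0
  while [ "$i" -lt "$tries" ]; do
    phase=$(kubectl get pvc "$pvc" -n "$ns" -o jsonpath='{.status.phase}' 2>/dev/null) || true
    if [ "$phase" = "Bound" ]; then
      echo "$pvc is Bound"
      exit 0
    fi
    i=$((i + 1))
    sleep "${POLL_SECONDS:-2}"
  done
  echo "$pvc not Bound after $tries attempts (last phase: ${phase:-unknown})" >&2
  exit 1
)

# Usage:
#   wait_pvc_bound prometheus-pvc monitoring
```

If the claim stays in `Pending`, `kubectl describe pvc prometheus-pvc -n monitoring` usually shows the provisioner's error, for example a wrong `clusterid` or Secret value in the StorageClass.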

3.6 Deploy Prometheus

    [root@k8smaster01 examples]# vi prometheus-deployment.yml

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      labels:
        name: prometheus-deployment
      name: prometheus-server
      namespace: monitoring
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: prometheus-server
      template:
        metadata:
          labels:
            app: prometheus-server
        spec:
          containers:
            - name: prometheus-server
              image: prom/prometheus:v2.14.0
              command:
              - "/bin/prometheus"
              args:
              - "--config.file=/etc/prometheus/prometheus.yml"
              - "--storage.tsdb.path=/prometheus/"
              - "--storage.tsdb.retention=72h"
              ports:
              - containerPort: 9090
                protocol: TCP
              volumeMounts:
              - name: prometheus-config-volume
                mountPath: /etc/prometheus/
              - name: prometheus-storage-volume
                mountPath: /prometheus/
          serviceAccountName: prometheus
          imagePullSecrets:
            - name: regsecret
          volumes:
            - name: prometheus-config-volume
              configMap:
                defaultMode: 420
                name: prometheus-server-conf
            - name: prometheus-storage-volume
              persistentVolumeClaim:
                claimName: prometheus-pvc

    [root@k8smaster01 examples]# kubectl create -f prometheus-deployment.yml

3.7 Create the Prometheus Service

    [root@k8smaster01 examples]# vi prometheus-service.yaml

    apiVersion: v1
    kind: Service
    metadata:
      labels:
        app: prometheus-service
      name: prometheus-service
      namespace: monitoring
    spec:
      type: NodePort
      selector:
        app: prometheus-server
      ports:
        - port: 9090
          targetPort: 9090
          nodePort: 30001

    [root@k8smaster01 examples]# kubectl create -f prometheus-service.yaml
    [root@k8smaster01 examples]# kubectl get all -n monitoring

3.8 Verify Prometheus

Open in a browser: http://172.24.8.100:30001/
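The same check can be scripted against the management endpoints that Prometheus 2.x serves, `/-/healthy` and `/-/ready`. This is a small sketch, assuming `curl` is installed and the NodePort address above is reachable from where it runs. The helper name `prom_check` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: probe the Prometheus management endpoints.
# /-/healthy and /-/ready are served by Prometheus 2.x itself.
prom_check() (
  base="${1%/}"   # strip one trailing slash, if any
  for path in /-/healthy /-/ready; do
    if curl -fsS --max-time 5 "$base$path" >/dev/null; then
      echo "ok:   $path"
    else
      echo "fail: $path"
      exit 1
    fi
  done
)

# Usage:
#   prom_check http://172.24.8.100:30001/
```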

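4 Grafana deployment

Note: the steps below are abbreviated. For details, see "050. Cluster Management: Prometheus + Grafana Monitoring Solution".

4.1 Get the deployment files

    [root@k8smaster01 ~]# git clone https://github.com/liukuan73/kubernetes-addons
    [root@k8smaster01 ~]# cd /root/kubernetes-addons/monitor/prometheus+grafana

4.2 Create the persistent volume claim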

    [root@k8smaster01 prometheus+grafana]# vi grafana-data-pvc.yaml

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: grafana-data-pvc
      namespace: monitoring
      annotations:
        volume.beta.kubernetes.io/storage-class: ghstorageclass
    spec:
      accessModes:
      - ReadWriteOnce
      resources:
        requests:
          storage: 5Gi

    [root@k8smaster01 prometheus+grafana]# kubectl create -f grafana-data-pvc.yaml

4.3 Deploy Grafana

    [root@k8smaster01 prometheus+grafana]# vi grafana.yaml

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: monitoring-grafana
      namespace: monitoring
    spec:
      replicas: 1
      selector:
        matchLabels:
          k8s-app: grafana
      template:
        metadata:
          labels:
            task: monitoring
            k8s-app: grafana
        spec:
          containers:
          - name: grafana
            image: grafana/grafana:6.5.0
            imagePullPolicy: IfNotPresent
            ports:
            - containerPort: 3000
              protocol: TCP
            volumeMounts:
            - mountPath: /var/lib/grafana
              name: grafana-storage
            env:
            - name: INFLUXDB_HOST
              value: monitoring-influxdb
            - name: GF_SERVER_HTTP_PORT
              value: "3000"
            - name: GF_AUTH_BASIC_ENABLED
              value: "false"
            - name: GF_AUTH_ANONYMOUS_ENABLED
              value: "true"
            - name: GF_AUTH_ANONYMOUS_ORG_ROLE
              value: Admin
            - name: GF_SERVER_ROOT_URL
              value: /
            readinessProbe:
              httpGet:
                path: /login
                port: 3000
          volumes:
          - name: grafana-storage
            persistentVolumeClaim:
              claimName: grafana-data-pvc
          nodeSelector:
            node-role.kubernetes.io/master: "true"
          tolerations:
          - key: "node-role.kubernetes.io/master"
            effect: "NoSchedule"
    ---
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        kubernetes.io/cluster-service: 'true'
        kubernetes.io/name: monitoring-grafana
      annotations:
        prometheus.io/scrape: 'true'
        prometheus.io/tcp-probe: 'true'
        prometheus.io/tcp-probe-port: '80'
      name: monitoring-grafana
      namespace: monitoring
    spec:
      type: NodePort
      ports:
      - port: 80
        targetPort: 3000
        nodePort: 30002
      selector:
        k8s-app: grafana

    [root@k8smaster01 prometheus+grafana]# kubectl label nodes k8smaster01 node-role.kubernetes.io/master=true
    [root@k8smaster01 prometheus+grafana]# kubectl label nodes k8smaster02 node-role.kubernetes.io/master=true
    [root@k8smaster01 prometheus+grafana]# kubectl label nodes k8smaster03 node-role.kubernetes.io/master=true
    [root@k8smaster01 prometheus+grafana]# kubectl create -f grafana.yaml
    [root@k8smaster01 examples]# kubectl get all -n monitoring

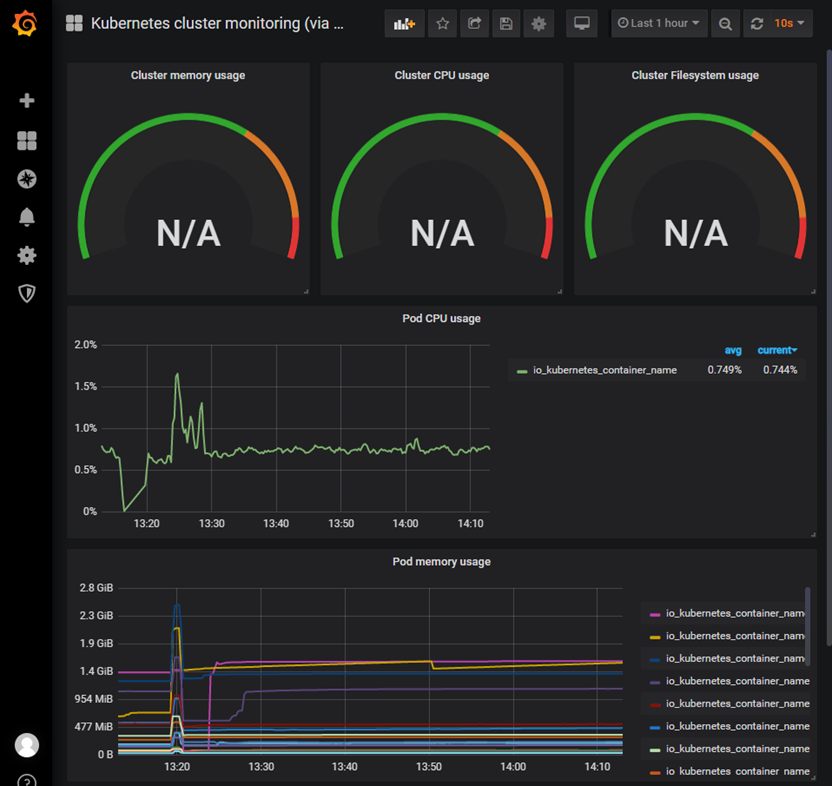

4.4 Verify Grafana

Open in a browser: http://172.24.8.100:30002/

4.5 Configure Grafana

- Add a data source: omitted

- Create users: omitted

4.6 View monitoring data

Open http://172.24.8.100:30002/ in the browser again.
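The data source step is omitted in this walkthrough. As one possible way to do it without the UI, the sketch below posts to Grafana's `/api/datasources` HTTP endpoint. It relies on several assumptions: anonymous access with the Admin role is still enabled as in `grafana.yaml`, so no credentials are sent; Prometheus is reachable inside the cluster at `http://prometheus-service.monitoring.svc:9090`, a name derived from the Service in section 3.7 and default cluster DNS; and the data source name `prometheus` is arbitrary. The helper name `add_prometheus_datasource` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: register Prometheus as a Grafana data source over the HTTP API.
# Assumes anonymous Admin access is enabled, so no credentials are sent.
add_prometheus_datasource() (
  grafana="${1%/}"   # strip one trailing slash, if any
  prom_url="${2:-http://prometheus-service.monitoring.svc:9090}"
  payload=$(printf '{"name":"prometheus","type":"prometheus","access":"proxy","url":"%s","isDefault":true}' "$prom_url")
  curl -fsS -X POST -H 'Content-Type: application/json' \
    -d "$payload" "$grafana/api/datasources"
)

# Usage:
#   add_prometheus_datasource http://172.24.8.100:30002/
```

With `access` set to `proxy`, the Grafana pod itself queries Prometheus, which is why a cluster-internal Service name works here even though browsers outside the cluster cannot resolve it.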