Linear regression on data with TensorFlow

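Import the required libraries

    # Written against the TensorFlow 1.x graph API (tf.placeholder, tf.Session)
    import tensorflow as tf
    import numpy
    import matplotlib.pyplot as plt

    rng = numpy.random

Parameter settings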

    learning_rate = 0.01
    training_epochs = 1000
    display_step = 50

Training data

    train_X = numpy.asarray([3.3,4.4,5.5,6.71,6.93,4.168,9.779,6.182,7.59,2.167,
                             7.042,10.791,5.313,7.997,5.654,9.27,3.1])
    train_Y = numpy.asarray([1.7,2.76,2.09,3.19,1.694,1.573,3.366,2.596,2.53,1.221,
                             2.827,3.465,1.65,2.904,2.42,2.94,1.3])
    n_samples = train_X.shape[0]

TensorFlow graph inputs

    X = tf.placeholder("float")
    Y = tf.placeholder("float")

Set the weight and bias

    W = tf.Variable(rng.randn(), name="weight")
    b = tf.Variable(rng.randn(), name="bias")

Build the linear model

    pred = tf.add(tf.multiply(X, W), b)

Mean squared error

    # Half the mean squared error: sum of squared residuals over 2 * n_samples
    cost = tf.reduce_sum(tf.pow(pred-Y, 2))/(2*n_samples)

Gradient descent
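Written out, the cost defined above and the update that plain gradient descent applies to it look as follows. The symbols $n$, $\alpha$ and $\hat{y}_i$ are shorthand introduced here for `n_samples`, `learning_rate` and `pred`; they do not appear in the original code.

```latex
\begin{aligned}
\hat{y}_i &= W x_i + b \\
J(W, b) &= \frac{1}{2n} \sum_{i=1}^{n} (\hat{y}_i - y_i)^2 \\
\frac{\partial J}{\partial W} &= \frac{1}{n} \sum_{i=1}^{n} (\hat{y}_i - y_i)\, x_i, \qquad
\frac{\partial J}{\partial b} = \frac{1}{n} \sum_{i=1}^{n} (\hat{y}_i - y_i) \\
W &\leftarrow W - \alpha \frac{\partial J}{\partial W}, \qquad
b \leftarrow b - \alpha \frac{\partial J}{\partial b}
\end{aligned}
```

When a single sample is fed per step, as the training loop in this tutorial does, the sum has one term but the divisor stays at `n_samples`, so each step is scaled by 1/17 relative to the raw per-sample gradient.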

    optimizer = tf.train.GradientDescentOptimizer(learning_rate).minimize(cost)

Initialize the variables

    init = tf.global_variables_initializer()

Start training
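Each step of the training loop in this section feeds one (x, y) pair to the optimizer. A minimal sketch of that same update in plain Python, with no TensorFlow dependency, may make the mechanics easier to follow. The helper names `cost` and `sgd_fit` and the zero starting values are assumptions made for illustration; the original code starts from random values drawn with `rng.randn()`.

```python
# Training data copied from the tutorial.
train_X = [3.3, 4.4, 5.5, 6.71, 6.93, 4.168, 9.779, 6.182, 7.59, 2.167,
           7.042, 10.791, 5.313, 7.997, 5.654, 9.27, 3.1]
train_Y = [1.7, 2.76, 2.09, 3.19, 1.694, 1.573, 3.366, 2.596, 2.53, 1.221,
           2.827, 3.465, 1.65, 2.904, 2.42, 2.94, 1.3]


def cost(w, b, xs, ys):
    """Half mean squared error, the same formula as the TensorFlow cost op."""
    n = len(xs)
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / (2 * n)


def sgd_fit(xs, ys, learning_rate=0.01, epochs=1000, w=0.0, b=0.0):
    """Per-sample gradient descent (hypothetical helper, not from the source)."""
    n = len(xs)
    for _ in range(epochs):
        for x, y in zip(xs, ys):
            err = w * x + b - y
            # Gradient of err**2 / (2 * n) for this single sample.
            # Both gradients are computed before either parameter changes.
            grad_w = err * x / n
            grad_b = err / n
            w -= learning_rate * grad_w
            b -= learning_rate * grad_b
    return w, b


w, b = sgd_fit(train_X, train_Y)
print("W=", w, "b=", b, "cost=", cost(w, b, train_X, train_Y))
```

Because the starting values differ from the original's random draw, the printed numbers will not match the tutorial's log exactly.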

with tf.Session() as sess:

sess.run(init)

# 適合所有訓練數據

for epoch in range(training_epochs):

for (x, y) in zip(train_X, train_Y):

sess.run(optimizer, feed_dict={X: x, Y: y})

# 顯示每個紀元步驟的日誌

if (epoch+1) % display_step == 0:

c = sess.run(cost, feed_dict={X: train_X, Y:train_Y})

print("Epoch:", '%04d' % (epoch+1), "cost=", "{:.9f}".format(c), \

"W=", sess.run(W), "b=", sess.run(b))

print("Optimization Finished!")

training_cost = sess.run(cost, feed_dict={X: train_X, Y: train_Y})

print("Training cost=", training_cost, "W=", sess.run(W), "b=", sess.run(b), '\n')

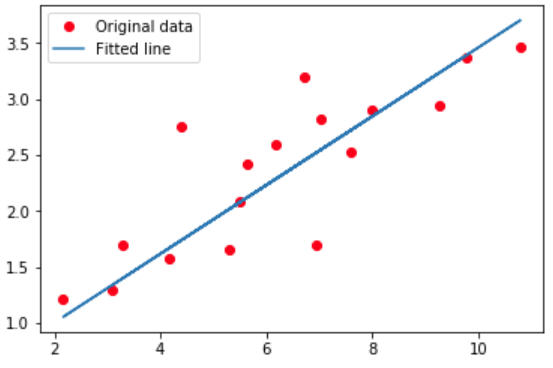

# 畫圖顯示

plt.plot(train_X, train_Y, 'ro', label='Original data')

plt.plot(train_X, sess.run(W) * train_X + sess.run(b), label='Fitted line')

plt.legend()

plt.show()結果展示

    Epoch: 0050 cost= 0.183995649 W= 0.43250677 b= -0.5143978
    Epoch: 0100 cost= 0.171630666 W= 0.42162812 b= -0.43613702
    Epoch: 0150 cost= 0.160693780 W= 0.41139638 b= -0.36253116
    Epoch: 0200 cost= 0.151019916 W= 0.40177315 b= -0.2933027
    Epoch: 0250 cost= 0.142463341 W= 0.39272234 b= -0.22819161
    Epoch: 0300 cost= 0.134895071 W= 0.3842099 b= -0.16695316
    Epoch: 0350 cost= 0.128200993 W= 0.37620357 b= -0.10935676
    Epoch: 0400 cost= 0.122280121 W= 0.36867347 b= -0.055185713
    Epoch: 0450 cost= 0.117043234 W= 0.36159125 b= -0.004236537
    Epoch: 0500 cost= 0.112411365 W= 0.3549302 b= 0.04368245
    Epoch: 0550 cost= 0.108314596 W= 0.34866524 b= 0.08875148
    Epoch: 0600 cost= 0.104691163 W= 0.34277305 b= 0.13114017
    Epoch: 0650 cost= 0.101486407 W= 0.33723122 b= 0.17100765
    Epoch: 0700 cost= 0.098651998 W= 0.33201888 b= 0.20850417
    Epoch: 0750 cost= 0.096145160 W= 0.32711673 b= 0.24377018
    Epoch: 0800 cost= 0.093927994 W= 0.32250607 b= 0.27693948
    Epoch: 0850 cost= 0.091967128 W= 0.31816947 b= 0.308136
    Epoch: 0900 cost= 0.090232961 W= 0.31409115 b= 0.33747625
    Epoch: 0950 cost= 0.088699281 W= 0.31025505 b= 0.36507198
    Epoch: 1000 cost= 0.087342896 W= 0.30664718 b= 0.39102668
    Optimization Finished!
    Training cost= 0.087342896 W= 0.30664718 b= 0.39102668
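The log shows `W` and `b` still drifting at epoch 1000, which suggests the run had not fully converged. One way to check is to compare against the closed-form least-squares solution, sketched here in plain Python. The helper names `least_squares_fit` and `cost` are assumptions for illustration; the original tutorial does not include this step.

```python
# Training data copied from the tutorial.
train_X = [3.3, 4.4, 5.5, 6.71, 6.93, 4.168, 9.779, 6.182, 7.59, 2.167,
           7.042, 10.791, 5.313, 7.997, 5.654, 9.27, 3.1]
train_Y = [1.7, 2.76, 2.09, 3.19, 1.694, 1.573, 3.366, 2.596, 2.53, 1.221,
           2.827, 3.465, 1.65, 2.904, 2.42, 2.94, 1.3]

# Final values reported in the training log.
LOGGED_W, LOGGED_B = 0.30664718, 0.39102668


def cost(w, b, xs, ys):
    """Half mean squared error, the same formula as the TensorFlow cost op."""
    n = len(xs)
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / (2 * n)


def least_squares_fit(xs, ys):
    """Closed-form slope and intercept for a one-variable linear fit."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    w = sxy / sxx
    b = mean_y - w * mean_x
    return w, b


w_opt, b_opt = least_squares_fit(train_X, train_Y)
logged_cost = cost(LOGGED_W, LOGGED_B, train_X, train_Y)
optimal_cost = cost(w_opt, b_opt, train_X, train_Y)

print("closed form: W=", w_opt, "b=", b_opt, "cost=", optimal_cost)
print("logged run:  W=", LOGGED_W, "b=", LOGGED_B, "cost=", logged_cost)
```

On this data the closed-form slope comes out near 0.25 and the intercept near 0.80, with a lower cost than the logged 0.0873, so more epochs or a larger learning rate would likely move the fitted line closer to the optimum.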

Reference:

Author: Aymeric Damien

Project: https://github.com/aymericdamien/TensorFlow-Examples/