Hive作為大數據環境下的數據倉庫工具,支持基於hadoop以sql的方式執行mapreduce的任務,非常適合對大量的數據進行全量的查詢分析。 本文主要講述下hive載cli中如何導入導出數據: 導入數據 第一種方式,直接從本地文件系統導入數據 我的本機有一個test1.txt文件,這個文件中有三 ...

Hive作為大數據環境下的數據倉庫工具,支持基於hadoop以sql的方式執行mapreduce的任務,非常適合對大量的數據進行全量的查詢分析。

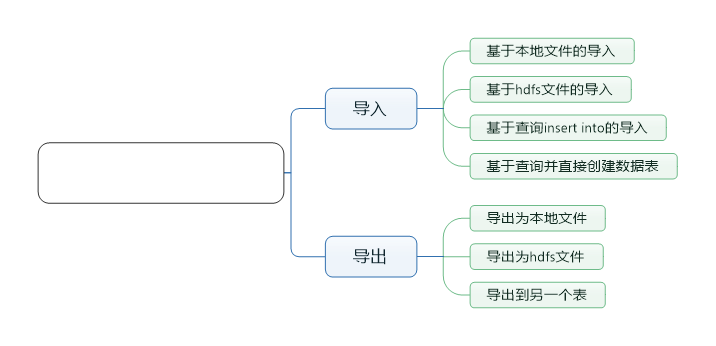

本文主要講述下hive載cli中如何導入導出數據:

導入數據

第一種方式,直接從本地文件系統導入數據

我的本機有一個test1.txt文件,這個文件中有三列數據,並且每列都是以'\t'為分隔

[root@localhost conf]# cat /usr/tmp/test1.txt

1 a1 b1

2 a2 b2

3 a3 b3

4 a4 b創建數據表:

>create table test1(a string,b string,c string)

>row format delimited

>fields terminated by '\t'

>stored as textfile;導入數據:

load data local inpath '/usr/tmp/test1.txt' overwrite into table test1;其中local inpath,表明路徑為本機路徑

overwrite表示載入的數據會覆蓋原來的內容

第二種,從hdfs文件中導入數據

首先上傳數據到hdfs中

hadoop fs -put /usr/tmp/test1.txt /test1.txt在hive中查看test1.txt文件

hive> dfs -cat /test1.txt;

1 a1 b1

2 a2 b2

3 a3 b3

4 a4 b4

創建數據表,與前面一樣。導入數據的命令有些差異:

load data inpath '/test1.txt' overwrite into table test2;第三種,基於查詢insert into導入

首先定義數據表,這裡直接創建帶有分區的表

hive> create table test3(a string,b string,c string) partitioned by (d string) row format delimited fields terminated by '\t' stored as textfile;

OK

Time taken: 0.109 seconds

hive> describe test3;

OK

a string

b string

c string

d string

# Partition Information

# col_name data_type comment

d string

Time taken: 0.071 seconds, Fetched: 9 row(s)通過查詢直接導入數據到固定的分區表中:

hive> insert into table test3 partition(d='aaaaaa') select * from test2;

WARNING: Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

Query ID = root_20160823212718_9cfdbea4-42fa-4267-ac46-9ac2c357f944

Total jobs = 3

Launching Job 1 out of 3

Number of reduce tasks is set to 0 since there's no reduce operator

Job running in-process (local Hadoop)

2016-08-23 21:27:21,621 Stage-1 map = 100%, reduce = 0%

Ended Job = job_local1550375778_0001

Stage-4 is selected by condition resolver.

Stage-3 is filtered out by condition resolver.

Stage-5 is filtered out by condition resolver.

Moving data to directory hdfs://localhost:8020/user/hive/warehouse/test.db/test3/d=aaaaaa/.hive-staging_hive_2016-08-23_21-27-18_739_4058721562930266873-1/-ext-10000

Loading data to table test.test3 partition (d=aaaaaa)

MapReduce Jobs Launched:

Stage-Stage-1: HDFS Read: 248 HDFS Write: 175 SUCCESS

Total MapReduce CPU Time Spent: 0 msec

OK

Time taken: 3.647 seconds通過查詢觀察結果

hive> select * from test3;

OK

1 a1 b1 aaaaaa

2 a2 b2 aaaaaa

3 a3 b3 aaaaaa

4 a4 b4 aaaaaa

Time taken: 0.264 seconds, Fetched: 4 row(s)PS:也可以直接通過動態分區插入數據:

insert into table test4 partition(c) select * from test2;分區會以文件夾命名的方式存儲:

hive> dfs -ls /user/hive/warehouse/test.db/test4/;

Found 4 items

drwxr-xr-x - root supergroup 0 2016-08-23 21:33 /user/hive/warehouse/test.db/test4/c=b1

drwxr-xr-x - root supergroup 0 2016-08-23 21:33 /user/hive/warehouse/test.db/test4/c=b2

drwxr-xr-x - root supergroup 0 2016-08-23 21:33 /user/hive/warehouse/test.db/test4/c=b3

drwxr-xr-x - root supergroup 0 2016-08-23 21:33 /user/hive/warehouse/test.db/test4/c=b4第四種,直接基於查詢創建數據表

直接通過查詢創建數據表:

hive> create table test5 as select * from test4;

WARNING: Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

Query ID = root_20160823213944_03672168-bc56-43d7-aefb-cac03a6558bf

Total jobs = 3

Launching Job 1 out of 3

Number of reduce tasks is set to 0 since there's no reduce operator

Job running in-process (local Hadoop)

2016-08-23 21:39:46,030 Stage-1 map = 100%, reduce = 0%

Ended Job = job_local855333165_0003

Stage-4 is selected by condition resolver.

Stage-3 is filtered out by condition resolver.

Stage-5 is filtered out by condition resolver.

Moving data to directory hdfs://localhost:8020/user/hive/warehouse/test.db/.hive-staging_hive_2016-08-23_21-39-44_259_5484795730585321098-1/-ext-10002

Moving data to directory hdfs://localhost:8020/user/hive/warehouse/test.db/test5

MapReduce Jobs Launched:

Stage-Stage-1: HDFS Read: 600 HDFS Write: 466 SUCCESS

Total MapReduce CPU Time Spent: 0 msec

OK

Time taken: 2.184 seconds查看結果

hive> select * from test5;

OK

1 a1 b1

2 a2 b2

3 a3 b3

4 a4 b4

Time taken: 0.147 seconds, Fetched: 4 row(s)導出數據

導出到本地文件

執行導出本地文件命令:

hive> insert overwrite local directory '/usr/tmp/export' select * from test1;

WARNING: Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

Query ID = root_20160823221655_05b05863-6273-4bdd-aad2-e80d4982425d

Total jobs = 1

Launching Job 1 out of 1

Number of reduce tasks is set to 0 since there's no reduce operator

Job running in-process (local Hadoop)

2016-08-23 22:16:57,028 Stage-1 map = 100%, reduce = 0%

Ended Job = job_local8632460_0005

Moving data to local directory /usr/tmp/export

MapReduce Jobs Launched:

Stage-Stage-1: HDFS Read: 794 HDFS Write: 498 SUCCESS

Total MapReduce CPU Time Spent: 0 msec

OK

Time taken: 1.569 seconds

hive>

在本地文件查看內容:

[root@localhost export]# ll

total 4

-rw-r--r--. 1 root root 32 Aug 23 22:16 000000_0

[root@localhost export]# cat 000000_0

1a1b1

2a2b2

3a3b3

4a4b4

[root@localhost export]# pwd

/usr/tmp/export

[root@localhost export]#

導出到hdfs

hive> insert overwrite directory '/usr/tmp/test' select * from test1;

WARNING: Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

Query ID = root_20160823214217_e8c71bb9-a147-4518-8353-81f9adc54183

Total jobs = 3

Launching Job 1 out of 3

Number of reduce tasks is set to 0 since there's no reduce operator

Job running in-process (local Hadoop)

2016-08-23 21:42:19,257 Stage-1 map = 100%, reduce = 0%

Ended Job = job_local628523792_0004

Stage-3 is selected by condition resolver.

Stage-2 is filtered out by condition resolver.

Stage-4 is filtered out by condition resolver.

Moving data to directory hdfs://localhost:8020/usr/tmp/test/.hive-staging_hive_2016-08-23_21-42-17_778_6818164305996247644-1/-ext-10000

Moving data to directory /usr/tmp/test

MapReduce Jobs Launched:

Stage-Stage-1: HDFS Read: 730 HDFS Write: 498 SUCCESS

Total MapReduce CPU Time Spent: 0 msec

OK

Time taken: 1.594 seconds導出成功,查看導出的hdfs文件

hive> dfs -cat /usr/tmp/test;

cat: `/usr/tmp/test': Is a directory

Command failed with exit code = 1

Query returned non-zero code: 1, cause: null

hive> dfs -ls /usr/tmp/test;

Found 1 items

-rwxr-xr-x 3 root supergroup 32 2016-08-23 21:42 /usr/tmp/test/000000_0

hive> dfs -cat /usr/tmp/test/000000_0;

1a1b1

2a2b2

3a3b3

4a4b4

hive>

導出到另一個表

樣例可以參考前面數據導入的部分:

insert into table test3 select * from test1;