Keepalived high availability (nginx)

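Introduction to keepalived

keepalived official website

Keepalived was originally designed for the LVS load-balancing software, to manage and monitor the state of each service node in an LVS cluster. VRRP support was added later to provide high availability. As a result, besides managing LVS, Keepalived can also serve as a high-availability solution for other services (for example Nginx, HAProxy, and MySQL).

Keepalived implements high availability mainly through the VRRP protocol. VRRP stands for Virtual Router Redundancy Protocol. It was created to remove the single point of failure inherent in static routing, so that the network keeps running without interruption when an individual node goes down.

So Keepalived, on the one hand, configures and manages LVS and health-checks the nodes behind it; on the other hand, it can provide high availability for network services on the system.

Key features of keepalived

Keepalived has three important functions:

- Managing the LVS load-balancing software

- Health-checking LVS cluster nodes

- Providing high availability (failover) for system network services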

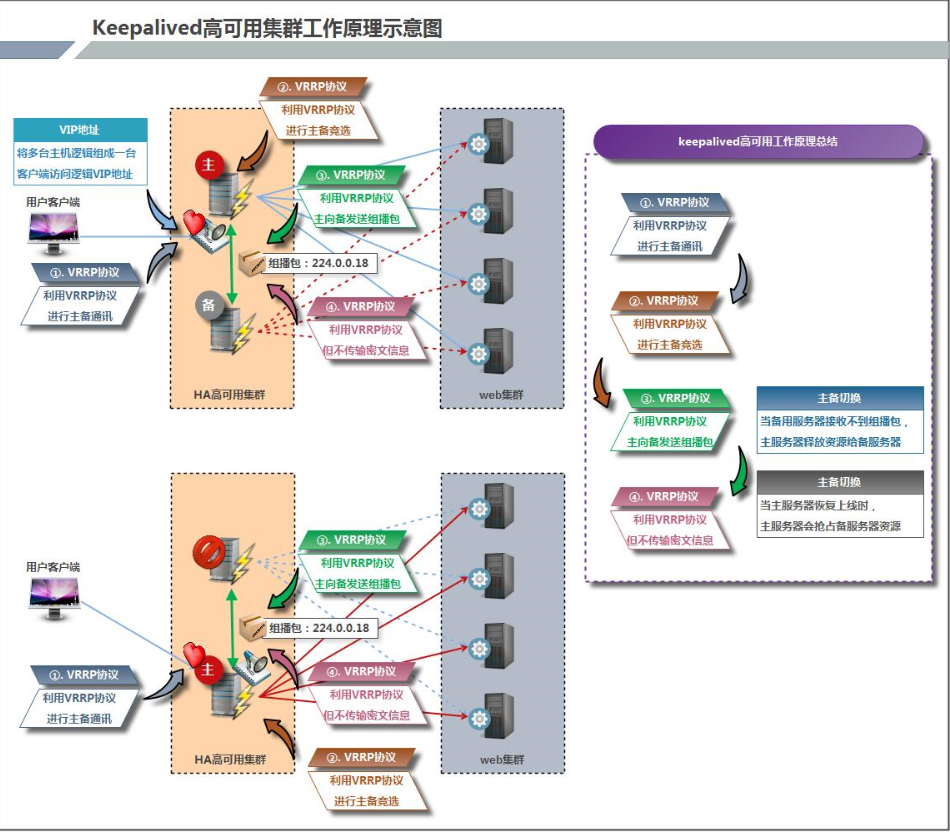

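keepalived high-availability architecture diagram

How keepalived works

Keepalived high-availability pairs communicate over VRRP, so we start with VRRP:

- VRRP (Virtual Router Redundancy Protocol) was introduced to solve the single point of failure of static routing.

- VRRP uses an election mechanism to hand the routing task to one of the VRRP routers.

- VRRP uses IP multicast (default multicast address 224.0.0.18) for communication between the members of a high-availability pair.

- In operation the master sends advertisements and the backup receives them. When the backup stops receiving the master's packets, it starts the takeover procedure and takes over the master's resources. There can be several backup nodes, elected by priority, but in day-to-day Keepalived operations a single master/backup pair is typical.

- VRRP can use an encryption protocol to protect its data, but the Keepalived project currently still recommends configuring the authentication type and password in plain text.

With VRRP covered, here is how the Keepalived service itself works:

Keepalived high availability communicates over VRRP, and VRRP decides master and backup by election. The master has a higher priority than the backup, so in operation the master obtains all resources first while the backup waits. When the master fails, the backup takes over the master's resources and serves traffic in its place.

Between Keepalived instances, only the server acting as master keeps sending VRRP advertisements to tell the backup it is still alive; during that time the backup does not preempt the master. When the master becomes unavailable, that is, when the backup no longer hears the master's advertisements, the backup starts the relevant services and takes over the resources to keep the business running. Takeover can complete in under 1 second at best.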
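The election described above can be pictured with a toy model. The sketch below is not how keepalived is implemented; it only mimics the outcome "the live node with the highest priority becomes master". The function name `elect_master` and the "name priority" input format are assumptions made for this illustration, and real VRRP tie-breaking (by IP address) is not modeled.

```shell
#!/bin/bash
# Illustrative only: pick the master from a list of live nodes.
# Input (stdin): one "name priority" pair per line.
# Output: the name of the node with the highest priority.
elect_master() {
    # Sort numerically on the priority column, highest first, keep the top line.
    sort -k2,2nr | head -n 1 | awk '{ print $1 }'
}

# Example with the priorities used later in this article (100 and 90):
#   printf 'master 100\nbackup 90\n' | elect_master
# When the master stops advertising, only the backup remains in the list:
#   printf 'backup 90\n' | elect_master
```

Removing a node from the input plays the role of its advertisements going silent, which is why the backup "wins" as soon as the master disappears.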

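Using keepalived to make nginx load balancers highly available

Environment:

| OS | Hostname | IP |
|---|---|---|
| centos 8.5 | master | 192.168.222.138 |
| centos 8.5 | backup | 192.168.222.139 |

The high-availability virtual IP (VIP) for this setup is provisionally 192.168.222.133.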

Installing keepalived

Aliyun official website

Install keepalived on the master

Disable the firewall and SELinux:

[root@master ~]# systemctl stop firewalld.service

[root@master ~]# vim /etc/selinux/config

SELINUX=disabled

[root@master ~]# setenforce 0

[root@master ~]# systemctl disable --now firewalld.service

Removed /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.
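The SELinux change above is made by hand in vim. For repeatable setups, the same edit could be scripted. The following is a minimal sketch, assuming the file contains a line starting with `SELINUX=`; the helper name `disable_selinux_in` is hypothetical and takes the config path as its argument.

```shell
#!/bin/bash
# Set SELINUX=disabled in the given config file without opening an editor.
# The "SELINUX=" pattern does not touch the separate SELINUXTYPE= line.
disable_selinux_in() {
    local cfg="$1"
    local tmp
    tmp=$(mktemp) || return 1
    # Write the edited copy to a temp file, then overwrite the original in place
    # so the original file's ownership and permissions are kept.
    sed 's/^SELINUX=.*/SELINUX=disabled/' "$cfg" > "$tmp" && cat "$tmp" > "$cfg"
    local rc=$?
    rm -f "$tmp"
    return $rc
}

# Usage on the hosts in this article (run as root):
#   disable_selinux_in /etc/selinux/config
```

As in the manual steps, the file change only takes effect after a reboot; `setenforce 0` covers the running system until then.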

Configure the network repositories:

[root@master ~]# dnf -y install wget

[root@master ~]# cd /etc/yum.repos.d/

[root@master yum.repos.d]# wget -O /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-vault-8.5.2111.repo

[root@master yum.repos.d]# sed -i -e '/mirrors.cloud.aliyuncs.com/d' -e '/mirrors.aliyuncs.com/d' /etc/yum.repos.d/CentOS-Base.repo

Install the EPEL repository:

[root@master yum.repos.d]# dnf install -y https://mirrors.aliyun.com/epel/epel-release-latest-8.noarch.rpm

[root@master yum.repos.d]# sed -i 's|^#baseurl=https://download.example/pub|baseurl=https://mirrors.aliyun.com|' /etc/yum.repos.d/epel*

[root@master yum.repos.d]# sed -i 's|^metalink|#metalink|' /etc/yum.repos.d/epel*

[root@master yum.repos.d]# ls

CentOS-Base.repo epel-next-testing.repo epel-playground.repo epel-testing.repo

epel-modular.repo epel-next.repo epel-testing-modular.repo epel.repo

Search for keepalived:

[root@master yum.repos.d]# cd

[root@master ~]# dnf list all |grep keepalived

Failed to set locale, defaulting to C.UTF-8

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

keepalived.x86_64 2.1.5-6.el8 AppStream

Install keepalived:

[root@master ~]# dnf -y install keepalived

View the configuration file:

[root@master ~]# ls /etc/keepalived/

keepalived.conf

List the files installed by the package:

[root@master ~]# rpm -ql keepalived

/etc/keepalived //configuration directory

/etc/keepalived/keepalived.conf //main configuration file

/etc/sysconfig/keepalived

/usr/bin/genhash

/usr/lib/.build-id

/usr/lib/.build-id/0a

/usr/lib/.build-id/0a/410997e11c666114ca6d785e58ff0cc248744e

/usr/lib/.build-id/6f

/usr/lib/.build-id/6f/ba0d6bad6cb5ff7b074e703849ed93bebf4a0f

/usr/lib/systemd/system/keepalived.service //systemd service unit file

/usr/libexec/keepalived

/usr/sbin/keepalived

/usr/share/doc/keepalived

/usr/share/doc/keepalived/AUTHOR

/usr/share/doc/keepalived/CONTRIBUTORS

/usr/share/doc/keepalived/COPYING

/usr/share/doc/keepalived/ChangeLog

/usr/share/doc/keepalived/README

/usr/share/doc/keepalived/TODO

/usr/share/doc/keepalived/keepalived.conf.HTTP_GET.port

/usr/share/doc/keepalived/keepalived.conf.IPv6

/usr/share/doc/keepalived/keepalived.conf.PING_CHECK

/usr/share/doc/keepalived/keepalived.conf.SMTP_CHECK

/usr/share/doc/keepalived/keepalived.conf.SSL_GET

/usr/share/doc/keepalived/keepalived.conf.SYNOPSIS

/usr/share/doc/keepalived/keepalived.conf.UDP_CHECK

/usr/share/doc/keepalived/keepalived.conf.conditional_conf

/usr/share/doc/keepalived/keepalived.conf.fwmark

/usr/share/doc/keepalived/keepalived.conf.inhibit

/usr/share/doc/keepalived/keepalived.conf.misc_check

/usr/share/doc/keepalived/keepalived.conf.misc_check_arg

/usr/share/doc/keepalived/keepalived.conf.quorum

/usr/share/doc/keepalived/keepalived.conf.sample

/usr/share/doc/keepalived/keepalived.conf.status_code

/usr/share/doc/keepalived/keepalived.conf.track_interface

/usr/share/doc/keepalived/keepalived.conf.virtual_server_group

/usr/share/doc/keepalived/keepalived.conf.virtualhost

/usr/share/doc/keepalived/keepalived.conf.vrrp

/usr/share/doc/keepalived/keepalived.conf.vrrp.localcheck

/usr/share/doc/keepalived/keepalived.conf.vrrp.lvs_syncd

/usr/share/doc/keepalived/keepalived.conf.vrrp.routes

/usr/share/doc/keepalived/keepalived.conf.vrrp.rules

/usr/share/doc/keepalived/keepalived.conf.vrrp.scripts

/usr/share/doc/keepalived/keepalived.conf.vrrp.static_ipaddress

/usr/share/doc/keepalived/keepalived.conf.vrrp.sync

/usr/share/man/man1/genhash.1.gz

/usr/share/man/man5/keepalived.conf.5.gz

/usr/share/man/man8/keepalived.8.gz

/usr/share/snmp/mibs/KEEPALIVED-MIB.txt

/usr/share/snmp/mibs/VRRP-MIB.txt

/usr/share/snmp/mibs/VRRPv3-MIB.txt

Install keepalived on the backup server in the same way

Disable the firewall and SELinux:

[root@backup ~]# systemctl stop firewalld.service

[root@backup ~]# vim /etc/selinux/config

SELINUX=disabled

[root@backup ~]# setenforce 0

[root@backup ~]# systemctl disable --now firewalld.service

Removed /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

Configure the network repositories:

[root@backup ~]# dnf -y install wget

[root@backup ~]# cd /etc/yum.repos.d/

[root@backup yum.repos.d]# wget -O /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-vault-8.5.2111.repo

[root@backup yum.repos.d]# sed -i -e '/mirrors.cloud.aliyuncs.com/d' -e '/mirrors.aliyuncs.com/d' /etc/yum.repos.d/CentOS-Base.repo

Install the EPEL repository:

[root@backup yum.repos.d]# dnf install -y https://mirrors.aliyun.com/epel/epel-release-latest-8.noarch.rpm

[root@backup yum.repos.d]# sed -i 's|^#baseurl=https://download.example/pub|baseurl=https://mirrors.aliyun.com|' /etc/yum.repos.d/epel*

[root@backup yum.repos.d]# sed -i 's|^metalink|#metalink|' /etc/yum.repos.d/epel*

[root@backup yum.repos.d]# ls

CentOS-Base.repo epel-next-testing.repo epel-playground.repo epel-testing.repo

epel-modular.repo epel-next.repo epel-testing-modular.repo epel.repo

Search for keepalived:

[root@backup yum.repos.d]# cd

[root@backup ~]# dnf list all |grep keepalived

Failed to set locale, defaulting to C.UTF-8

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

keepalived.x86_64 2.1.5-6.el8 AppStream

Install keepalived:

[root@backup ~]# dnf -y install keepalived

View the configuration file:

[root@backup ~]# ls /etc/keepalived/

keepalived.conf

List the files installed by the package:

[root@backup ~]# rpm -ql keepalived

/etc/keepalived //configuration directory

/etc/keepalived/keepalived.conf //main configuration file

/etc/sysconfig/keepalived

/usr/bin/genhash

/usr/lib/.build-id

/usr/lib/.build-id/0a

/usr/lib/.build-id/0a/410997e11c666114ca6d785e58ff0cc248744e

/usr/lib/.build-id/6f

/usr/lib/.build-id/6f/ba0d6bad6cb5ff7b074e703849ed93bebf4a0f

/usr/lib/systemd/system/keepalived.service //systemd service unit file

/usr/libexec/keepalived

/usr/sbin/keepalived

/usr/share/doc/keepalived

/usr/share/doc/keepalived/AUTHOR

/usr/share/doc/keepalived/CONTRIBUTORS

/usr/share/doc/keepalived/COPYING

/usr/share/doc/keepalived/ChangeLog

/usr/share/doc/keepalived/README

/usr/share/doc/keepalived/TODO

/usr/share/doc/keepalived/keepalived.conf.HTTP_GET.port

/usr/share/doc/keepalived/keepalived.conf.IPv6

/usr/share/doc/keepalived/keepalived.conf.PING_CHECK

/usr/share/doc/keepalived/keepalived.conf.SMTP_CHECK

/usr/share/doc/keepalived/keepalived.conf.SSL_GET

/usr/share/doc/keepalived/keepalived.conf.SYNOPSIS

/usr/share/doc/keepalived/keepalived.conf.UDP_CHECK

/usr/share/doc/keepalived/keepalived.conf.conditional_conf

/usr/share/doc/keepalived/keepalived.conf.fwmark

/usr/share/doc/keepalived/keepalived.conf.inhibit

/usr/share/doc/keepalived/keepalived.conf.misc_check

/usr/share/doc/keepalived/keepalived.conf.misc_check_arg

/usr/share/doc/keepalived/keepalived.conf.quorum

/usr/share/doc/keepalived/keepalived.conf.sample

/usr/share/doc/keepalived/keepalived.conf.status_code

/usr/share/doc/keepalived/keepalived.conf.track_interface

/usr/share/doc/keepalived/keepalived.conf.virtual_server_group

/usr/share/doc/keepalived/keepalived.conf.virtualhost

/usr/share/doc/keepalived/keepalived.conf.vrrp

/usr/share/doc/keepalived/keepalived.conf.vrrp.localcheck

/usr/share/doc/keepalived/keepalived.conf.vrrp.lvs_syncd

/usr/share/doc/keepalived/keepalived.conf.vrrp.routes

/usr/share/doc/keepalived/keepalived.conf.vrrp.rules

/usr/share/doc/keepalived/keepalived.conf.vrrp.scripts

/usr/share/doc/keepalived/keepalived.conf.vrrp.static_ipaddress

/usr/share/doc/keepalived/keepalived.conf.vrrp.sync

/usr/share/man/man1/genhash.1.gz

/usr/share/man/man5/keepalived.conf.5.gz

/usr/share/man/man8/keepalived.8.gz

/usr/share/snmp/mibs/KEEPALIVED-MIB.txt

/usr/share/snmp/mibs/VRRP-MIB.txt

/usr/share/snmp/mibs/VRRPv3-MIB.txt

Install nginx on both master and backup

Install nginx on master

[root@master ~]# dnf -y install nginx

[root@master ~]# cd /usr/share/nginx/html/

[root@master html]# ls

404.html 50x.html index.html nginx-logo.png poweredby.png

[root@master html]# echo 'master' > index.html

[root@master html]# systemctl start nginx

[root@master html]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 128 0.0.0.0:111 0.0.0.0:*

LISTEN 0 128 0.0.0.0:80 0.0.0.0:*

LISTEN 0 32 192.168.122.1:53 0.0.0.0:*

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 [::]:111 [::]:*

LISTEN 0 128 [::]:80 [::]:*

LISTEN 0 128 [::]:22 [::]:*

[root@master html]# systemctl enable nginx

Created symlink /etc/systemd/system/multi-user.target.wants/nginx.service → /usr/lib/systemd/system/nginx.service.

//nginx must be enabled at boot on the master node

Install nginx on backup

[root@backup ~]# dnf -y install nginx

[root@backup ~]# cd /usr/share/nginx/html/

[root@backup html]# ls

404.html 50x.html index.html nginx-logo.png poweredby.png

[root@backup html]# echo 'backup' > index.html

[root@backup html]# systemctl start nginx

[root@backup html]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 0.0.0.0:80 0.0.0.0:*

LISTEN 0 128 [::]:22 [::]:*

LISTEN 0 128 [::]:80 [::]:*

//在備節點這邊不需要設置開機自啟

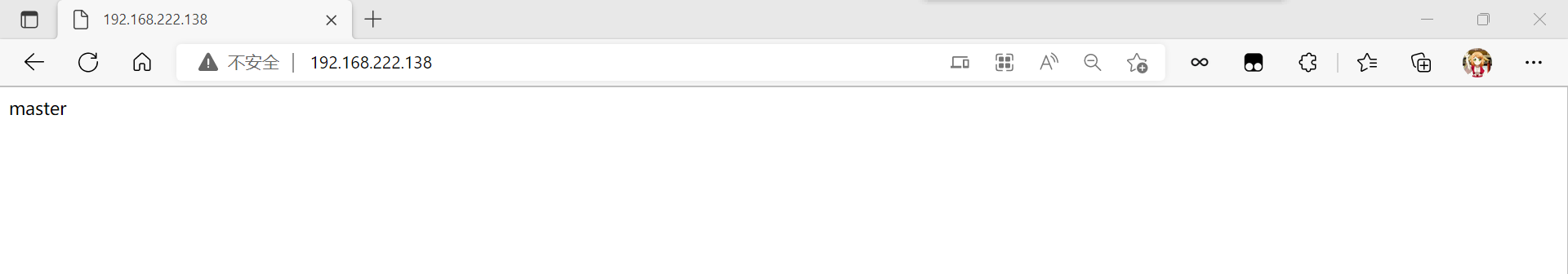

在瀏覽器上訪問試試,確保master上的nginx服務能夠正常訪問

keepalived配置

配置主keepalived

[root@master html]# cd /etc/keepalived/

[root@master keepalived]# ls

keepalived.conf

[root@master keepalived]# mv keepalived.conf{,-bak}

[root@master keepalived]# ls

keepalived.conf-bak //back up the original configuration file

[root@master keepalived]# dnf -y install vim

[root@master keepalived]# vim keepalived.conf //create a new configuration file

[root@master keepalived]# cat keepalived.conf

! Configuration File for keepalived

global_defs {

router_id lb01

}

vrrp_instance VI_1 { # must be the same on master and backup

state BACKUP

interface ens33 # network interface

virtual_router_id 51

priority 100 # higher than the backup node

advert_int 1

authentication {

auth_type PASS

auth_pass tushanbu # password (can be randomly generated)

}

virtual_ipaddress {

192.168.222.133 # high-availability virtual IP (VIP)

}

}

virtual_server 192.168.222.133 80 {

delay_loop 6

lb_algo rr

lb_kind DR

persistence_timeout 50

protocol TCP

real_server 192.168.222.138 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

real_server 192.168.222.139 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

}

[root@master keepalived]# ls

keepalived.conf keepalived.conf-bak

[root@master keepalived]# systemctl enable --now keepalived

Created symlink /etc/systemd/system/multi-user.target.wants/keepalived.service → /usr/lib/systemd/system/keepalived.service.

[root@master keepalived]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:f6:83:57 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.138/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.222.133/32 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fef6:8357/64 scope link

valid_lft forever preferred_lft forever

3: virbr0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default qlen 1000

link/ether 52:54:00:db:51:2f brd ff:ff:ff:ff:ff:ff

inet 192.168.122.1/24 brd 192.168.122.255 scope global virbr0

valid_lft forever preferred_lft forever

4: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc fq_codel master virbr0 state DOWN group default qlen 1000

link/ether 52:54:00:db:51:2f brd ff:ff:ff:ff:ff:ff

//keepalived on the backup node has not been started yet at this point

[root@master keepalived]# scp keepalived.conf 192.168.222.139:/etc/keepalived

The authenticity of host '192.168.222.139 (192.168.222.139)' can't be established.

ECDSA key fingerprint is SHA256:anVVbTlEIzA1E8rB7IbLzaf7t9oQjB0qFP6Dd/ijnJI.

Are you sure you want to continue connecting (yes/no/[fingerprint])? yes

Warning: Permanently added '192.168.222.139' (ECDSA) to the list of known hosts.

[email protected]'s password:

keepalived.conf 100% 875 905.2KB/s 00:00

//Copy the new configuration file to the backup node; the master and backup files are nearly identical, so only a few values need changing
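Since only `router_id` and `priority` differ between the two files in this article, the backup variant could also be generated instead of edited by hand. This is a minimal sketch under that assumption; the function name `to_backup_conf` is hypothetical, and the values lb01/lb02 and 100/90 are the ones used in this article.

```shell
#!/bin/bash
# Read the master keepalived.conf on stdin and print a backup variant on stdout.
# Only the two values that this article changes by hand are rewritten.
to_backup_conf() {
    sed -e 's/router_id lb01/router_id lb02/' \
        -e 's/priority 100/priority 90/'
}

# Usage (file names are examples):
#   to_backup_conf < /etc/keepalived/keepalived.conf > /tmp/keepalived.conf.backup
#   scp /tmp/keepalived.conf.backup 192.168.222.139:/etc/keepalived/keepalived.conf
```

Everything else, including `virtual_router_id` and `auth_pass`, is passed through unchanged, which is what the pair needs in order to talk to each other.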

Configure keepalived on the backup

[root@backup html]# cd /etc/keepalived/

[root@backup keepalived]# ls

keepalived.conf

[root@backup keepalived]# mv keepalived.conf{,-bak}

[root@backup keepalived]# ls

keepalived.conf-bak //back up the original configuration file

[root@backup keepalived]# dnf -y install vim

[root@backup keepalived]# ls //the configuration file copied from the master has arrived

keepalived.conf keepalived.conf-bak

[root@backup keepalived]# vim keepalived.conf //make the required changes

[root@backup keepalived]# cat keepalived.conf

! Configuration File for keepalived

global_defs {

router_id lb02

}

vrrp_instance VI_1 { # must be the same on master and backup

state BACKUP

interface ens33 # network interface

virtual_router_id 51

priority 90 # lower than the master node

advert_int 1

authentication {

auth_type PASS

auth_pass tushanbu # password (can be randomly generated)

}

virtual_ipaddress {

192.168.222.133 # high-availability virtual IP (VIP)

}

}

virtual_server 192.168.222.133 80 {

delay_loop 6

lb_algo rr

lb_kind DR

persistence_timeout 50

protocol TCP

real_server 192.168.222.138 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

real_server 192.168.222.139 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

}

[root@backup keepalived]# systemctl enable --now keepalived

Created symlink /etc/systemd/system/multi-user.target.wants/keepalived.service → /usr/lib/systemd/system/keepalived.service.

Check where the VIP is

Check on MASTER

[root@master keepalived]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:f6:83:57 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.138/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.222.133/32 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fef6:8357/64 scope link

valid_lft forever preferred_lft forever

3: virbr0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default qlen 1000

link/ether 52:54:00:db:51:2f brd ff:ff:ff:ff:ff:ff

inet 192.168.122.1/24 brd 192.168.122.255 scope global virbr0

valid_lft forever preferred_lft forever

4: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc fq_codel master virbr0 state DOWN group default qlen 1000

link/ether 52:54:00:db:51:2f brd ff:ff:ff:ff:ff:ff

//the VIP is on the master node

Check on BACKUP

[root@backup keepalived]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:31:af:f9 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.139/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe31:aff9/64 scope link

valid_lft forever preferred_lft forever

//the VIP is not on the backup node

Testing

Stop keepalived on master, start nginx and keepalived on backup, then check which node holds the VIP

master

[root@master keepalived]# systemctl stop keepalived.service

backup:

[root@backup keepalived]# systemctl start nginx.service

[root@backup keepalived]# systemctl start keepalived.service

[root@backup keepalived]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:31:af:f9 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.139/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.222.133/32 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe31:aff9/64 scope link

valid_lft forever preferred_lft forever

//backup is now the active node

Then start keepalived on master again and check which node holds the VIP

master

[root@master keepalived]# systemctl start keepalived.service

[root@master keepalived]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:f6:83:57 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.138/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.222.133/32 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fef6:8357/64 scope link

valid_lft forever preferred_lft forever

3: virbr0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default qlen 1000

link/ether 52:54:00:db:51:2f brd ff:ff:ff:ff:ff:ff

inet 192.168.122.1/24 brd 192.168.122.255 scope global virbr0

valid_lft forever preferred_lft forever

4: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc fq_codel master virbr0 state DOWN group default qlen 1000

link/ether 52:54:00:db:51:2f brd ff:ff:ff:ff:ff:ff

backup

[root@backup keepalived]# systemctl stop nginx.service

//nginx on backup should be stopped for this part of the test

[root@backup keepalived]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 [::]:22 [::]:*

[root@backup keepalived]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:31:af:f9 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.139/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe31:aff9/64 scope link

valid_lft forever preferred_lft forever

//master is the active node again

Have keepalived monitor the nginx load balancer

keepalived uses scripts to monitor the state of the nginx load balancer

Write the scripts on master

[root@master keepalived]# cd

[root@master ~]# mkdir /scripts

[root@master ~]# cd /scripts/

[root@master scripts]# vim check_nginx.sh

[root@master scripts]# cat check_nginx.sh

#!/bin/bash

nginx_status=$(ps -ef|grep -Ev "grep|$0"|grep '\bnginx\b'|wc -l)

if [ $nginx_status -lt 1 ];then

systemctl stop keepalived

fi

[root@master scripts]# chmod +x check_nginx.sh

[root@master scripts]# ll

total 4

-rwxr-xr-x. 1 root root 142 Oct 8 23:21 check_nginx.sh

[root@master scripts]# vim notify.sh

[root@master scripts]# cat notify.sh

#!/bin/bash

case "$1" in

master)

nginx_status=$(ps -ef|grep -Ev "grep|$0"|grep '\bnginx\b'|wc -l)

if [ $nginx_status -lt 1 ];then

systemctl start nginx

fi

;;

backup)

nginx_status=$(ps -ef|grep -Ev "grep|$0"|grep '\bnginx\b'|wc -l)

if [ $nginx_status -gt 0 ];then

systemctl stop nginx

fi

;;

*)

echo "Usage:$0 master|backup VIP"

;;

esac

[root@master scripts]# chmod +x notify.sh

[root@master scripts]# ll

total 8

-rwxr-xr-x. 1 root root 142 Oct 8 23:21 check_nginx.sh

-rwxr-xr-x. 1 root root 383 Oct 8 23:31 notify.sh

[root@master scripts]# scp check_nginx.sh 192.168.222.139:/scripts/

[email protected]'s password:

check_nginx.sh 100% 142 113.6KB/s 00:00

[root@master scripts]# scp notify.sh 192.168.222.139:/scripts/

[email protected]'s password:

notify.sh 100% 383 244.7KB/s 00:00

//Copy the scripts into the directory created in advance on the backup node

Set up the scripts on backup

[root@backup keepalived]# cd

[root@backup ~]# mkdir /scripts

[root@backup ~]# cd /scripts/

[root@backup scripts]# ll

total 8

-rwxr-xr-x. 1 root root 142 Oct 8 23:39 check_nginx.sh

-rwxr-xr-x. 1 root root 383 Oct 8 23:36 notify.sh
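The check_nginx.sh above counts processes with `ps | grep` and stops keepalived itself. A different style, shown here only as a sketch and not as the script this article uses, is to let the check report health through its exit status, which is what keepalived's `vrrp_script` evaluates. The function name `nginx_alive` is hypothetical; `pgrep -x` matches the exact process name.

```shell
#!/bin/bash
# Exit-status-based health check (alternative sketch, not the article's script).
# Returns 0 when a process named exactly "nginx" exists, non-zero otherwise.
nginx_alive() {
    pgrep -x nginx > /dev/null 2>&1
}

# As a standalone vrrp_script file, the last lines would be:
#   nginx_alive
#   exit $?
```

With this style, keepalived stays running when nginx fails and reacts according to the `weight` configured for the script, instead of being stopped by the script.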

Add the monitoring script to the keepalived configuration

Configure keepalived on the master

[root@master scripts]# cd

[root@master ~]# vim /etc/keepalived/keepalived.conf

[root@master ~]# cat /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id lb01

}

vrrp_script nginx_check { # add this block

script "/scripts/check_nginx.sh"

interval 5

weight -20

}

vrrp_instance VI_1 {

state BACKUP

interface ens33

virtual_router_id 51

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass tushanbu

}

virtual_ipaddress {

192.168.222.133

}

track_script { # add this block

nginx_check

}

notify_master "/scripts/notify.sh master 192.168.222.133"

notify_backup "/scripts/notify.sh backup 192.168.222.133"

}

virtual_server 192.168.222.133 80 {

delay_loop 6

lb_algo rr

lb_kind DR

persistence_timeout 50

protocol TCP

real_server 192.168.222.138 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

real_server 192.168.222.139 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

}

[root@master ~]# systemctl restart keepalived.service

[root@master ~]# systemctl restart nginx.service
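The `weight -20` setting in the `vrrp_script` block only matters when the tracked script returns a non-zero exit status. A negative weight is then added to the node's priority while the script is failing, and a positive weight is added while it is succeeding. The arithmetic can be sketched as below. The function name `effective_priority` is hypothetical; note that the check_nginx.sh in this article stops keepalived outright rather than returning a failure status, so in this setup failover is triggered by keepalived stopping, not by the weight.

```shell
#!/bin/bash
# effective_priority BASE WEIGHT SCRIPT_OK
#   BASE       configured priority (for example 100)
#   WEIGHT     vrrp_script weight (for example -20)
#   SCRIPT_OK  1 if the tracked script succeeded, 0 if it failed
effective_priority() {
    local base=$1 weight=$2 script_ok=$3
    if [ "$weight" -lt 0 ] && [ "$script_ok" -eq 0 ]; then
        # Negative weight: priority drops while the script fails.
        echo $(( base + weight ))
    elif [ "$weight" -gt 0 ] && [ "$script_ok" -eq 1 ]; then
        # Positive weight: priority rises while the script succeeds.
        echo $(( base + weight ))
    else
        echo "$base"
    fi
}

# With this article's values, a failing check on the master would give:
#   effective_priority 100 -20 0
```

A result below the backup's priority of 90 is what would let the backup win the election in a weight-based design.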

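Configure keepalived on the backup

The backup does not need to check whether nginx is healthy: it starts nginx when promoted to MASTER and stops it when demoted to BACKUP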

[root@backup scripts]# cd

[root@backup ~]# vim /etc/keepalived/keepalived.conf

[root@backup ~]# cat /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id lb02

}

vrrp_instance VI_1 {

state BACKUP

interface ens33

virtual_router_id 51

priority 90

advert_int 1

authentication {

auth_type PASS

auth_pass tushanbu

}

virtual_ipaddress {

192.168.222.133

}

notify_master "/scripts/notify.sh master 192.168.222.133" # added

notify_backup "/scripts/notify.sh backup 192.168.222.133" # added

}

virtual_server 192.168.222.133 80 {

delay_loop 6

lb_algo rr

lb_kind DR

persistence_timeout 50

protocol TCP

real_server 192.168.222.138 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

real_server 192.168.222.139 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

}

[root@backup ~]# systemctl restart keepalived.service

[root@backup ~]# systemctl restart nginx.service

Testing

Check the state during normal operation

master:

[root@master ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:f6:83:57 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.138/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.222.133/32 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fef6:8357/64 scope link

valid_lft forever preferred_lft forever

3: virbr0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default qlen 1000

link/ether 52:54:00:db:51:2f brd ff:ff:ff:ff:ff:ff

inet 192.168.122.1/24 brd 192.168.122.255 scope global virbr0

valid_lft forever preferred_lft forever

4: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc fq_codel master virbr0 state DOWN group default qlen 1000

link/ether 52:54:00:db:51:2f brd ff:ff:ff:ff:ff:ff

[root@master ~]# curl 192.168.222.133

master

backup:

[root@backup ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:31:af:f9 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.139/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe31:aff9/64 scope link

valid_lft forever preferred_lft forever

Stop nginx

[root@master ~]# systemctl stop nginx.service

[root@master ~]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 128 0.0.0.0:111 0.0.0.0:*

LISTEN 0 32 192.168.122.1:53 0.0.0.0:*

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 [::]:111 [::]:*

LISTEN 0 128 [::]:22 [::]:*

State after nginx is stopped on master

master:

[root@master ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:f6:83:57 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.138/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fef6:8357/64 scope link

valid_lft forever preferred_lft forever

3: virbr0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default qlen 1000

link/ether 52:54:00:db:51:2f brd ff:ff:ff:ff:ff:ff

inet 192.168.122.1/24 brd 192.168.122.255 scope global virbr0

valid_lft forever preferred_lft forever

4: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc fq_codel master virbr0 state DOWN group default qlen 1000

link/ether 52:54:00:db:51:2f brd ff:ff:ff:ff:ff:ff

backup:

[root@backup ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:31:af:f9 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.139/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.222.133/32 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe31:aff9/64 scope link

valid_lft forever preferred_lft forever

[root@backup ~]# curl 192.168.222.133

backup

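Split brain

In a high-availability (HA) system, when the "heartbeat link" connecting the two nodes is broken, an HA system that used to act as one coordinated whole splits into two independent nodes. Having lost contact, each assumes the other has failed. The HA software on both nodes then behaves like a "split-brain patient", fighting over the "shared resources" and both trying to start the "application service", with serious consequences: either the shared resources get carved up and the service comes up on neither side, or the service comes up on both sides and both read and write the "shared storage" at the same time, corrupting data (a common example is errors in the online logs that a database cycles through).

The countermeasures against HA split brain that are generally agreed on are roughly the following:

Add redundant heartbeat links, for example dual links (making the heartbeat link itself HA), to reduce the chance of split brain as far as possible;

Enable disk locking. The side currently serving locks the shared disk, so that when split brain occurs the other side cannot take the shared disk away at all. Disk locking has a significant problem of its own: if the side holding the shared disk does not actively unlock it, the other side can never obtain it. In practice, if the serving node suddenly hangs or crashes, it cannot run the unlock command, and the standby node cannot take over the shared resources and application service. Some HA designs therefore use a "smart" lock: the serving side enables the disk lock only when it finds that all heartbeat links are down (it cannot detect the peer), and leaves the disk unlocked otherwise.

Set up an arbitration mechanism. For example, configure a reference IP (such as the gateway IP). When the heartbeat links are completely down, each node pings the reference IP. If the ping fails, the break is on the local side: not only the heartbeat but also the local link used for serving clients is down, so starting (or continuing) the application service would be pointless. That node then gives up the contest and lets the side that can ping the reference IP run the service. To be safer, the side that cannot ping the reference IP can simply reboot itself to fully release any shared resources it may still hold.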
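The arbitration idea can be sketched as a small script: ping a reference IP and give up the VIP when it is unreachable. This is an illustration under stated assumptions, not part of the setup in this article. The address in `REF_IP` is a hypothetical gateway and must be replaced with a real one, and the function name `arbitrate` is made up for this example. Stopping keepalived is used here as the way to "give up the contest".

```shell
#!/bin/bash
# Arbitration sketch: if the reference IP cannot be reached, stop competing.
# REF_IP is a hypothetical gateway address; override it for a real network.
REF_IP="${REF_IP:-192.168.222.2}"

arbitrate() {
    # Three probes, one-second timeout each (Linux iputils ping options).
    if ping -c 3 -W 1 "$REF_IP" > /dev/null 2>&1; then
        echo "reference reachable: keep competing"
        return 0
    fi
    echo "reference unreachable: stopping keepalived"
    # Releasing the VIP by stopping keepalived; requires root.
    systemctl stop keepalived
}

# Could be run periodically, for example from cron or a systemd timer:
#   arbitrate
```

A node that loses its own uplink takes itself out of the election this way, so the peer that can still reach the reference IP ends up as the only holder of the VIP.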

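Causes of split brain

In general, split brain has the following causes:

- The heartbeat link between the high-availability pair fails, so the servers cannot communicate normally

  - the heartbeat cable is faulty (broken or aged)

  - the NIC or its driver is faulty, or there are IP configuration or conflict problems (directly connected NICs)

  - a device on the heartbeat path fails (NIC or switch)

  - the arbitration machine fails (when an arbitration scheme is used)

- An iptables firewall enabled on the high-availability servers blocks heartbeat messages

- The heartbeat NIC address or related settings are configured incorrectly on the servers, so heartbeats fail to be sent

- Other misconfiguration, such as mismatched heartbeat modes, heartbeat broadcast conflicts, or software bugs

Note:

In the Keepalived configuration, a mismatch of virtual_router_id between the two ends of the same VRRP instance will also cause split brain.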
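A mismatch of `virtual_router_id` is easy to check for before starting the services. The sketch below extracts the IDs from two configuration files and compares them. The function names `vrids` and `same_vrids` are hypothetical, and the approach assumes the setting appears on its own line as in the files shown in this article.

```shell
#!/bin/bash
# Print every virtual_router_id value found in a keepalived.conf read from stdin.
vrids() {
    awk '$1 == "virtual_router_id" { print $2 }'
}

# same_vrids FILE_A FILE_B
# Succeeds only when both files list the same IDs in the same order.
same_vrids() {
    [ "$(vrids < "$1")" = "$(vrids < "$2")" ]
}

# Usage, with the backup's file copied locally under an example name:
#   same_vrids /etc/keepalived/keepalived.conf /tmp/backup-keepalived.conf \
#       || echo "virtual_router_id mismatch"
```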

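Common solutions to split brain

In production, split brain can be prevented in the following ways:

- Connect the nodes with both a serial cable and an Ethernet cable, using two heartbeat links at once, so that if one fails the other still carries heartbeat messages

- When split brain is detected, forcibly shut down one heartbeat node (this requires special equipment such as STONITH or fence devices). In effect, when the backup stops receiving heartbeat messages, it sends a power-off command over a separate channel to cut the master's power

- Monitor and alert on split brain (by e-mail, SMS, or on-call staff) so that a person can step in and arbitrate as soon as a problem occurs, reducing the loss. For example, Baidu's monitoring SMS alerts distinguish uplink and downlink messages: an alert is sent to the administrator's phone, and the administrator can reply with a number or a short string that is returned to the server, which then handles the fault automatically according to the instruction, shortening the time to resolve it.

Of course, when implementing a high-availability solution, decide from the actual business requirements whether such a loss can be tolerated. For ordinary website workloads, it is tolerable.

Monitoring split brain

Split brain should be monitored on the backup server, by adding a custom zabbix check.

What should be monitored? Whether the VIP is present on the backup.

The VIP appears on the backup in two situations:

- Split brain has occurred

- A normal master/backup switchover

The check only flags the possibility of split brain; it cannot prove that split brain has occurred, because a normal switchover also moves the VIP to the backup.

The monitoring script is as follows: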

[root@backup ~]# mkdir -p /scripts && cd /scripts

[root@backup scripts]# vim check_keepalived.sh

#!/bin/bash

if [ `ip a show ens33 |grep 192.168.222.133|wc -l` -ne 0 ]

then

echo "keepalived is error!"

else

echo "keepalived is OK !"

fi

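When writing the script, change the interface name and the VIP to your own. Also remember to make the script executable and to change the owner and group of the /scripts directory to zabbix.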
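The `grep 192.168.222.133` in the script matches loosely: the dots match any character and a longer address containing the same digits would also match. A stricter variant, shown as a sketch rather than a replacement for the article's script, compares the address field exactly. The function name `vip_on_iface` is hypothetical; it expects the one-line-per-address output of `ip -o -4 addr show dev IFACE` on stdin, where the fourth field is the address with its prefix length.

```shell
#!/bin/bash
# vip_on_iface VIP
# Reads `ip -o -4 addr show dev IFACE` output on stdin.
# Prints 1 if VIP is configured on the interface (any prefix length), else 0.
vip_on_iface() {
    awk -v vip="$1" '
        { split($4, a, "/"); if (a[1] == vip) found = 1 }
        END { print (found ? 1 : 0) }'
}

# Usage with the interface and VIP from this article:
#   ip -o -4 addr show dev ens33 | vip_on_iface 192.168.222.133
```

The 1/0 output keeps the same meaning as in the zabbix-oriented version of the script: 1 means the VIP is present on the backup and someone should look, 0 means it is absent.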

環境

| 主機 | 安裝的服務 | ip |

|---|---|---|

| master | keepalived,nginx | 192.168.222.138 |

| backup | keepalived,nginx,zabbix客戶端 | 192.168.222.139 |

| zabbix | zabbix服務端 | 192.168.222.250 |

VIP:192.168.222.133

zabbix的安裝部署以及一些操作可以看我的博客監控服務zabbix部署,zabbix的基礎使用,

zabbix監控詳解裡面有zabbix安裝的詳細操作

在backup主機安裝zabbix的客戶端,在192.168.222.250主機安裝zabbix服務端用於使用web網頁管理監控

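Two states in which the monitor reports an anomaly:

Under normal conditions, nginx and keepalived are both running on the master host; on the backup host keepalived is running and nginx is stopped.

When the master host fails, the backup host takes over the VIP through the script.

When split brain occurs, both the master and the backup hold the VIP (virtual IP).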
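The states above differ only in which node holds the VIP. The monitoring in this article runs on the backup alone, so it cannot tell a failover from split brain; if the same check were also collected from the master (an assumption, not something this article sets up), the cases could be separated as in this sketch. The function name `classify_vip_state` and the state labels are hypothetical.

```shell
#!/bin/bash
# classify_vip_state MASTER_HAS_VIP BACKUP_HAS_VIP
# Each argument is 1 (VIP present) or 0 (VIP absent), for example the
# output of the check script run on each node.
classify_vip_state() {
    case "$1$2" in
        10) echo "normal" ;;       # only the master holds the VIP
        01) echo "failover" ;;     # only the backup holds the VIP
        11) echo "split-brain" ;;  # both nodes hold the VIP
        00) echo "no-vip" ;;       # neither node holds the VIP
        *)  echo "unknown" ;;      # arguments were not 0 or 1
    esac
}
```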

Writing the monitoring script

Write the script on the backup host, which is also the zabbix agent host

[root@backup ~]# cd /scripts/

[root@backup scripts]# ls

check_nginx.sh notify.sh

[root@backup scripts]# vim check_keepalived.sh

[root@backup scripts]# cat check_keepalived.sh

#!/bin/bash

if [ `ip a show ens33 |grep 192.168.222.133|wc -l` -ne 0 ]

then

echo "1" # VIP is on the backup: possible problem

else

echo "0" # VIP is not on the backup: no problem

fi

[root@backup scripts]# chmod +x check_keepalived.sh

[root@backup scripts]# ls

check_keepalived.sh check_nginx.sh notify.sh

[root@backup scripts]# chown -R zabbix:zabbix /scripts/

[root@backup scripts]# ll

total 12

-rwxr-xr-x. 1 zabbix zabbix 148 Oct 9 21:05 check_keepalived.sh

-rwxr-xr-x. 1 zabbix zabbix 142 Oct 8 23:39 check_nginx.sh

-rwxr-xr-x. 1 zabbix zabbix 383 Oct 8 23:36 notify.sh

[root@backup scripts]# systemctl stop nginx.service

[root@backup scripts]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 0.0.0.0:10050 0.0.0.0:*

LISTEN 0 128 [::]:22 [::]:*

[root@backup scripts]# ./check_keepalived.sh

0

//test the script

//under normal conditions nginx and keepalived are running on master; on backup keepalived is running and nginx is stopped

[root@backup scripts]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:31:af:f9 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.139/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe31:aff9/64 scope link

valid_lft forever preferred_lft forever

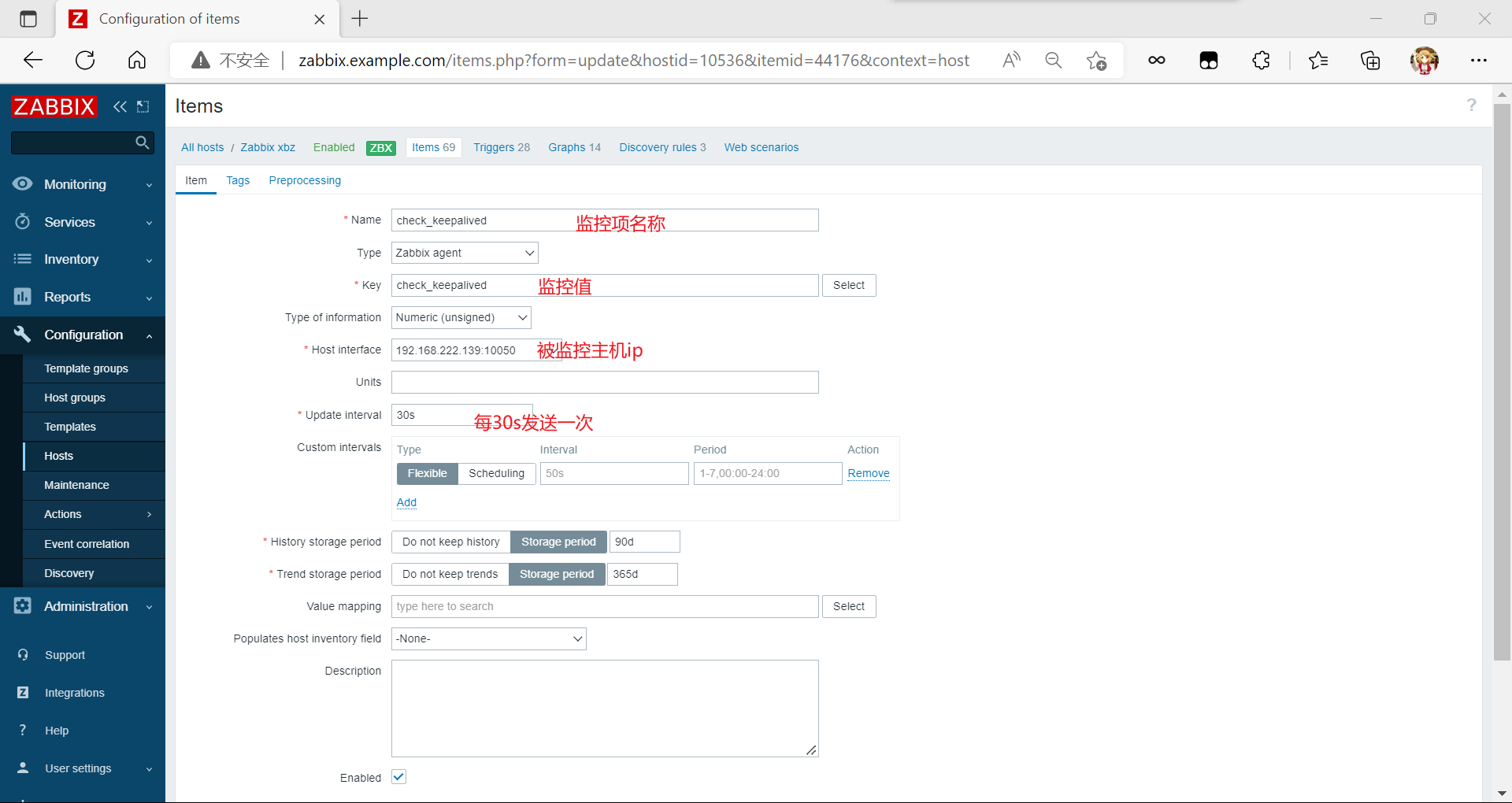

Modify the zabbix agent configuration file on backup

[root@backup scripts]# cd

[root@backup ~]# cd /usr/local/etc/

[root@backup etc]# pwd

/usr/local/etc

[root@backup etc]# vim zabbix_agentd.conf

UserParameter=check_keepalived,/bin/bash /scripts/check_keepalived.sh

UnsafeUserParameters=1

//add or modify the two lines above

[root@backup ~]# pkill zabbix_agentd

[root@backup ~]# zabbix_agentd

//restart the zabbix agent
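Before testing from the server, it can help to confirm that the agent configuration really contains the new key. The following is a small sketch that lists the keys of all active UserParameter lines; the function name `list_user_params` is hypothetical.

```shell
#!/bin/bash
# list_user_params FILE: print the key of every active (uncommented)
# UserParameter line in a zabbix agent config file, one per line.
list_user_params() {
    sed -n -E 's/^[[:space:]]*UserParameter=([^,]+),.*/\1/p' "$1"
}
```

With the path used above, `list_user_params /usr/local/etc/zabbix_agentd.conf` should include `check_keepalived`. The agent's own test mode, `zabbix_agentd -t check_keepalived`, is another way to check the item locally.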

Testing from the zabbix server

[root@zabbix ~]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 128 0.0.0.0:80 0.0.0.0:*

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 0.0.0.0:10050 0.0.0.0:*

LISTEN 0 128 0.0.0.0:10051 0.0.0.0:*

LISTEN 0 128 127.0.0.1:9000 0.0.0.0:*

LISTEN 0 128 [::]:22 [::]:*

LISTEN 0 70 *:33060 *:*

LISTEN 0 128 *:3306 *:*

[root@zabbix ~]# zabbix_get -s 192.168.222.139 -k check_keepalived

0

Check the status of master

[root@master ~]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 128 0.0.0.0:111 0.0.0.0:*

LISTEN 0 128 0.0.0.0:80 0.0.0.0:*

LISTEN 0 32 192.168.122.1:53 0.0.0.0:*

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 [::]:111 [::]:*

LISTEN 0 128 [::]:80 [::]:*

LISTEN 0 128 [::]:22 [::]:*

[root@master ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:f6:83:57 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.138/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.222.133/32 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fef6:8357/64 scope link

valid_lft forever preferred_lft forever

3: virbr0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default qlen 1000

link/ether 52:54:00:db:51:2f brd ff:ff:ff:ff:ff:ff

inet 192.168.122.1/24 brd 192.168.122.255 scope global virbr0

valid_lft forever preferred_lft forever

4: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc fq_codel master virbr0 state DOWN group default qlen 1000

link/ether 52:54:00:db:51:2f brd ff:ff:ff:ff:ff:ff

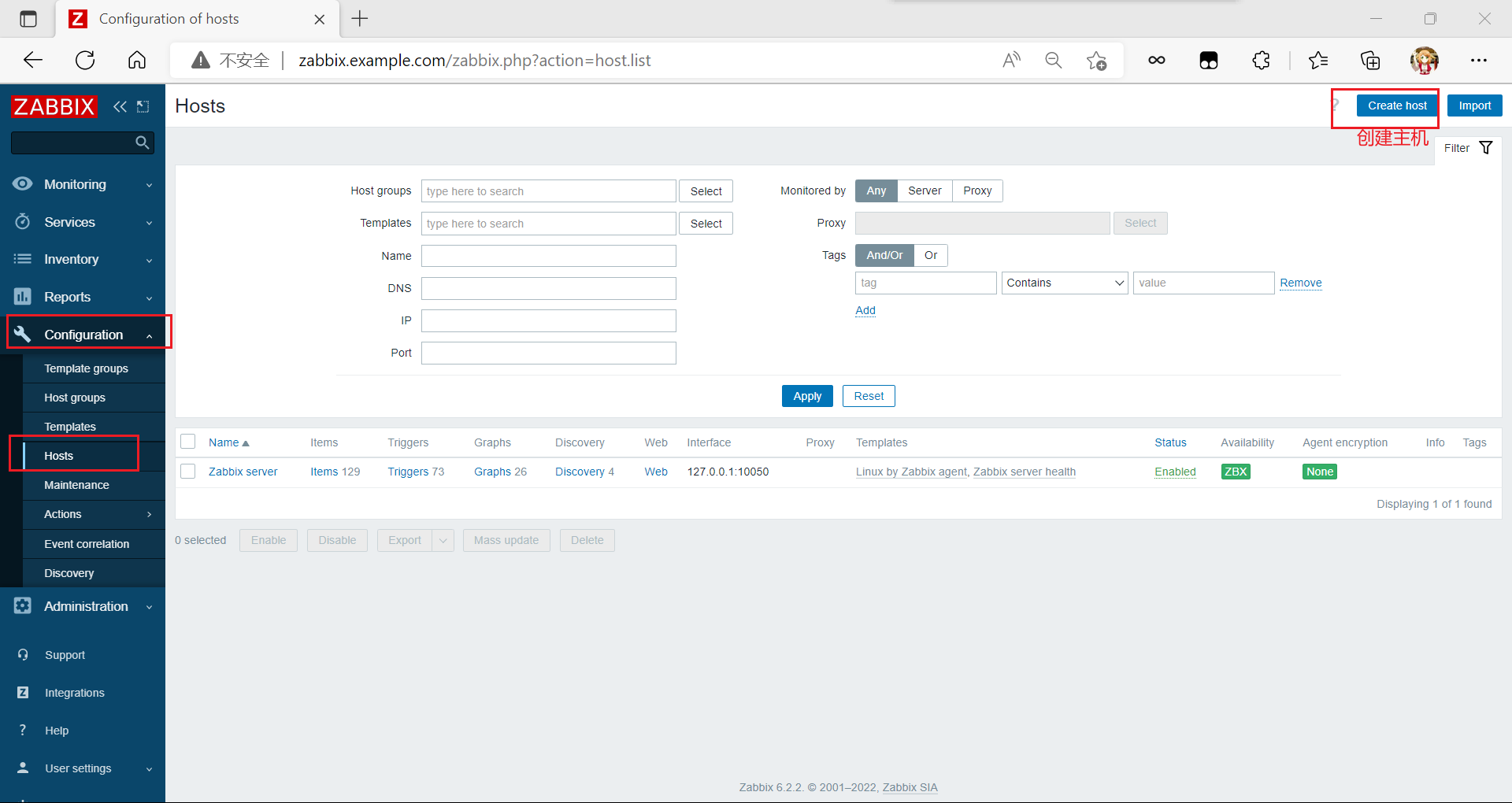

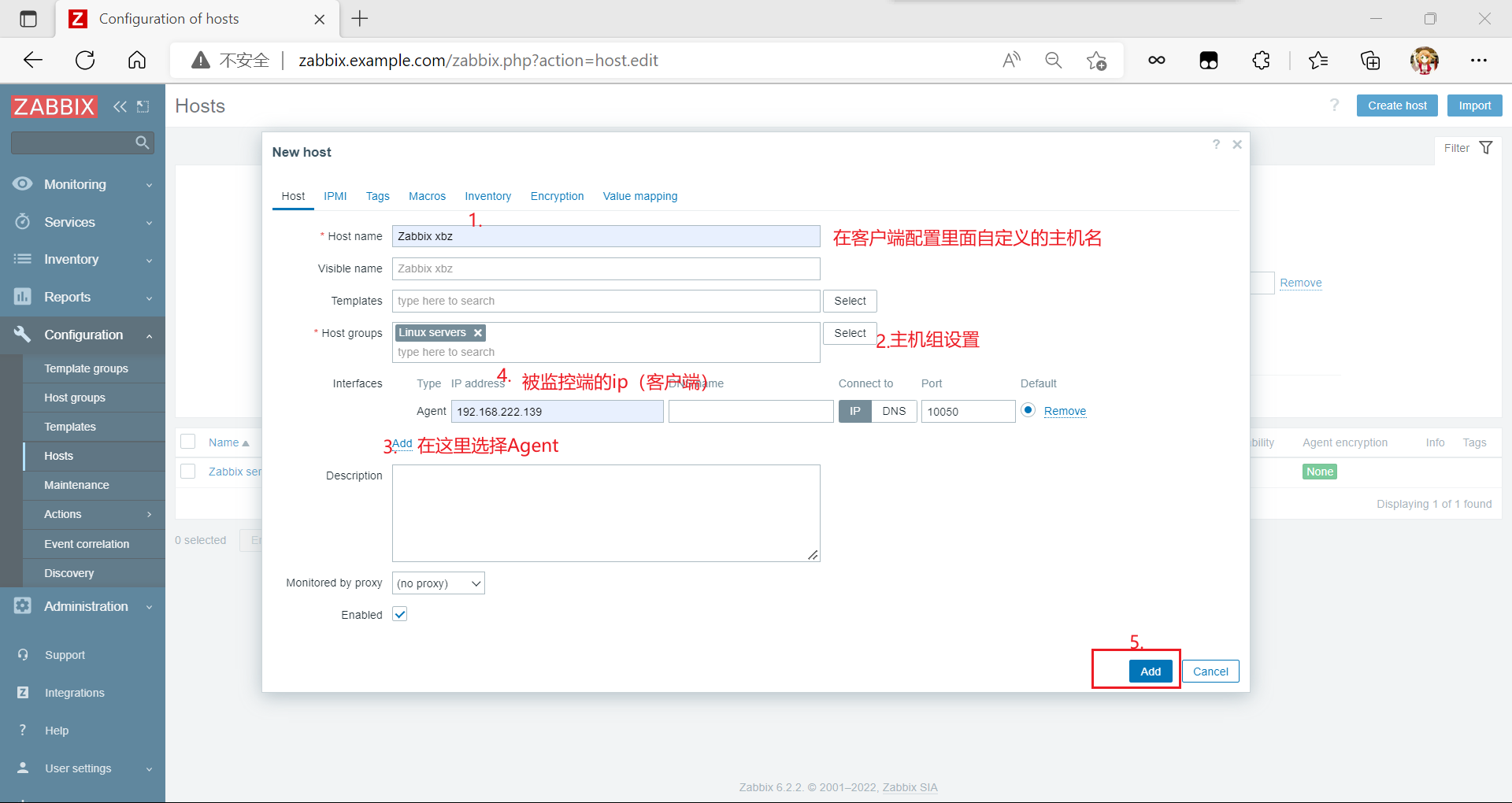

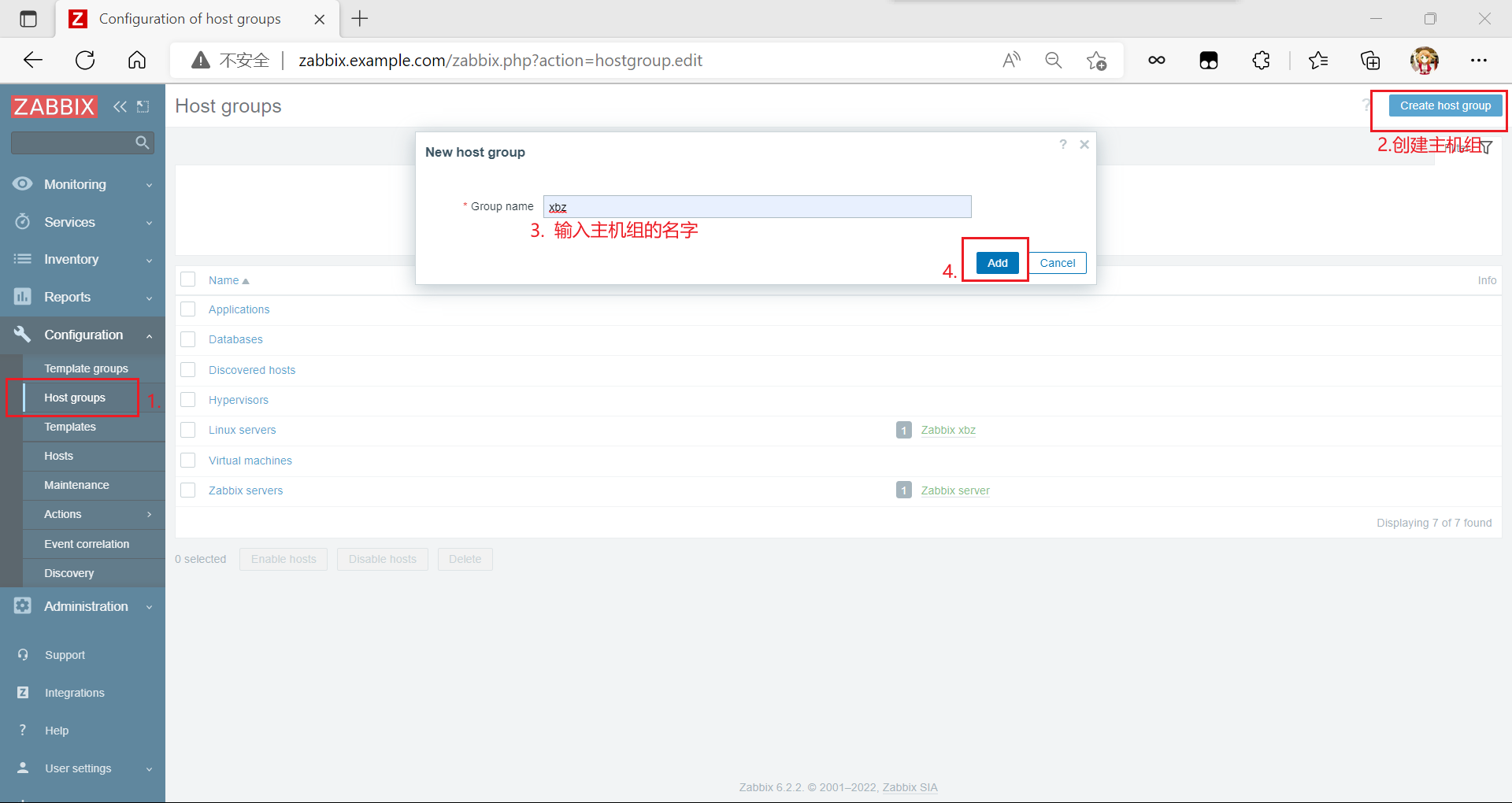

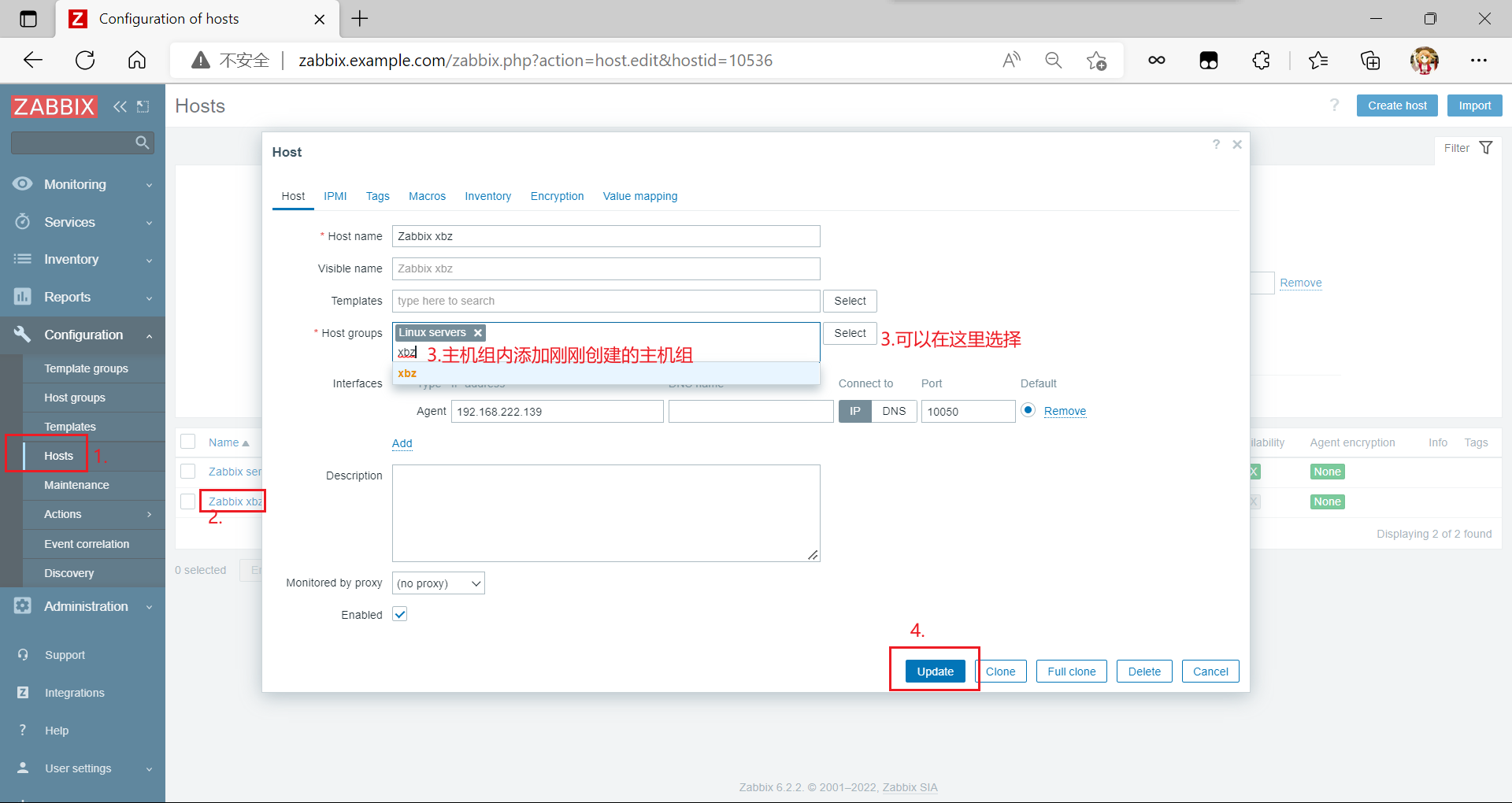

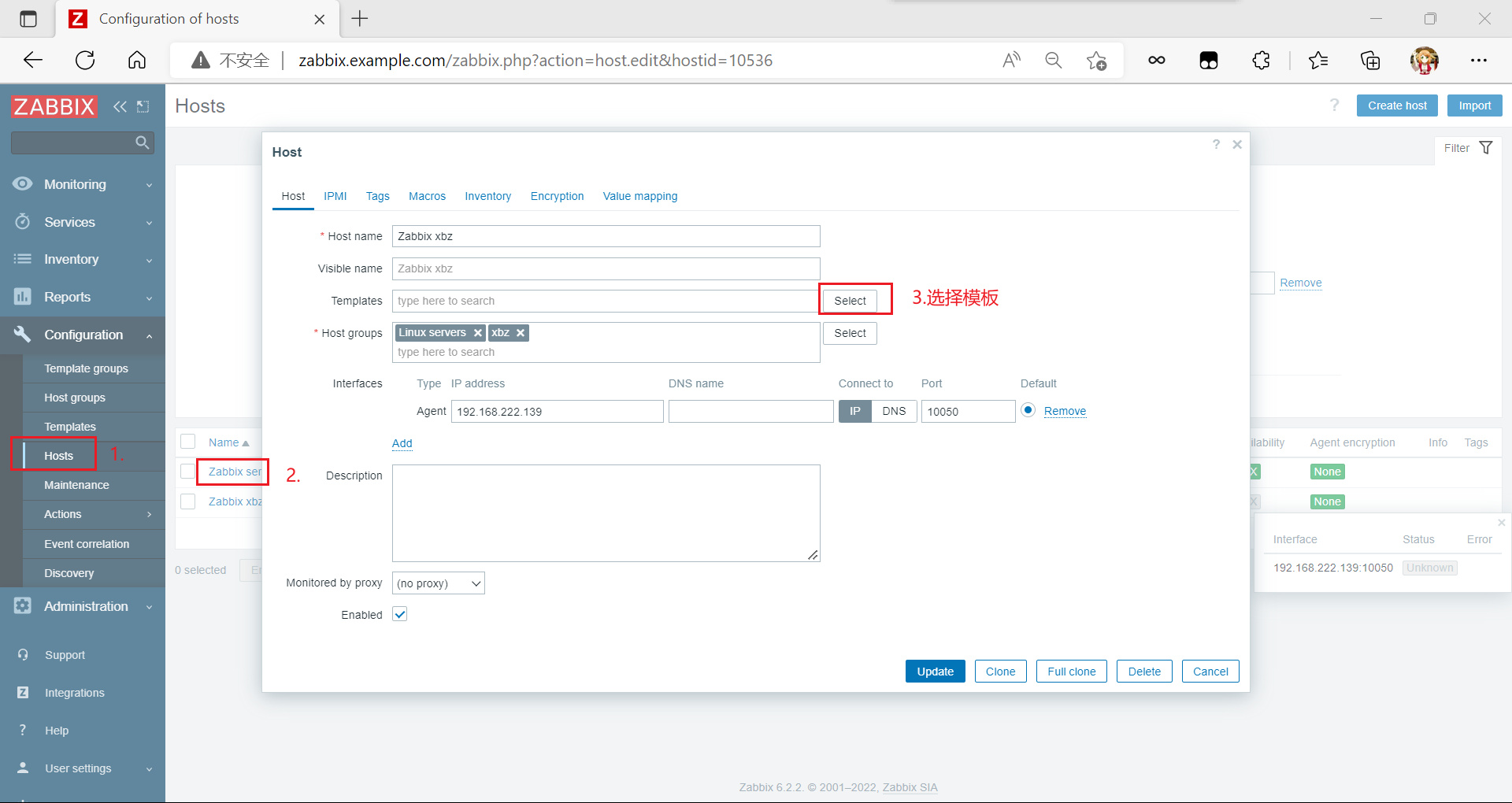

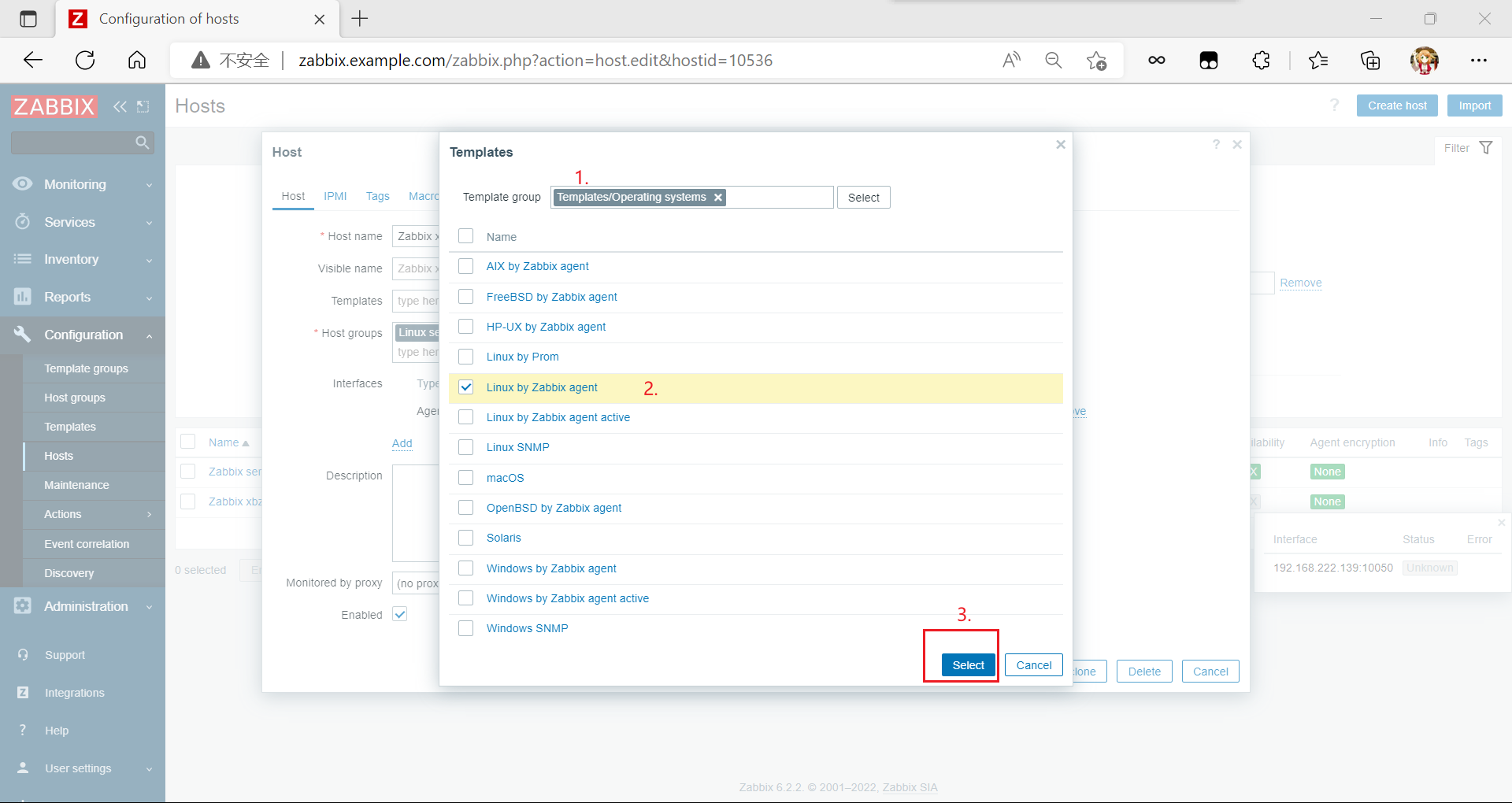

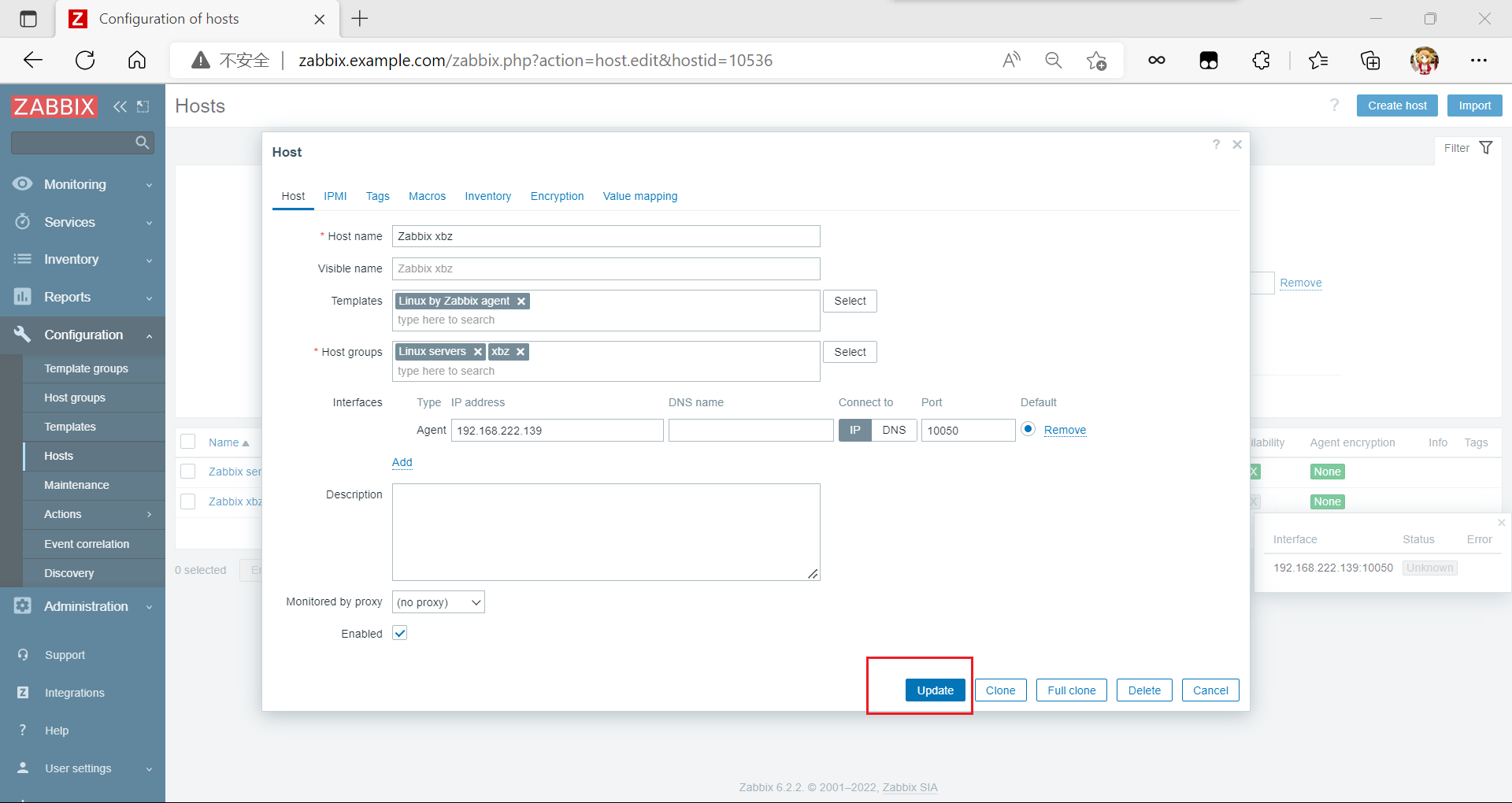

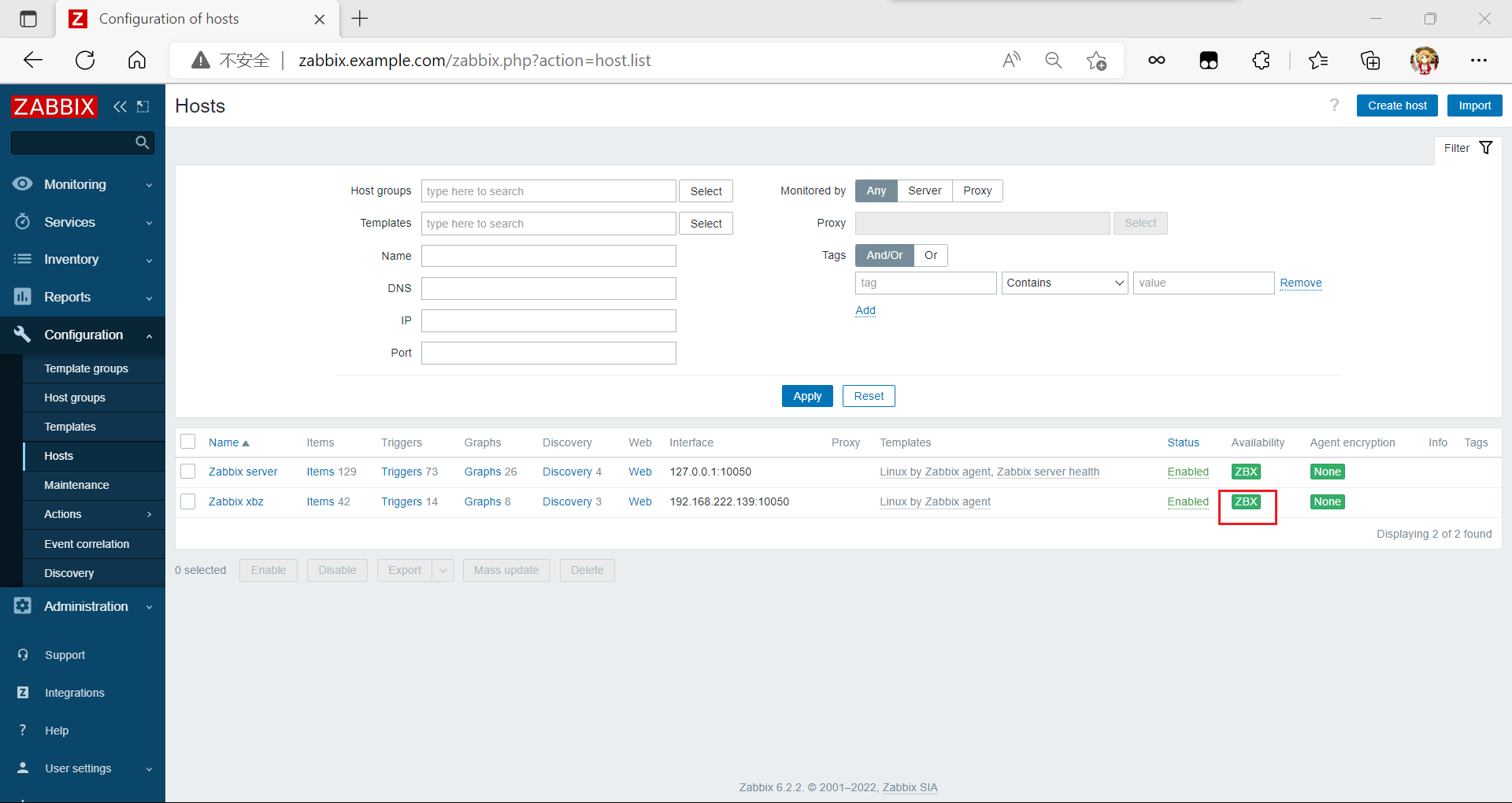

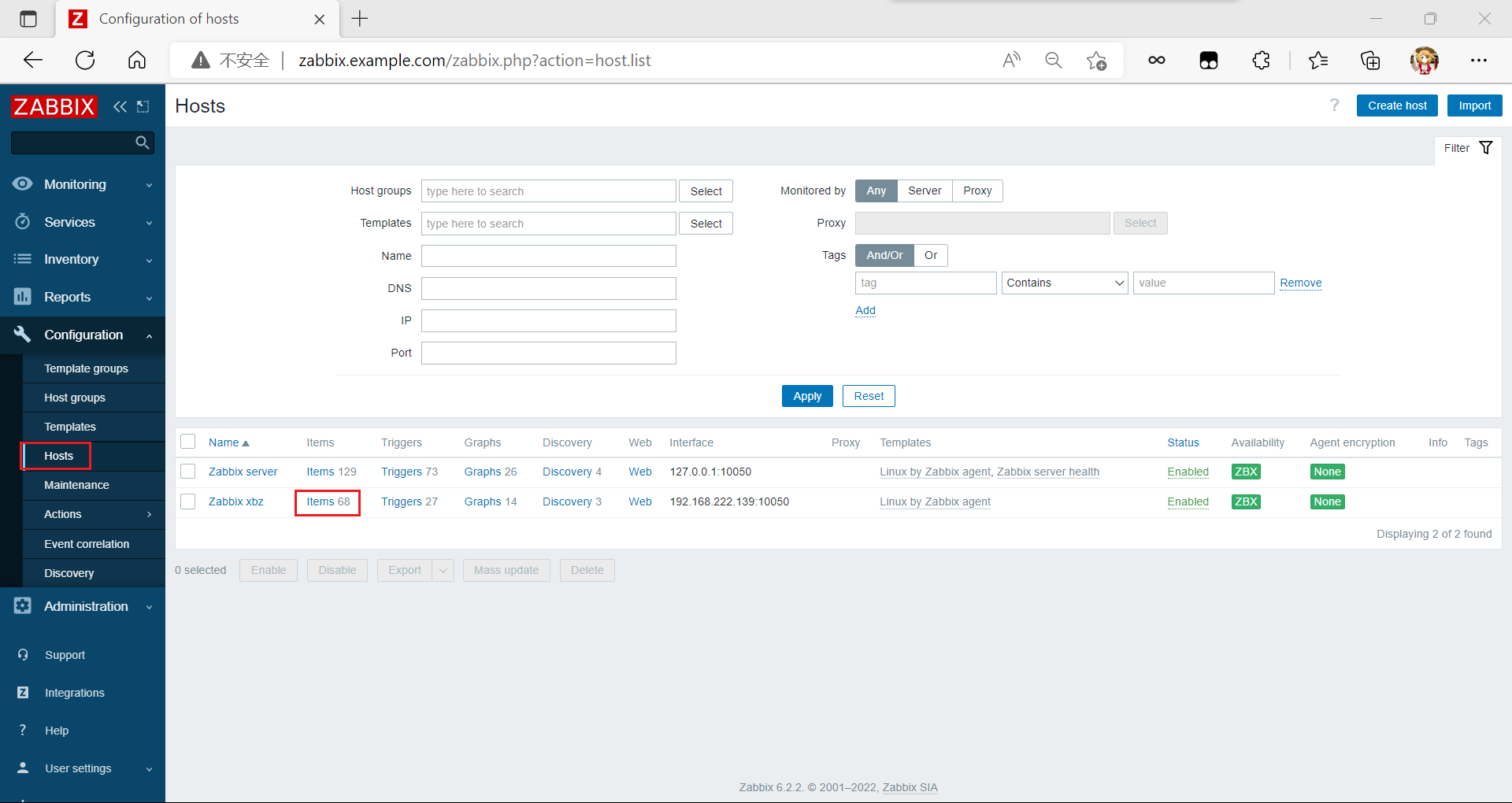

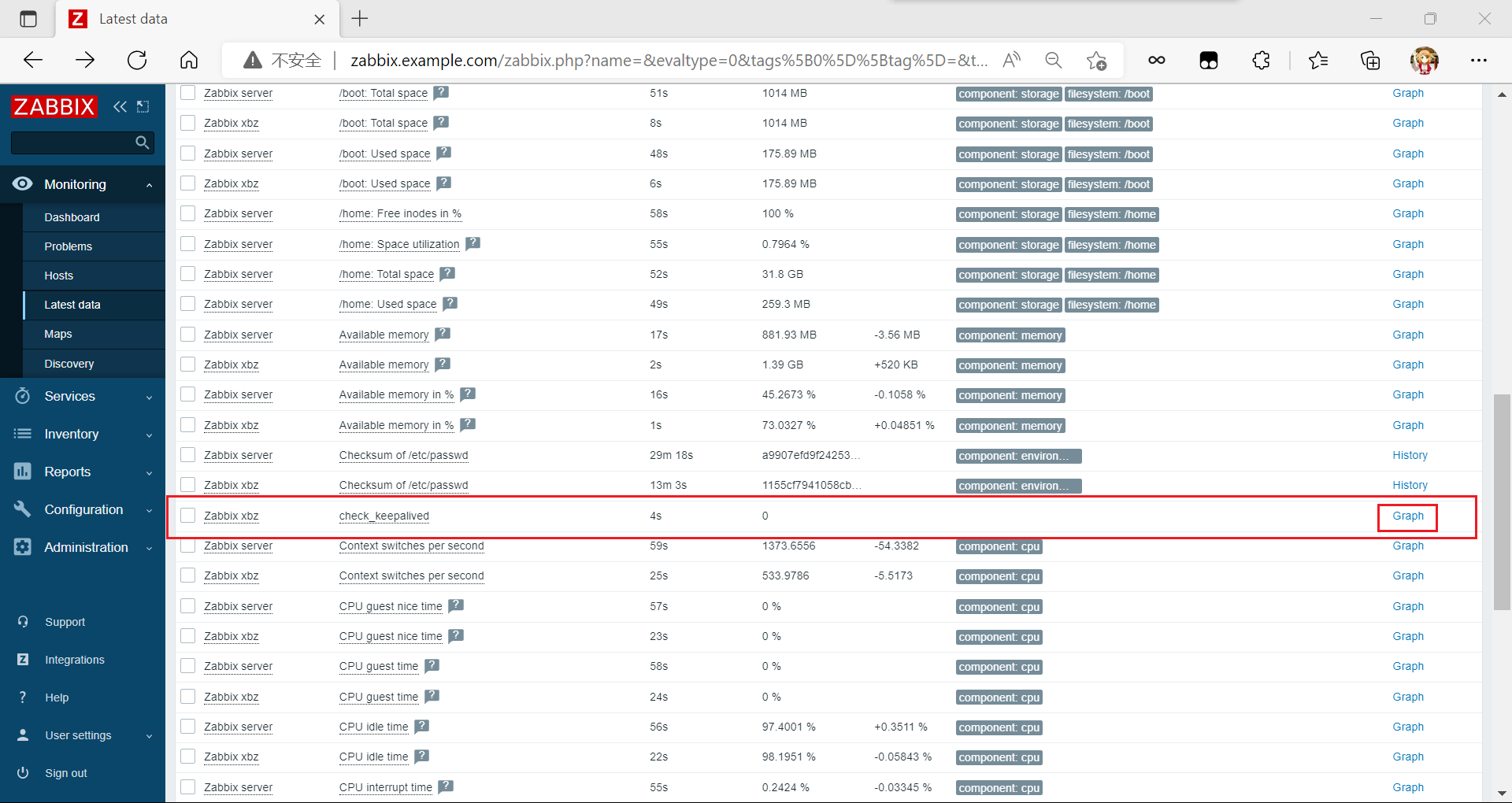

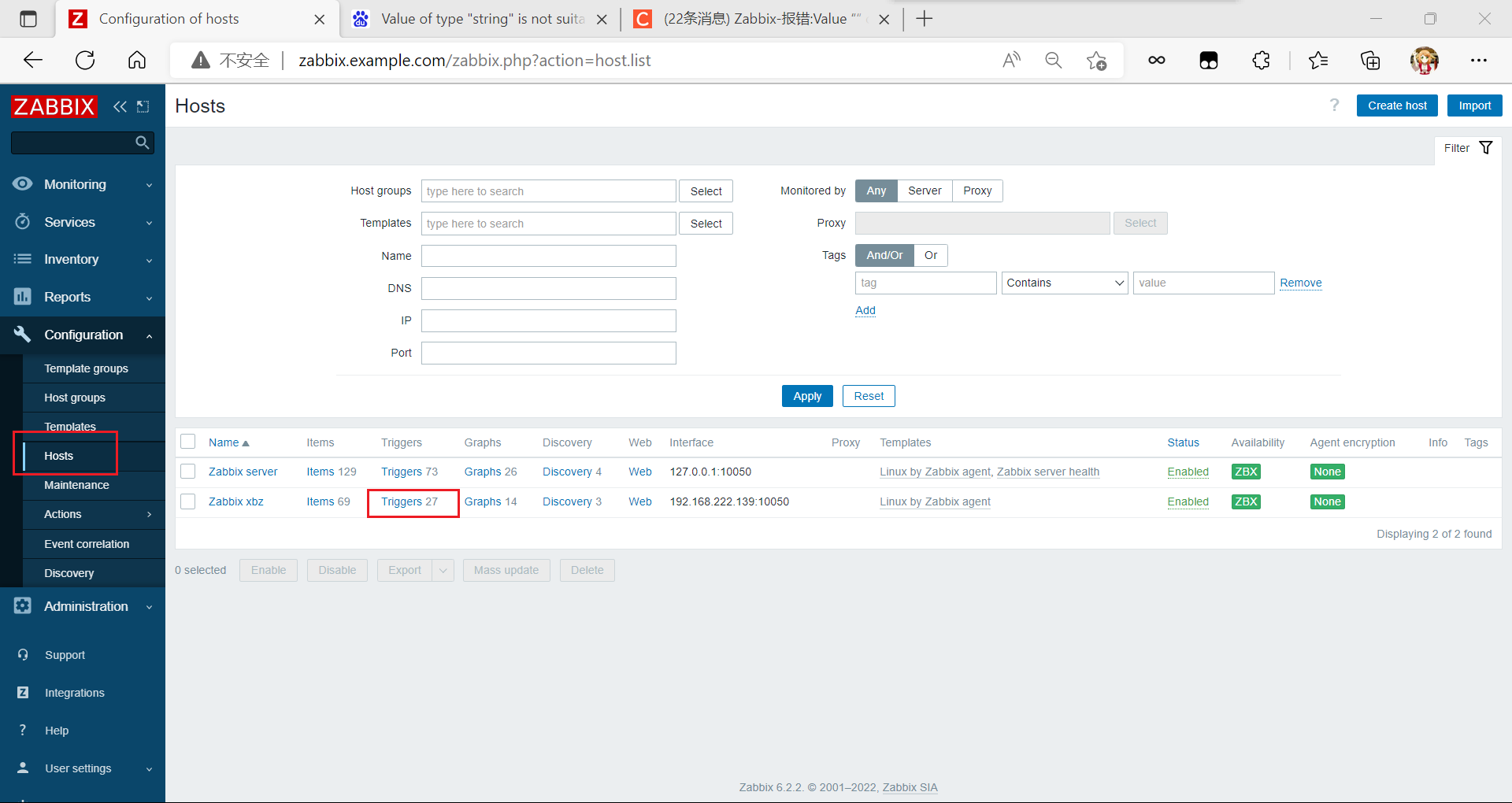

Create the monitored host

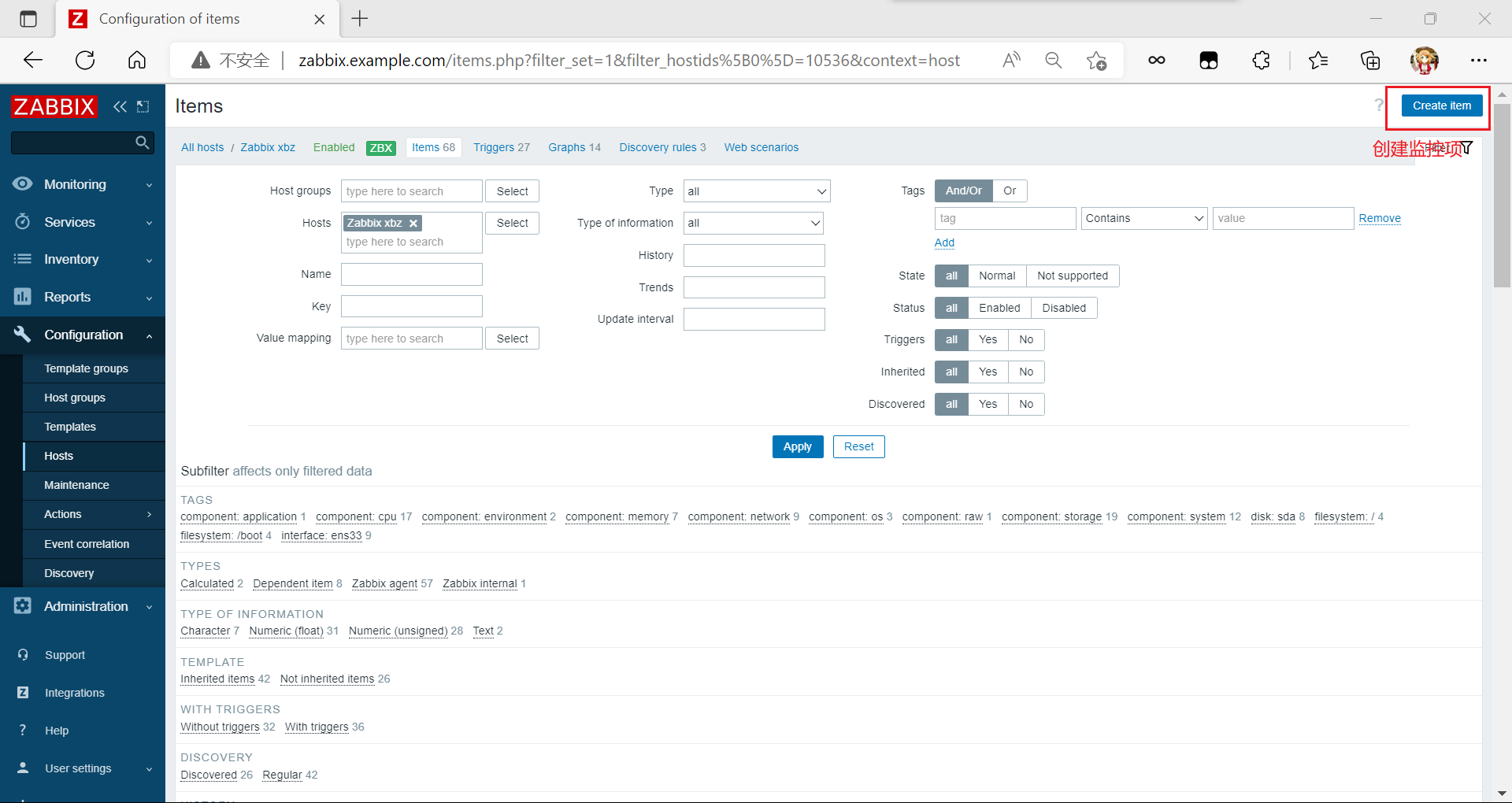

Add the monitoring item

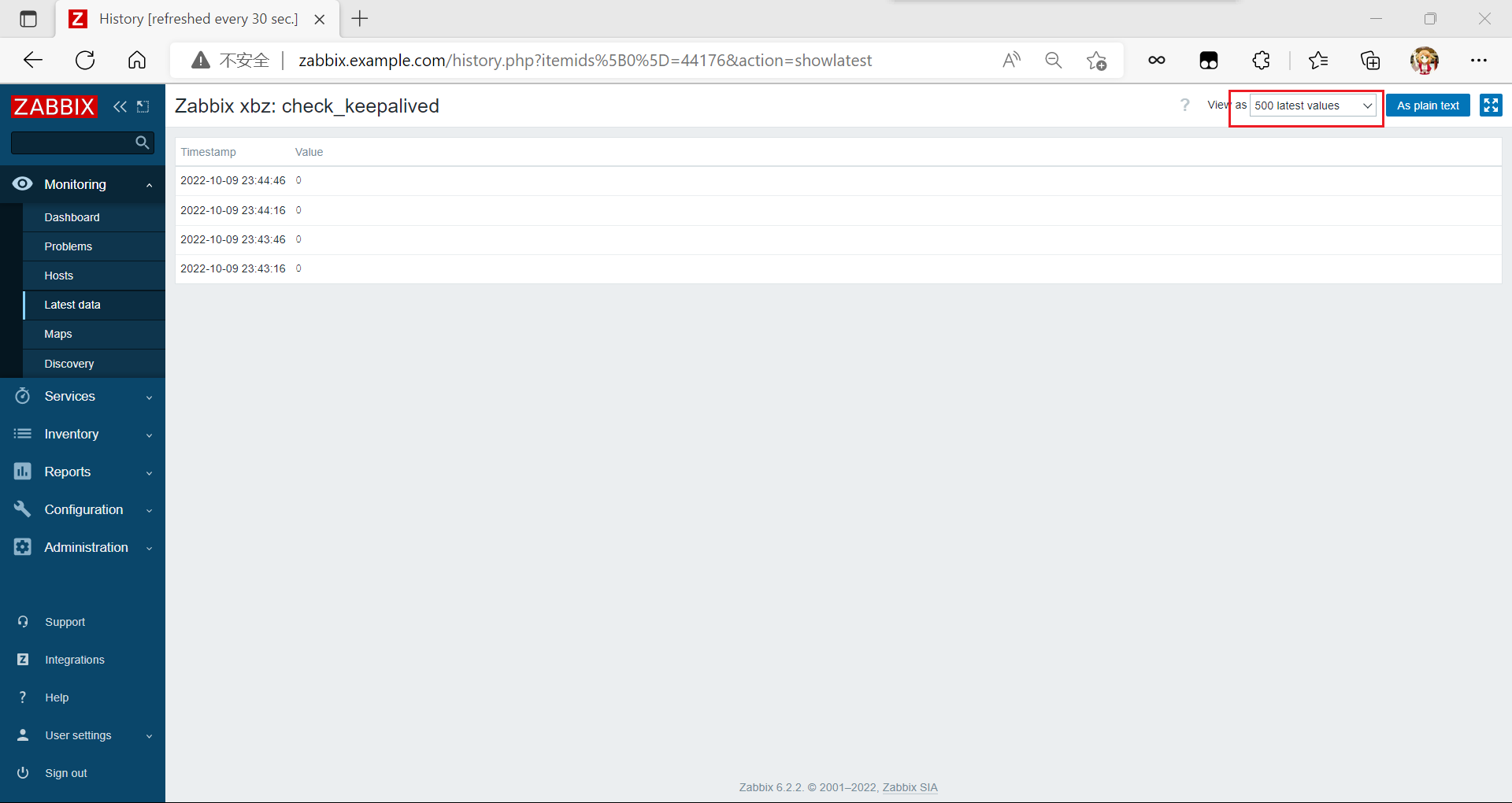

View the data

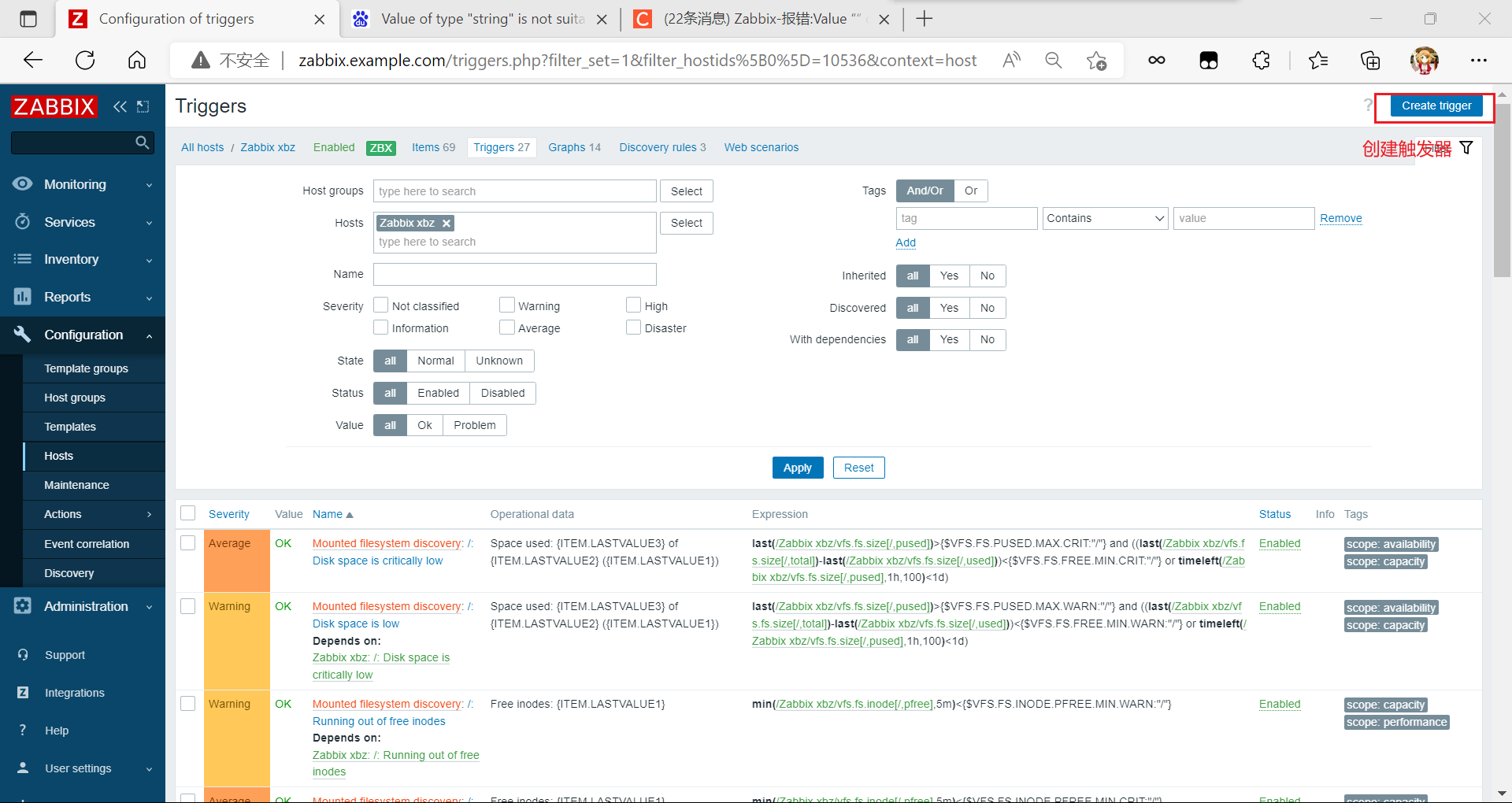

Add a trigger

Testing

On master, stop nginx and leave keepalived running; on backup, start nginx and keepalived

Simulating failover

master

[root@master ~]# systemctl stop nginx.service

[root@master ~]# systemctl restart keepalived.service

backup

[root@backup ~]# systemctl start nginx

[root@backup ~]# systemctl restart keepalived.service

Check the status

master:

[root@master ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:f6:83:57 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.138/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fef6:8357/64 scope link

valid_lft forever preferred_lft forever

3: virbr0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default qlen 1000

link/ether 52:54:00:db:51:2f brd ff:ff:ff:ff:ff:ff

inet 192.168.122.1/24 brd 192.168.122.255 scope global virbr0

valid_lft forever preferred_lft forever

4: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc fq_codel master virbr0 state DOWN group default qlen 1000

link/ether 52:54:00:db:51:2f brd ff:ff:ff:ff:ff:ff

backup:

[root@backup ~]# ip a

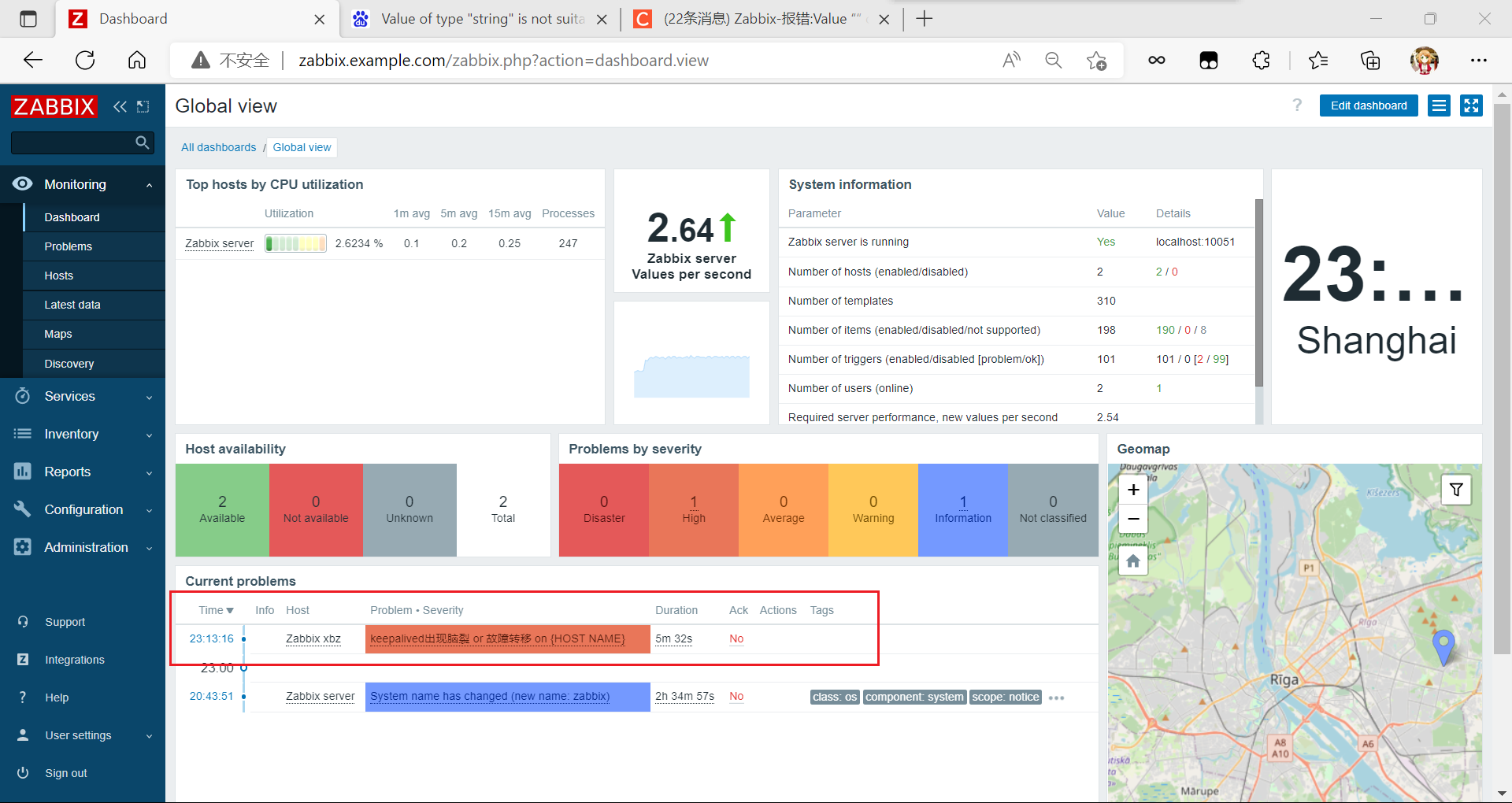

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:31:af:f9 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.139/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.222.133/32 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe31:aff9/64 scope link

valid_lft forever preferred_lft forever

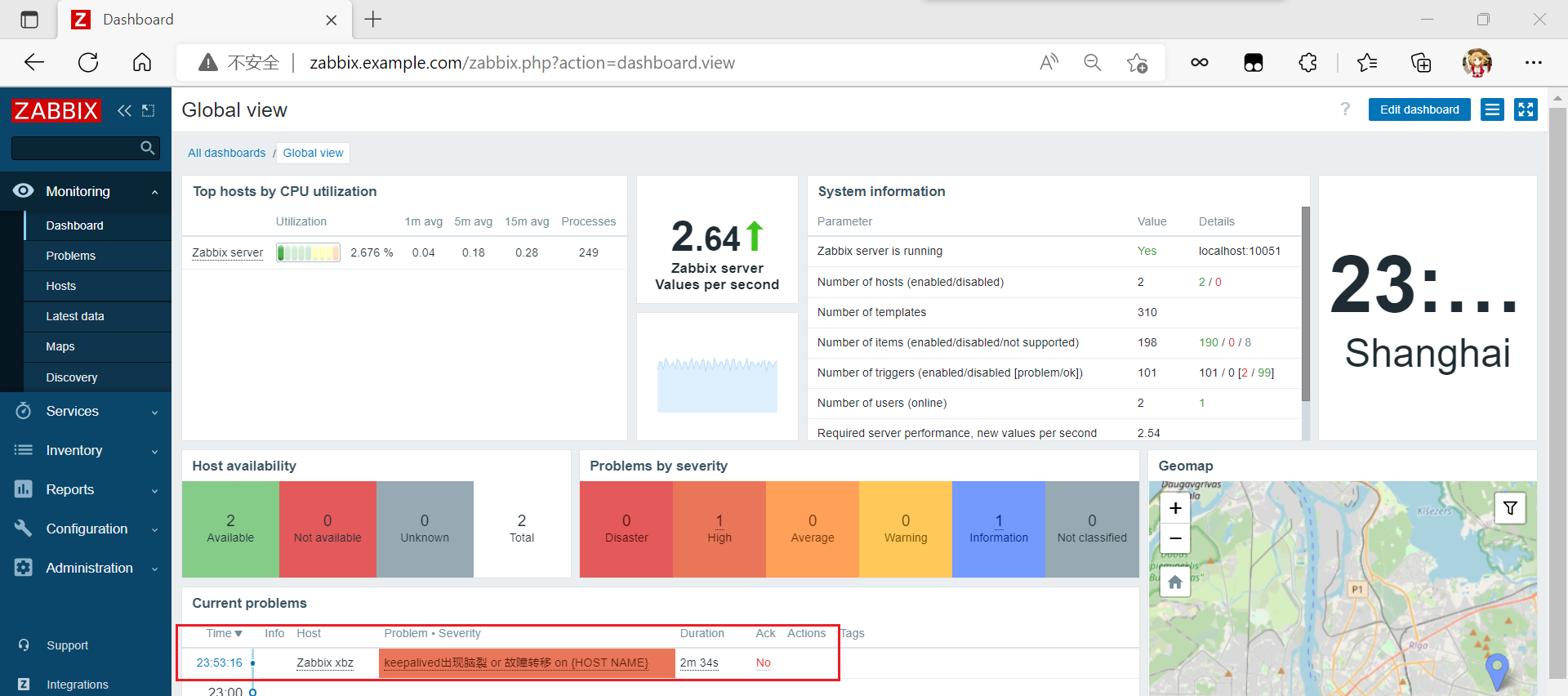

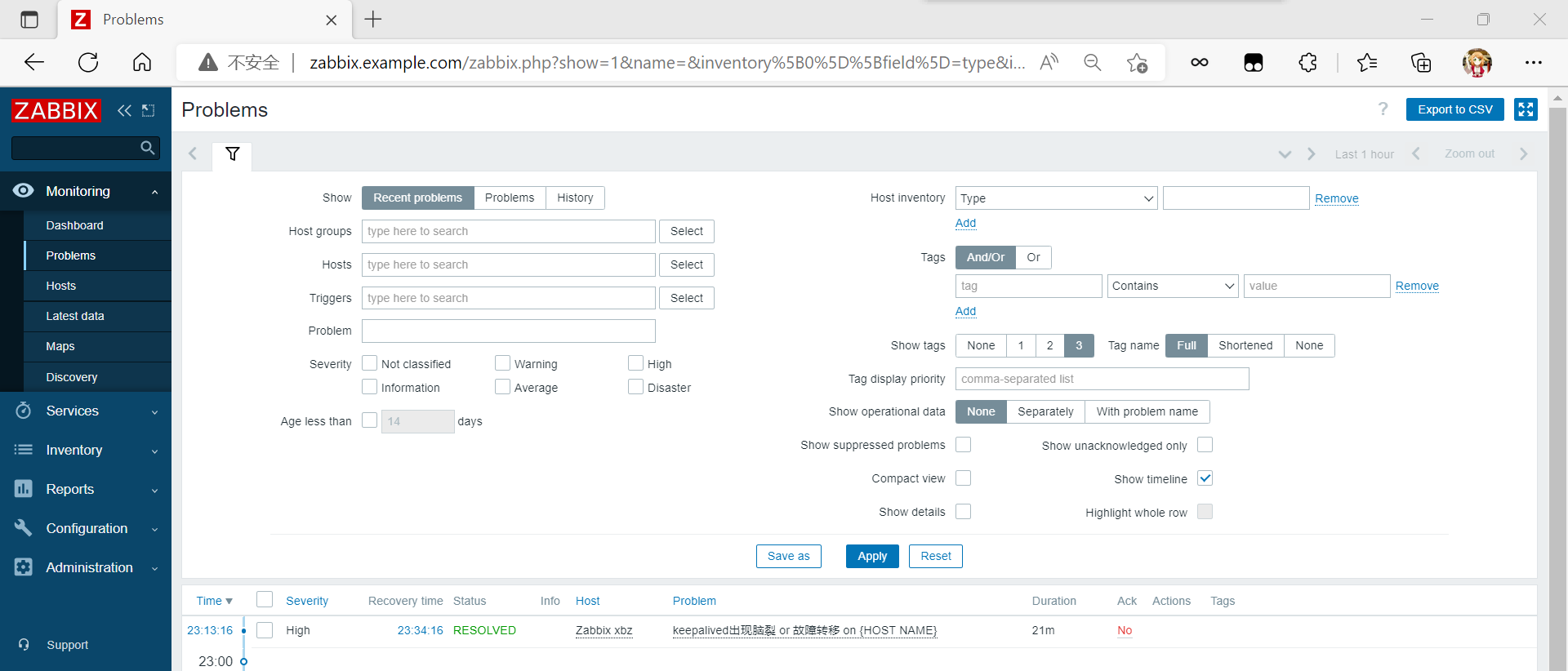

Check that the alert was triggered

Restart nginx and keepalived on master

[root@master ~]# systemctl restart nginx.service

[root@master ~]# systemctl restart keepalived.service

[root@master ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:f6:83:57 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.138/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.222.133/32 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fef6:8357/64 scope link

valid_lft forever preferred_lft forever

3: virbr0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default qlen 1000

link/ether 52:54:00:db:51:2f brd ff:ff:ff:ff:ff:ff

inet 192.168.122.1/24 brd 192.168.122.255 scope global virbr0

valid_lft forever preferred_lft forever

4: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc fq_codel master virbr0 state DOWN group default qlen 1000

link/ether 52:54:00:db:51:2f brd ff:ff:ff:ff:ff:ff

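//no alert at this point

Simulating split brain

Change the keepalived configuration file on the master host: set virtual_router_id to any value that differs from the one on backup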

master

[root@master ~]# vim /etc/keepalived/keepalived.conf

virtual_router_id 55 //changed from 51 to 55 here

[root@master ~]# systemctl restart keepalived.service

//restart keepalived
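The manual edit above could also be scripted, which makes it easier to undo after the test. This is a minimal sketch, assuming the file contains lines of the form `virtual_router_id <number>`; the function name `set_vrid` is hypothetical, and a backup copy is kept as FILE.bak.

```shell
#!/bin/bash
# set_vrid FILE NEW_ID: rewrite every virtual_router_id value in FILE to
# NEW_ID, keeping a copy of the original as FILE.bak.
set_vrid() {
    local file="$1" new_id="$2"
    cp "$file" "$file.bak" || return 1
    # Rewrite from the backup copy into the original path, so the file
    # keeps its ownership and permissions.
    awk -v id="$new_id" '
        $1 == "virtual_router_id" {
            sub(/virtual_router_id[ \t]+[0-9]+/, "virtual_router_id " id)
        }
        { print }
    ' "$file.bak" > "$file"
}
```

For the test in this section the call would be `set_vrid /etc/keepalived/keepalived.conf 55` followed by a keepalived restart; copying the .bak file back and restarting again restores the original state.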

[root@master ~]# ip a //the VIP is still present

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:f6:83:57 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.138/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.222.133/32 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fef6:8357/64 scope link

valid_lft forever preferred_lft forever

3: virbr0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default qlen 1000

link/ether 52:54:00:db:51:2f brd ff:ff:ff:ff:ff:ff

inet 192.168.122.1/24 brd 192.168.122.255 scope global virbr0

valid_lft forever preferred_lft forever

4: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc fq_codel master virbr0 state DOWN group default qlen 1000

link/ether 52:54:00:db:51:2f brd ff:ff:ff:ff:ff:ff

[root@master ~]# ss -antl //nginx is still running

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 128 0.0.0.0:111 0.0.0.0:*

LISTEN 0 128 0.0.0.0:80 0.0.0.0:*

LISTEN 0 32 192.168.122.1:53 0.0.0.0:*

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 [::]:111 [::]:*

LISTEN 0 128 [::]:80 [::]:*

LISTEN 0 128 [::]:22 [::]:*

backup

[root@backup ~]# ip a //the VIP is present here too

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:31:af:f9 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.139/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.222.133/32 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe31:aff9/64 scope link

valid_lft forever preferred_lft forever

[root@backup ~]# ss -antl //nginx is running here too

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 0.0.0.0:10050 0.0.0.0:*

LISTEN 0 128 0.0.0.0:80 0.0.0.0:*

LISTEN 0 128 [::]:22 [::]:*

LISTEN 0 128 [::]:80 [::]:*

An alert is now raised