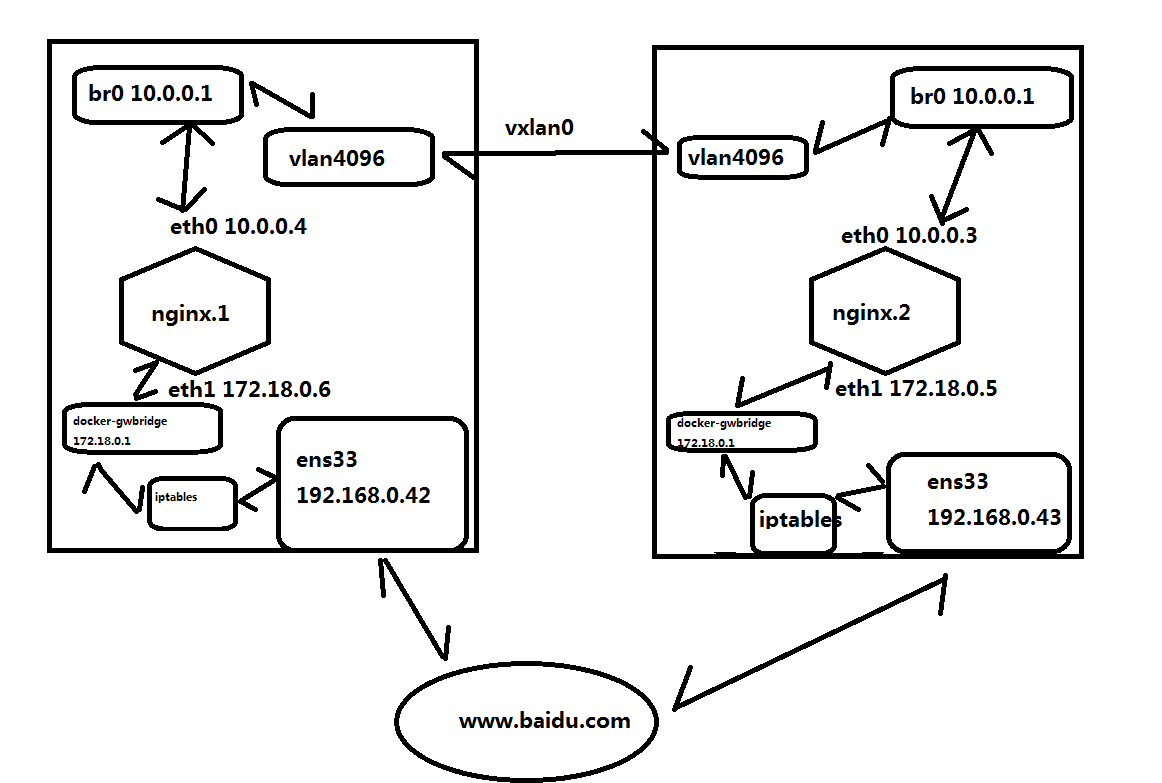

在管理節點上能夠訪問到容器服務的原因是通過訪問本機的80,通過iptables規則把流量轉發給docker_gwbridge,docker_gwbridge通過內核把流量轉發給ingress網路,因為ingress生效範圍是整個swarm,這意味著管理節點和work節點共用一個swarm的網路空間... ...

前文我聊到了docker machine的簡單使用和基本原理的說明,回顧請參考https://www.cnblogs.com/qiuhom-1874/p/13160915.html;今天我們來聊一聊docker集群管理工具docker swarm;docker swarm是docker 官方的集群管理工具,它可以讓跨主機節點來創建,管理docker 集群;它的主要作用就是可以把多個節點主機的docker環境整合成一個大的docker資源池;docker swarm面向的就是這個大的docker 資源池在上面管理容器;在前面我們都只是在單台主機上的創建,管理容器,但是在生產環境中通常一臺物理機上的容器實在是不能夠滿足當前業務的需求,所以docker swarm提供了一種集群解決方案,方便在多個節點上創建,管理容器;接下來我們來看看docker swarm集群的搭建過程吧;

docker swarm 在我們安裝好docker時就已經安裝好了,我們可以使用docker info來查看

[root@node1 ~]# docker info Client: Debug Mode: false Server: Containers: 0 Running: 0 Paused: 0 Stopped: 0 Images: 0 Server Version: 19.03.11 Storage Driver: overlay2 Backing Filesystem: xfs Supports d_type: true Native Overlay Diff: true Logging Driver: json-file Cgroup Driver: cgroupfs Plugins: Volume: local Network: bridge host ipvlan macvlan null overlay Log: awslogs fluentd gcplogs gelf journald json-file local logentries splunk syslog Swarm: inactive Runtimes: runc Default Runtime: runc Init Binary: docker-init containerd version: 7ad184331fa3e55e52b890ea95e65ba581ae3429 runc version: dc9208a3303feef5b3839f4323d9beb36df0a9dd init version: fec3683 Security Options: seccomp Profile: default Kernel Version: 3.10.0-693.el7.x86_64 Operating System: CentOS Linux 7 (Core) OSType: linux Architecture: x86_64 CPUs: 4 Total Memory: 3.686GiB Name: docker-node01 ID: 4HXP:YJ5W:4SM5:NAPM:NXPZ:QFIU:ARVJ:BYDG:KVWU:5AAJ:77GC:X7GQ Docker Root Dir: /var/lib/docker Debug Mode: false Registry: https://index.docker.io/v1/ Labels: provider=generic Experimental: false Insecure Registries: 127.0.0.0/8 Live Restore Enabled: false [root@node1 ~]#

提示:從上面的信息可以看到,swarm是處於非活躍狀態,這是因為我們還沒有初始化集群,所以對應的swarm選項的值是處於inactive狀態;

初始化集群

[root@docker-node01 ~]# docker swarm init --advertise-addr 192.168.0.41

Swarm initialized: current node (ynz304mbltxx10v3i15ldkmj1) is now a manager.

To add a worker to this swarm, run the following command:

docker swarm join --token SWMTKN-1-6difxlq3wc8emlwxzuw95gp8rmvbz2oq62kux3as0e4rbyqhk3-2m9x12n102ca4qlyjpseobzik 192.168.0.41:2377

To add a manager to this swarm, run 'docker swarm join-token manager' and follow the instructions.

[root@docker-node01 ~]#

提示:從上面反饋的信息可以看到,集群初始化成功,並且告訴我們當前節點為管理節點,如果想要其他節點加入到該集群,可以在對應節點上運行docker swarm join --token SWMTKN-1-6difxlq3wc8emlwxzuw95gp8rmvbz2oq62kux3as0e4rbyqhk3-2m9x12n102ca4qlyjpseobzik 192.168.0.41:2377 這個命令,就把對應節點當作work節點加入到該集群,如果想要以管理節點身份加入到集群,我們需要在當前終端運行docker swarm join-token manager命令

[root@docker-node01 ~]# docker swarm join-token manager

To add a manager to this swarm, run the following command:

docker swarm join --token SWMTKN-1-6difxlq3wc8emlwxzuw95gp8rmvbz2oq62kux3as0e4rbyqhk3-dqjeh8hp6cp99bksjc03b8yu3 192.168.0.41:2377

[root@docker-node01 ~]#

提示:我們執行docker swarm join-token manager命令,它返回了一個命令,並告訴我們添加一個管理節點,在對應節點上執行docker swarm join --token SWMTKN-1-6difxlq3wc8emlwxzuw95gp8rmvbz2oq62kux3as0e4rbyqhk3-dqjeh8hp6cp99bksjc03b8yu3 192.168.0.41:2377命令即可;

到此docker swarm集群就初始化完畢,接下來我們把其他節點加入到該集群

把docker-node02以work節點身份加入集群

[root@node2 ~]# docker swarm join --token SWMTKN-1-6difxlq3wc8emlwxzuw95gp8rmvbz2oq62kux3as0e4rbyqhk3-2m9x12n102ca4qlyjpseobzik 192.168.0.41:2377 This node joined a swarm as a worker. [root@node2 ~]#

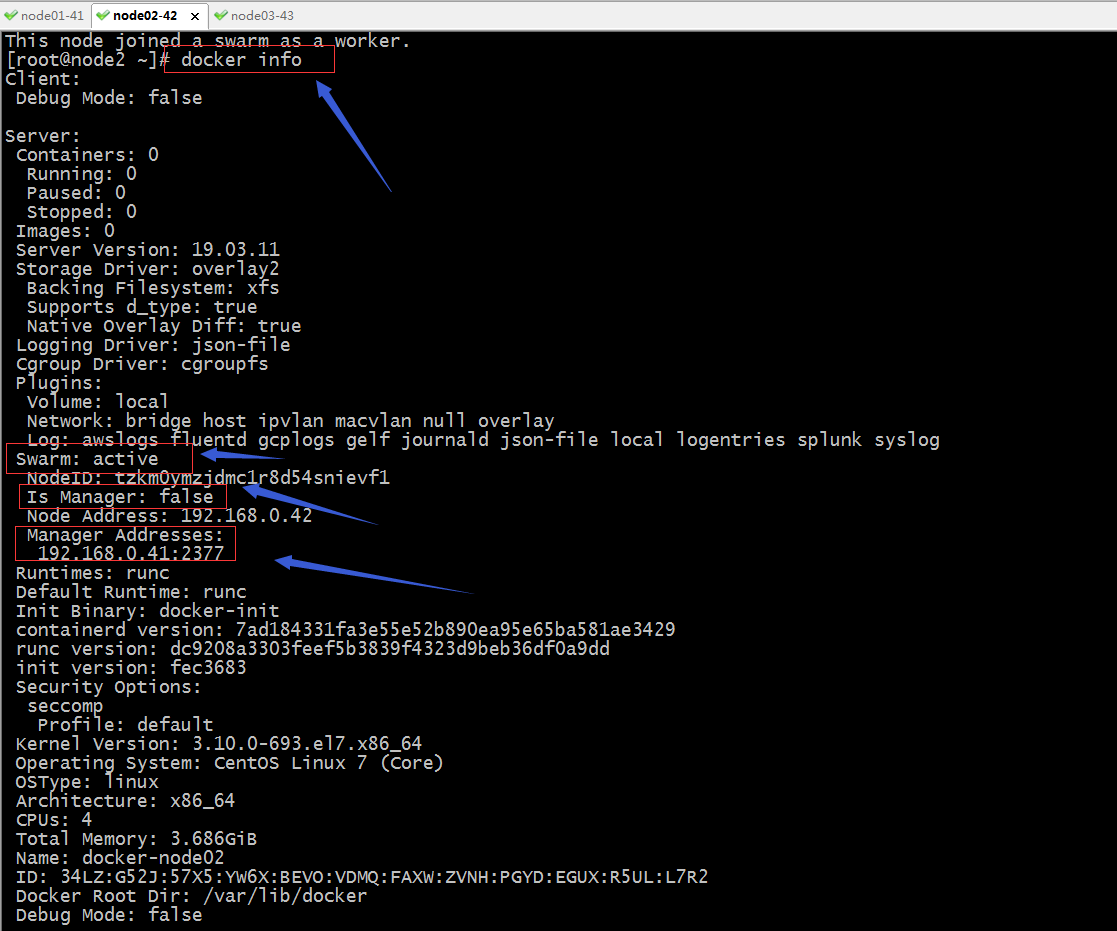

提示:沒有報錯就表示加入集群成功;我們可以使用docker info來查看當前的docker 環境詳細信息

提示:從上面的信息可以看到,在docker-node02這台主機上docker swarm 已經激活,並且可以看到管理節點的地址;除了以上方式可以確定docker-node02以及加入到集群;我們還可以在管理節點上運行docker node ls 查看集群節點信息;

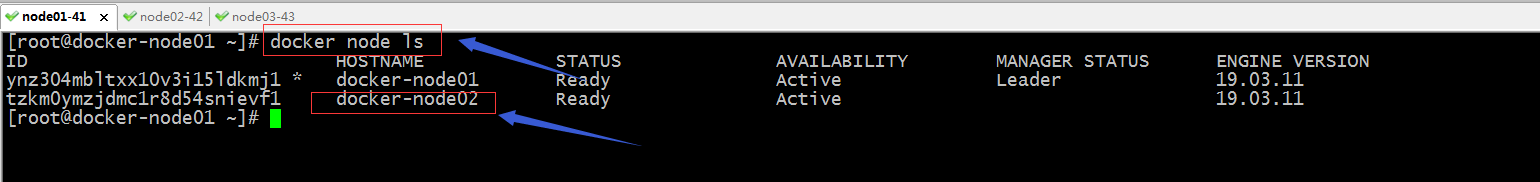

查看集群節點信息

提示:在管理節點上運行docker node ls 就可以列出當前集群里有多少節點已經成功加入進來;

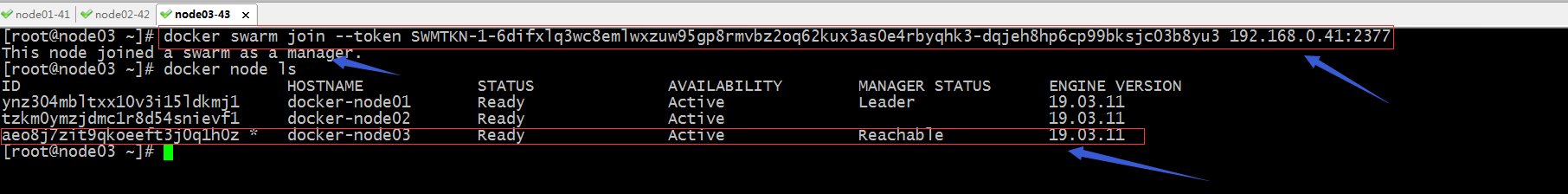

把docker-node03以管理節點身份加入到集群

提示:可以看到docker-node03已經是集群的管理節點,所以可以在docker-node03這個節點執行docker node ls 命令;到此docker swarm集群就搭建好了;接下來我們來說一說docker swarm集群的常用管理

有關節點相關管理命令

docker node ls :列出當前集群上的所有節點

[root@docker-node01 ~]# docker node ls ID HOSTNAME STATUS AVAILABILITY MANAGER STATUS ENGINE VERSION ynz304mbltxx10v3i15ldkmj1 * docker-node01 Ready Active Leader 19.03.11 tzkm0ymzjdmc1r8d54snievf1 docker-node02 Ready Active 19.03.11 aeo8j7zit9qkoeeft3j0q1h0z docker-node03 Ready Active Reachable 19.03.11 [root@docker-node01 ~]#

提示:該命令只能在管理節點上執行;

docker node inspect :查看指定節點的詳細信息;

[root@docker-node01 ~]# docker node inspect docker-node01

[

{

"ID": "ynz304mbltxx10v3i15ldkmj1",

"Version": {

"Index": 9

},

"CreatedAt": "2020-06-20T05:57:17.57684293Z",

"UpdatedAt": "2020-06-20T05:57:18.18575648Z",

"Spec": {

"Labels": {},

"Role": "manager",

"Availability": "active"

},

"Description": {

"Hostname": "docker-node01",

"Platform": {

"Architecture": "x86_64",

"OS": "linux"

},

"Resources": {

"NanoCPUs": 4000000000,

"MemoryBytes": 3958075392

},

"Engine": {

"EngineVersion": "19.03.11",

"Labels": {

"provider": "generic"

},

"Plugins": [

{

"Type": "Log",

"Name": "awslogs"

},

{

"Type": "Log",

"Name": "fluentd"

},

{

"Type": "Log",

"Name": "gcplogs"

},

{

"Type": "Log",

"Name": "gelf"

},

{

"Type": "Log",

"Name": "journald"

},

{

"Type": "Log",

"Name": "json-file"

},

{

"Type": "Log",

"Name": "local"

},

{

"Type": "Log",

"Name": "logentries"

},

{

"Type": "Log",

"Name": "splunk"

},

{

"Type": "Log",

"Name": "syslog"

},

{

"Type": "Network",

"Name": "bridge"

},

{

"Type": "Network",

"Name": "host"

},

{

"Type": "Network",

"Name": "ipvlan"

},

{

"Type": "Network",

"Name": "macvlan"

},

{

"Type": "Network",

"Name": "null"

},

{

"Type": "Network",

"Name": "overlay"

},

{

"Type": "Volume",

"Name": "local"

}

]

},

"TLSInfo": {

"TrustRoot": "-----BEGIN CERTIFICATE-----\nMIIBaTCCARCgAwIBAgIUeBd/eSZ7WaiyLby9o1yWpjps3gwwCgYIKoZIzj0EAwIw\nEzERMA8GA1UEAxMIc3dhcm0tY2EwHhcNMjAwNjIwMDU1MjAwWhcNNDAwNjE1MDU1\nMjAwWjATMREwDwYDVQQDEwhzd2FybS1jYTBZMBMGByqGSM49AgEGCCqGSM49AwEH\nA0IABMsYxnGoPbM4gqb23E1TvOeQcLcY56XysLuF8tYKm56GuKpeD/SqXrUCYqKZ\nHV+WSqcM0fD1g+mgZwlUwFzNxhajQjBAMA4GA1UdDwEB/wQEAwIBBjAPBgNVHRMB\nAf8EBTADAQH/MB0GA1UdDgQWBBTV64kbvS83eRHyI6hdJeEIv3GmrTAKBggqhkjO\nPQQDAgNHADBEAiBBB4hLn0ijybJWH5j5rtMdAoj8l/6M3PXERnRSlhbcawIgLoby\newMHCnm8IIrUGe7s4CZ07iHG477punuPMKDgqJ0=\n-----END CERTIFICATE-----\n",

"CertIssuerSubject": "MBMxETAPBgNVBAMTCHN3YXJtLWNh",

"CertIssuerPublicKey": "MFkwEwYHKoZIzj0CAQYIKoZIzj0DAQcDQgAEyxjGcag9sziCpvbcTVO855BwtxjnpfKwu4Xy1gqbnoa4ql4P9KpetQJiopkdX5ZKpwzR8PWD6aBnCVTAXM3GFg=="

}

},

"Status": {

"State": "ready",

"Addr": "192.168.0.41"

},

"ManagerStatus": {

"Leader": true,

"Reachability": "reachable",

"Addr": "192.168.0.41:2377"

}

}

]

[root@docker-node01 ~]#

docker node ps :列出指定節點上運行容器的清單

[root@docker-node01 ~]# docker node ps ID NAME IMAGE NODE DESIRED STATE CURRENT STATE ERROR PORTS [root@docker-node01 ~]# docker node ps docker-node01 ID NAME IMAGE NODE DESIRED STATE CURRENT STATE ERROR PORTS [root@docker-node01 ~]#

提示:類似docker ps 命令,我上面沒有運行容器,所以看不到對應信息;預設不指定節點名稱表示查看當前節點上的運行容器清單;

docker node rm :刪除指定節點

[root@docker-node01 ~]# docker node ls ID HOSTNAME STATUS AVAILABILITY MANAGER STATUS ENGINE VERSION ynz304mbltxx10v3i15ldkmj1 * docker-node01 Ready Active Leader 19.03.11 tzkm0ymzjdmc1r8d54snievf1 docker-node02 Ready Active 19.03.11 aeo8j7zit9qkoeeft3j0q1h0z docker-node03 Ready Active Reachable 19.03.11 [root@docker-node01 ~]# docker node rm docker-node03 Error response from daemon: rpc error: code = FailedPrecondition desc = node aeo8j7zit9qkoeeft3j0q1h0z is a cluster manager and is a member of the raft cluster. It must be demoted to worker before removal [root@docker-node01 ~]# docker node rm docker-node02 Error response from daemon: rpc error: code = FailedPrecondition desc = node tzkm0ymzjdmc1r8d54snievf1 is not down and can't be removed [root@docker-node01 ~]#

提示:刪除節點前必須滿足,被刪除的節點不是管理節點,其次就是要刪除的節點必須是down狀態;

docker swarm leave:離開當前集群

[root@docker-node03 ~]# docker ps CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES e7958ffa16cd nginx "/docker-entrypoint.…" 28 seconds ago Up 26 seconds 80/tcp n1 [root@docker-node03 ~]# docker swarm leave Error response from daemon: You are attempting to leave the swarm on a node that is participating as a manager. Removing this node leaves 1 managers out of 2. Without a Raft quorum your swarm will be inaccessible. The only way to restore a swarm that has lost consensus is to reinitialize it with `--force-new-cluster`. Use `--force` to suppress this message. [root@docker-node03 ~]# docker swarm leave -f Node left the swarm. [root@docker-node03 ~]#

提示:管理節點預設是不允許離開集群的,如果強制使用-f選項離開集群,會導致在其他管理節點無法正常管理集群;

[root@docker-node01 ~]# docker node ls Error response from daemon: rpc error: code = Unknown desc = The swarm does not have a leader. It's possible that too few managers are online. Make sure more than half of the managers are online. [root@docker-node01 ~]#

提示:我們在docker-node01上現在就不能使用docker node ls 來查看集群節點列表了;解決辦法重新初始化集群;

[root@docker-node01 ~]# docker node ls

Error response from daemon: rpc error: code = Unknown desc = The swarm does not have a leader. It's possible that too few managers are online. Make sure more than half of the managers are online.

[root@docker-node01 ~]# docker swarm init --advertise-addr 192.168.0.41

Error response from daemon: This node is already part of a swarm. Use "docker swarm leave" to leave this swarm and join another one.

[root@docker-node01 ~]# docker swarm init --force-new-cluster

Swarm initialized: current node (ynz304mbltxx10v3i15ldkmj1) is now a manager.

To add a worker to this swarm, run the following command:

docker swarm join --token SWMTKN-1-6difxlq3wc8emlwxzuw95gp8rmvbz2oq62kux3as0e4rbyqhk3-2m9x12n102ca4qlyjpseobzik 192.168.0.41:2377

To add a manager to this swarm, run 'docker swarm join-token manager' and follow the instructions.

[root@docker-node01 ~]# docker node ls

ID HOSTNAME STATUS AVAILABILITY MANAGER STATUS ENGINE VERSION

ynz304mbltxx10v3i15ldkmj1 * docker-node01 Ready Active Leader 19.03.11

tzkm0ymzjdmc1r8d54snievf1 docker-node02 Unknown Active 19.03.11

aeo8j7zit9qkoeeft3j0q1h0z docker-node03 Down Active 19.03.11

rm3j7cjvmoa35yy8ckuzoay46 docker-node03 Unknown Active 19.03.11

[root@docker-node01 ~]#

提示:重新初始化集群不能使用docker swarm init --advertise-addr 192.168.0.41這種方式初始化,必須使用docker swarm init --force-new-cluster,該命令表示使用從當前狀態強制創建一個集群;現在我們就可以使用docker node rm 把down狀態的節點從集群刪除;

刪除down狀態的節點

[root@docker-node01 ~]# docker node ls ID HOSTNAME STATUS AVAILABILITY MANAGER STATUS ENGINE VERSION ynz304mbltxx10v3i15ldkmj1 * docker-node01 Ready Active Leader 19.03.11 tzkm0ymzjdmc1r8d54snievf1 docker-node02 Ready Active 19.03.11 aeo8j7zit9qkoeeft3j0q1h0z docker-node03 Down Active 19.03.11 rm3j7cjvmoa35yy8ckuzoay46 docker-node03 Down Active 19.03.11 [root@docker-node01 ~]# docker node rm aeo8j7zit9qkoeeft3j0q1h0z rm3j7cjvmoa35yy8ckuzoay46 aeo8j7zit9qkoeeft3j0q1h0z rm3j7cjvmoa35yy8ckuzoay46 [root@docker-node01 ~]# docker node ls ID HOSTNAME STATUS AVAILABILITY MANAGER STATUS ENGINE VERSION ynz304mbltxx10v3i15ldkmj1 * docker-node01 Ready Active Leader 19.03.11 tzkm0ymzjdmc1r8d54snievf1 docker-node02 Ready Active 19.03.11 [root@docker-node01 ~]#

docker node promote:把指定節點提升為管理節點

[root@docker-node01 ~]# docker node ls ID HOSTNAME STATUS AVAILABILITY MANAGER STATUS ENGINE VERSION ynz304mbltxx10v3i15ldkmj1 * docker-node01 Ready Active Leader 19.03.11 tzkm0ymzjdmc1r8d54snievf1 docker-node02 Ready Active 19.03.11 [root@docker-node01 ~]# docker node promote docker-node02 Node docker-node02 promoted to a manager in the swarm. [root@docker-node01 ~]# docker node ls ID HOSTNAME STATUS AVAILABILITY MANAGER STATUS ENGINE VERSION ynz304mbltxx10v3i15ldkmj1 * docker-node01 Ready Active Leader 19.03.11 tzkm0ymzjdmc1r8d54snievf1 docker-node02 Ready Active Reachable 19.03.11 [root@docker-node01 ~]#

docker node demote:把指定節點降級為work節點

[root@docker-node01 ~]# docker node ls ID HOSTNAME STATUS AVAILABILITY MANAGER STATUS ENGINE VERSION ynz304mbltxx10v3i15ldkmj1 * docker-node01 Ready Active Leader 19.03.11 tzkm0ymzjdmc1r8d54snievf1 docker-node02 Ready Active Reachable 19.03.11 [root@docker-node01 ~]# docker node demote docker-node02 Manager docker-node02 demoted in the swarm. [root@docker-node01 ~]# docker node ls ID HOSTNAME STATUS AVAILABILITY MANAGER STATUS ENGINE VERSION ynz304mbltxx10v3i15ldkmj1 * docker-node01 Ready Active Leader 19.03.11 tzkm0ymzjdmc1r8d54snievf1 docker-node02 Ready Active 19.03.11 [root@docker-node01 ~]#

docker node update:更新指定節點

[root@docker-node01 ~]# docker node ls ID HOSTNAME STATUS AVAILABILITY MANAGER STATUS ENGINE VERSION ynz304mbltxx10v3i15ldkmj1 * docker-node01 Ready Active Leader 19.03.11 tzkm0ymzjdmc1r8d54snievf1 docker-node02 Ready Active 19.03.11 [root@docker-node01 ~]# docker node update docker-node01 --availability drain docker-node01 [root@docker-node01 ~]# docker node ls ID HOSTNAME STATUS AVAILABILITY MANAGER STATUS ENGINE VERSION ynz304mbltxx10v3i15ldkmj1 * docker-node01 Ready Drain Leader 19.03.11 tzkm0ymzjdmc1r8d54snievf1 docker-node02 Ready Active 19.03.11 [root@docker-node01 ~]#

提示:以上命令把docker-node01的availability屬性更改為drain,這樣更改後docker-node01的資源就不會被調度到用來運行容器;

為docker swarm集群添加圖形界面

[root@docker-node01 docker]# docker run --name v1 -d -p 8888:8080 -e HOST=192.168.0.41 -e PORT=8080 -v /var/run/docker.sock:/var/run/docker.sock docker-registry.io/test/visualizer Unable to find image 'docker-registry.io/test/visualizer:latest' locally latest: Pulling from test/visualizer cd784148e348: Pull complete f6268ae5d1d7: Pull complete 97eb9028b14b: Pull complete 9975a7a2a3d1: Pull complete ba903e5e6801: Pull complete 7f034edb1086: Pull complete cd5dbf77b483: Pull complete 5e7311667ddb: Pull complete 687c1072bfcb: Pull complete aa18e5d3472c: Pull complete a3da1957bd6b: Pull complete e42dbf1c67c4: Pull complete 5a18b01011d2: Pull complete Digest: sha256:54d65cbcbff52ee7d789cd285fbe68f07a46e3419c8fcded437af4c616915c85 Status: Downloaded newer image for docker-registry.io/test/visualizer:latest 3c15b186ff51848130393944e09a427bd40d2504c54614f93e28477a4961f8b6 [root@docker-node01 docker]# docker ps CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES 3c15b186ff51 docker-registry.io/test/visualizer "npm start" 6 seconds ago Up 5 seconds (health: starting) 0.0.0.0:8888->8080/tcp v1 [root@docker-node01 docker]#

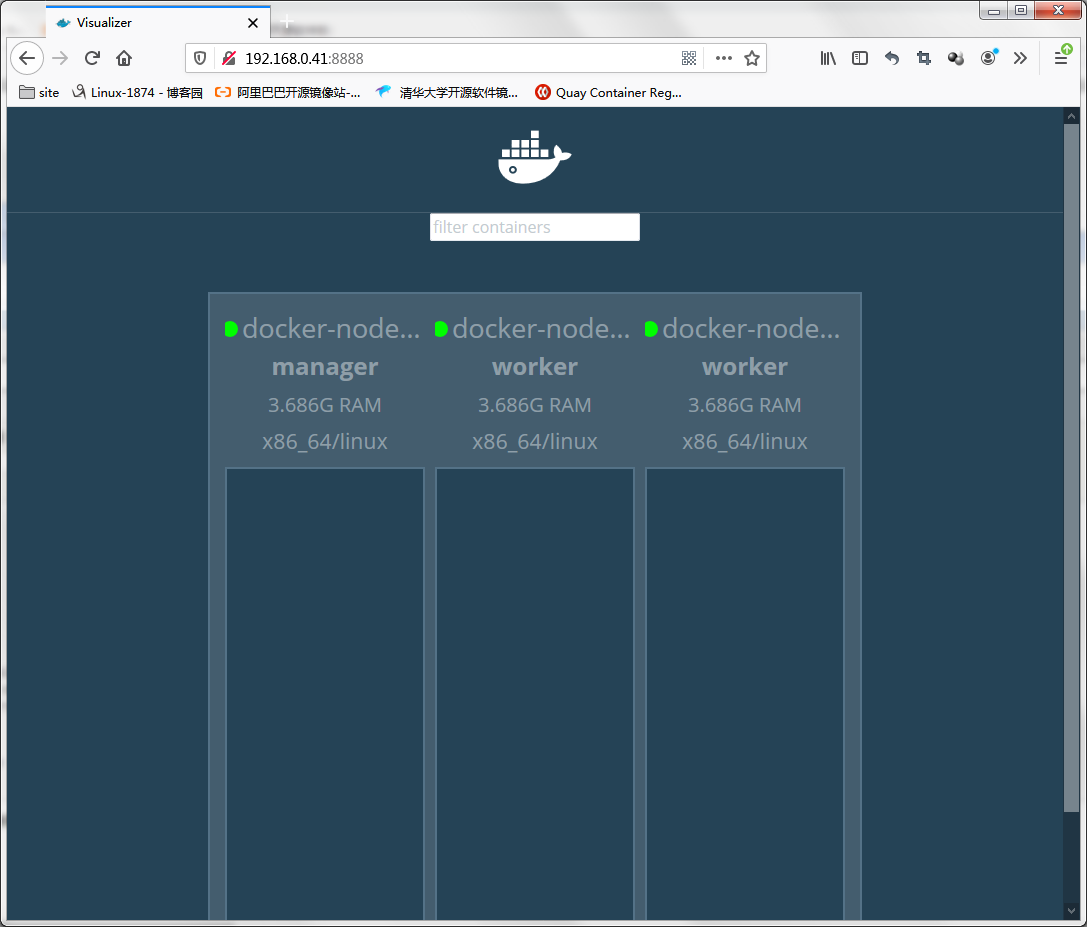

提示:我上面的命令是從私有倉庫中下載的鏡像,原因是互聯網下載太慢了,所以我提前下載好,放在私有倉庫中;有關私有倉庫的搭建使用,請參考https://www.cnblogs.com/qiuhom-1874/p/13061984.html或者https://www.cnblogs.com/qiuhom-1874/p/13058338.html;在管理節點上運行visualizer容器後,我們就可以直接訪問該管理節點地址的8888埠,就可以看到當前容器的情況;如下圖

提示:從上面的信息可以看到當前集群有一個管理節點和兩個work節點;現目前集群里沒有運行任何容器;

在docker swarm運行服務

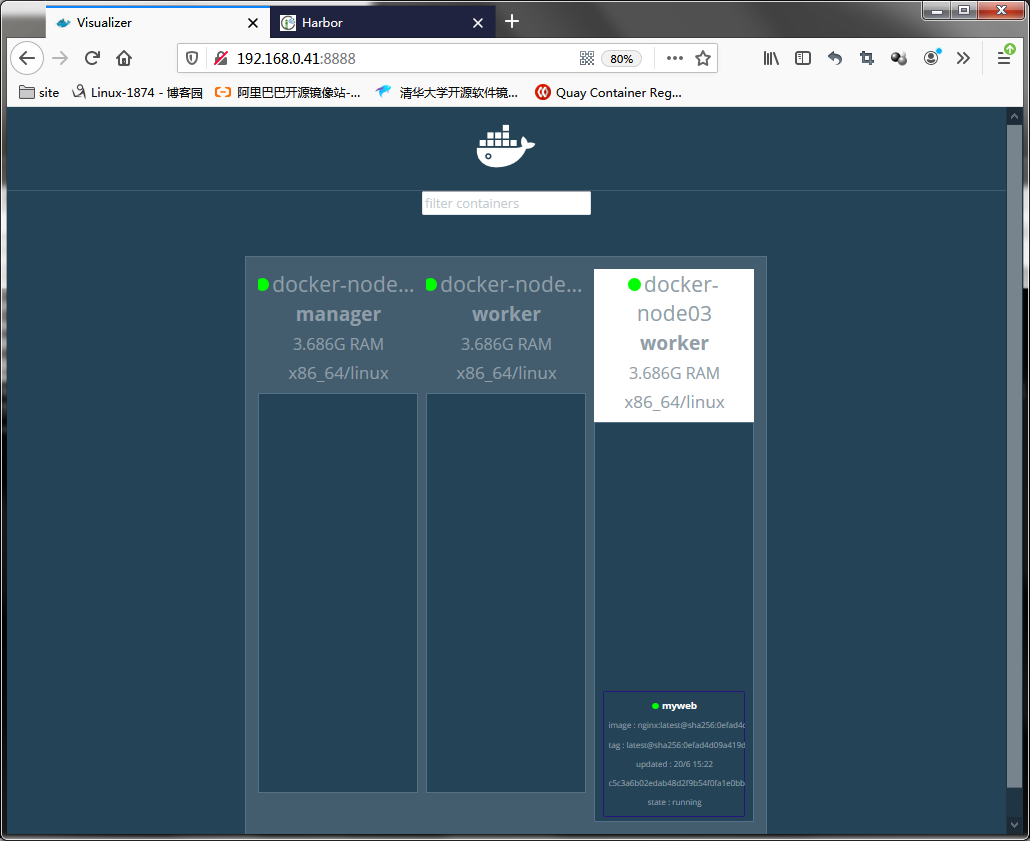

[root@docker-node01 ~]# docker service create --name myweb docker-registry.io/test/nginx:latest i0j6wvvtfe1360ibj04jxulmd overall progress: 1 out of 1 tasks 1/1: running [==================================================>] verify: Service converged [root@docker-node01 ~]# docker service ls ID NAME MODE REPLICAS IMAGE PORTS i0j6wvvtfe13 myweb replicated 1/1 docker-registry.io/test/nginx:latest [root@docker-node01 ~]# docker service ps myweb ID NAME IMAGE NODE DESIRED STATE CURRENT STATE ERROR PORTS 99y8towew77e myweb.1 docker-registry.io/test/nginx:latest docker-node03 Running Running 1 minutes ago [root@docker-node01 ~]#

提示:docker service create 表示在當前swarm集群環境中創建一個服務;以上命令表示在swarm集群上創建一個名為myweb的服務,用docker-registry.io/test/nginx:latest鏡像;預設情況下只啟動一個副本;

提示:可以看到當前集群中運行了一個myweb的容器,並且運行在docker-node03這台主機上;

在swarm 集群上創建多個副本服務

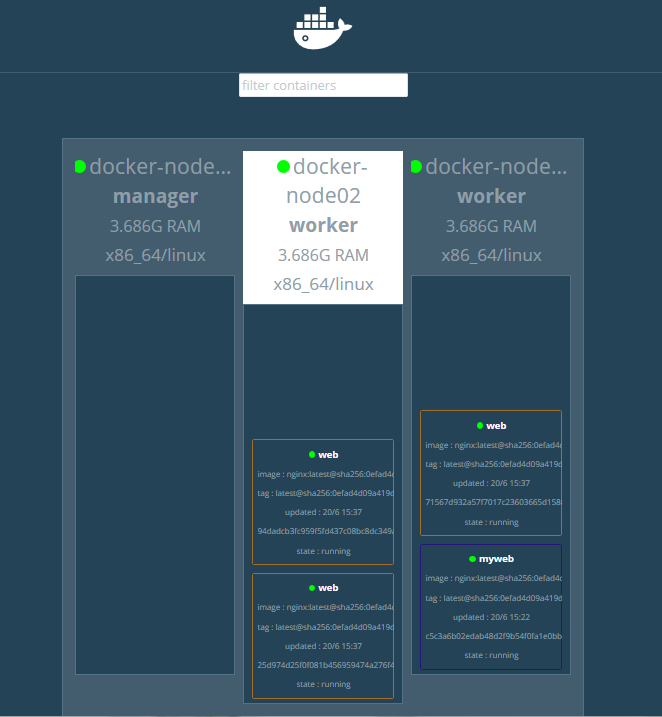

[root@docker-node01 ~]# docker service create --replicas 3 --name web docker-registry.io/test/nginx:latest mbiap412jyugfpi4a38mb5i1k overall progress: 3 out of 3 tasks 1/3: running [==================================================>] 2/3: running [==================================================>] 3/3: running [==================================================>] verify: Service converged [root@docker-node01 ~]# docker service ls ID NAME MODE REPLICAS IMAGE PORTS i0j6wvvtfe13 myweb replicated 1/1 docker-registry.io/test/nginx:latest mbiap412jyug web replicated 3/3 docker-registry.io/test/nginx:latest [root@docker-node01 ~]#docker service ps web ID NAME IMAGE NODE DESIRED STATE CURRENT STATE ERROR PORTS 1rt0e7u4senz web.1 docker-registry.io/test/nginx:latest docker-node02 Running Running 28 seconds ago 31ll0zu7udld web.2 docker-registry.io/test/nginx:latest docker-node02 Running Running 28 seconds ago l9jtbswl2x22 web.3 docker-registry.io/test/nginx:latest docker-node03 Running Running 32 seconds ago [root@docker-node01 ~]#

提示:--replicas選項用來指定期望運行的副本數量,該選項會在集群上創建我們指定數量的副本,即便我們集群中有節點宕機,它始終會創建我們指定數量的容器在集群上運行著;

測試:把docker-node03關機,看看我們運行的服務是否會遷移到節點2上呢?

docker-node03關機前

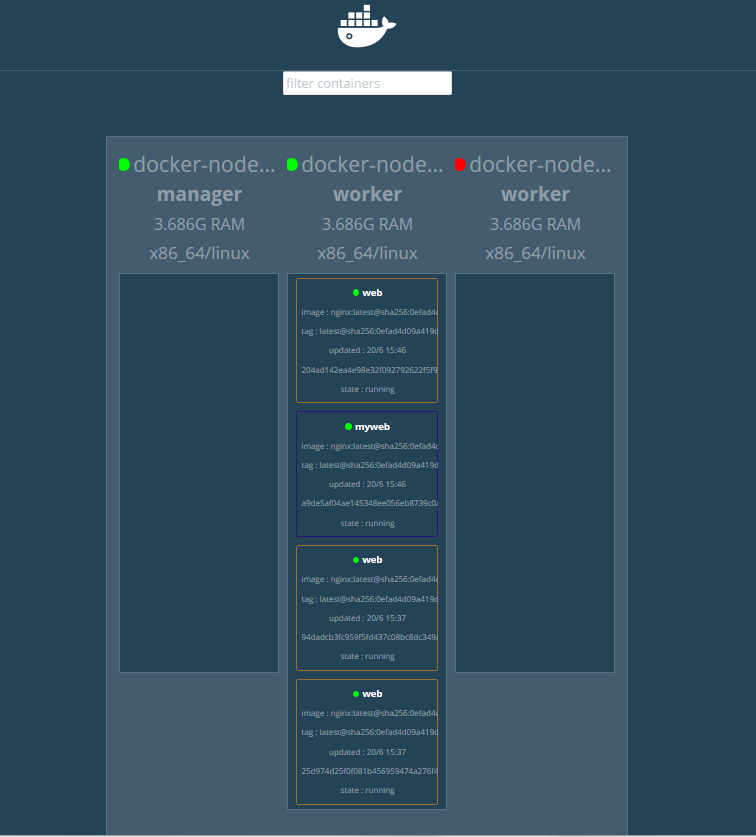

docker-node03關機後

提示:從上面的截圖可以看到,當節點3宕機後,節點3上跑的所有容器,會全部遷移到節點2上來;這就是創建容器時用--replicas選項的作用;總結一點,創建服務使用副本模式,該服務所在節點故障,它會把對應節點上的服務遷移到其他節點上;這裡需要提醒一點的是,只要集群上的服務副本滿足我們指定的replicas的數量,即便故障的節點恢復了,它是不會把服務遷移回來的;

[root@docker-node01 ~]# docker service ps web ID NAME IMAGE NODE DESIRED STATE CURRENT STATE ERROR PORTS 1rt0e7u4senz web.1 docker-registry.io/test/nginx:latest docker-node02 Running Running 15 minutes ago 31ll0zu7udld web.2 docker-registry.io/test/nginx:latest docker-node02 Running Running 15 minutes ago t3gjvsgtpuql web.3 docker-registry.io/test/nginx:latest docker-node02 Running Running 6 minutes ago l9jtbswl2x22 \_ web.3 docker-registry.io/test/nginx:latest docker-node03 Shutdown Shutdown 23 seconds ago [root@docker-node01 ~]#

提示:我們在管理節點查看服務列表,可以看到它遷移服務就是把對應節點上的副本停掉,然後在其他節點創建一個新的副本;

服務伸縮

[root@docker-node01 ~]# docker service ls ID NAME MODE REPLICAS IMAGE PORTS i0j6wvvtfe13 myweb replicated 1/1 docker-registry.io/test/nginx:latest mbiap412jyug web replicated 3/3 docker-registry.io/test/nginx:latest [root@docker-node01 ~]# docker service scale myweb=3 web=5 myweb scaled to 3 web scaled to 5 overall progress: 3 out of 3 tasks 1/3: running [==================================================>] 2/3: running [==================================================>] 3/3: running [==================================================>] verify: Service converged overall progress: 5 out of 5 tasks 1/5: running [==================================================>] 2/5: running [==================================================>] 3/5: running [==================================================>] 4/5: running [==================================================>] 5/5: running [==================================================>] verify: Service converged [root@docker-node01 ~]# docker service ls ID NAME MODE REPLICAS IMAGE PORTS i0j6wvvtfe13 myweb replicated 3/3 docker-registry.io/test/nginx:latest mbiap412jyug web replicated 5/5 docker-registry.io/test/nginx:latest [root@docker-node01 ~]# docker service ps myweb web ID NAME IMAGE NODE DESIRED STATE CURRENT STATE ERROR PORTS j7w490h2lons myweb.1 docker-registry.io/test/nginx:latest docker-node02 Running Running 12 minutes ago 1rt0e7u4senz web.1 docker-registry.io/test/nginx:latest docker-node02 Running Running 21 minutes ago 99y8towew77e myweb.1 docker-registry.io/test/nginx:latest docker-node03 Shutdown Shutdown 5 minutes ago en5rk0jf09wu myweb.2 docker-registry.io/test/nginx:latest docker-node03 Running Running 31 seconds ago 31ll0zu7udld web.2 docker-registry.io/test/nginx:latest docker-node02 Running Running 21 minutes ago h1hze7h819ca myweb.3 docker-registry.io/test/nginx:latest docker-node03 Running Running 30 seconds ago t3gjvsgtpuql web.3 docker-registry.io/test/nginx:latest docker-node02 Running Running 12 minutes ago l9jtbswl2x22 \_ web.3 docker-registry.io/test/nginx:latest docker-node03 Shutdown Shutdown 5 minutes ago od3ti2ixpsgc web.4 docker-registry.io/test/nginx:latest docker-node03 Running Running 31 seconds ago n1vur8wbmkgz web.5 docker-registry.io/test/nginx:latest docker-node03 Running Running 31 seconds ago [root@docker-node01 ~]#

提示:docker service scale 命令用來指定服務的副本數量,從而實現動態伸縮;

服務暴露

[root@docker-node01 ~]# docker service ls ID NAME MODE REPLICAS IMAGE PORTS i0j6wvvtfe13 myweb replicated 3/3 docker-registry.io/test/nginx:latest mbiap412jyug web replicated 5/5 docker-registry.io/test/nginx:latest [root@docker-node01 ~]# docker service update --publish-add 80:80 myweb myweb overall progress: 3 out of 3 tasks 1/3: running [==================================================>] 2/3: running [==================================================>] 3/3: running [==================================================>] verify: Service converged [root@docker-node01 ~]#

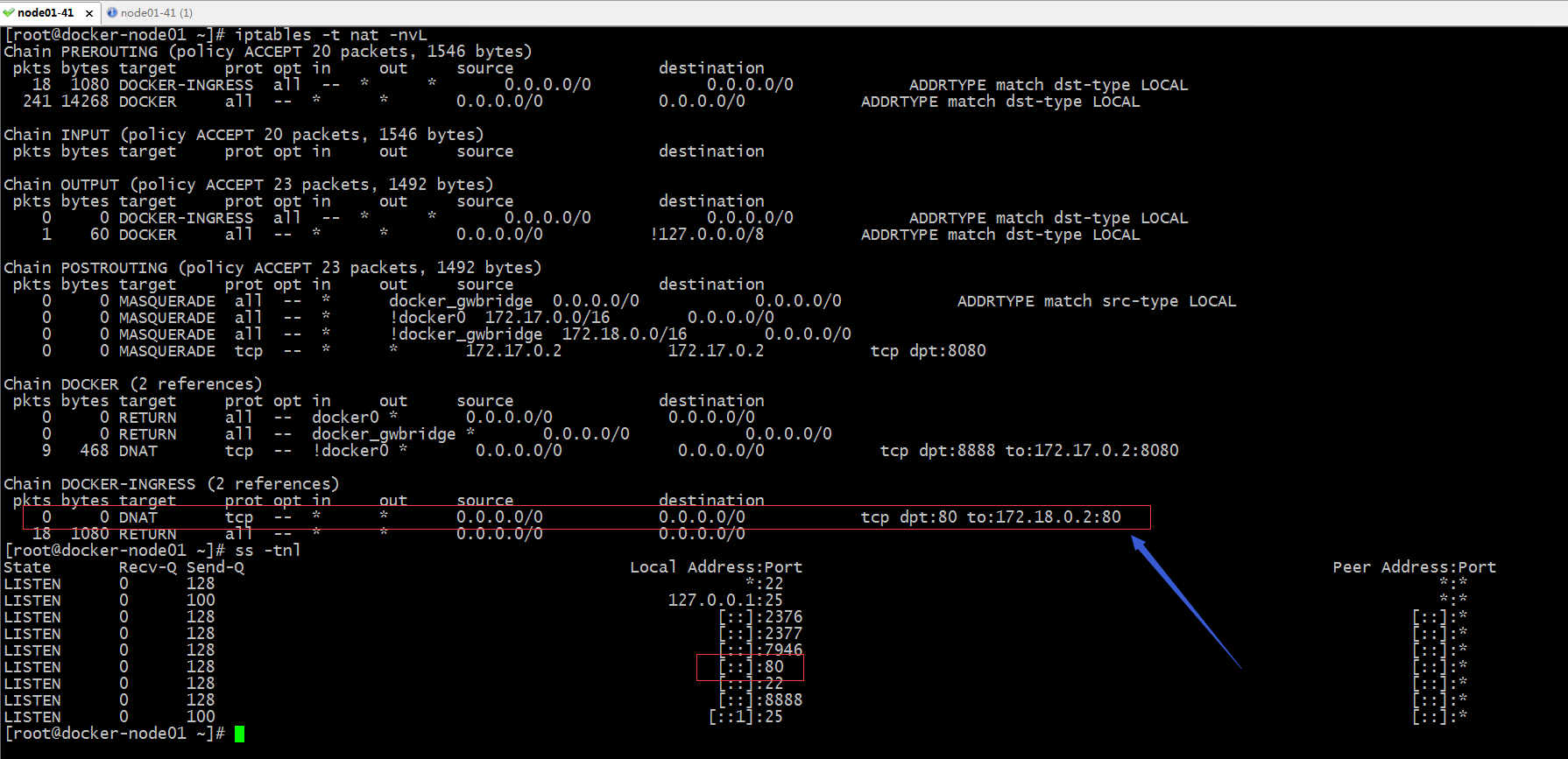

提示:docker swarm集群中的服務暴露和docker裡面的埠暴露原理是一樣的,都是通過iptables 規則表或LVS規則實現的;

提示:我們可以在管理節點上看到對應80埠已經處於監聽狀態,並且在iptables規則表中多了一項訪問本機80埠都DNAT到172.18.0.2的80上了;其實不光是在管理節點,在work節點上相應的iptables規則也都發生了變化;如下

提示:從上面的規則來看,我們訪問節點地址的80埠,都會DNAT到172.18.0.2的80;

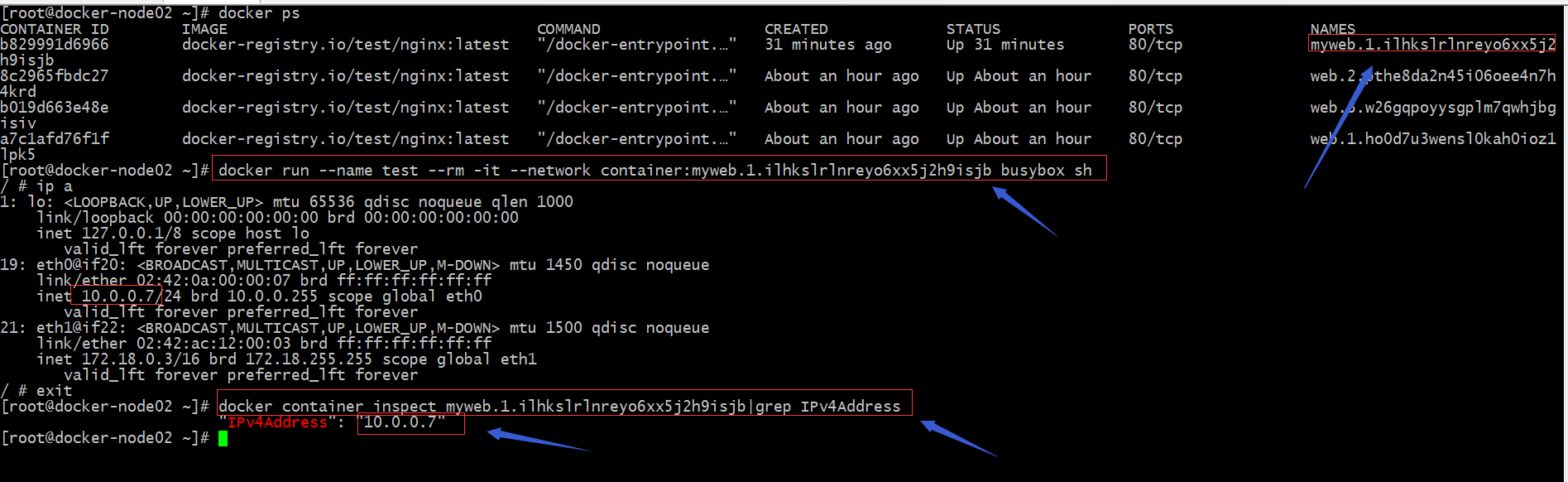

提示:從上面是顯示結果看,我們不難得知在docker-node02運行myweb容器的內部地址是10.0.0.7,那為什麼我們訪問172.18.0.2是能夠訪問到容器內部的服務呢?

測試:我們在docker-node02追蹤查看nginx容器的訪問日誌,看看到容器的IP地址是那個?

[root@docker-node02 ~]# docker ps CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES 2134e1b2c689 docker-registry.io/test/nginx:latest "/docker-entrypoint.…" 24 minutes ago Up 24 minutes 80/tcp nginx.1.ych7y3ugxp6o592pbz5k2i412 [root@docker-node02 ~]# docker logs -f nginx.1.ych7y3ugxp6o592pbz5k2i412 /docker-entrypoint.sh: /docker-entrypoint.d/ is not empty, will attempt to perform configuration /docker-entrypoint.sh: Looking for shell scripts in /docker-entrypoint.d/ /docker-entrypoint.sh: Launching /docker-entrypoint.d/10-listen-on-ipv6-by-default.sh 10-listen-on-ipv6-by-default.sh: Getting the checksum of /etc/nginx/conf.d/default.conf 10-listen-on-ipv6-by-default.sh: Enabled listen on IPv6 in /etc/nginx/conf.d/default.conf /docker-entrypoint.sh: Launching /docker-entrypoint.d/20-envsubst-on-templates.sh /docker-entrypoint.sh: Configuration complete; ready for start up 10.0.0.3 - - [21/Jun/2020:02:37:11 +0000] "GET / HTTP/1.1" 200 612 "-" "curl/7.29.0" "-" 172.18.0.1 - - [21/Jun/2020:02:38:35 +0000] "GET / HTTP/1.1" 200 612 "-" "curl/7.29.0" "-" 10.0.0.2 - - [21/Jun/2020:02:53:32 +0000] "GET / HTTP/1.1" 200 612 "-" "curl/7.29.0" "-" 10.0.0.2 - - [21/Jun/2020:02:53:58 +0000] "GET / HTTP/1.1" 200 612 "-" "curl/7.29.0" "-" ^C [root@docker-node02 ~]#

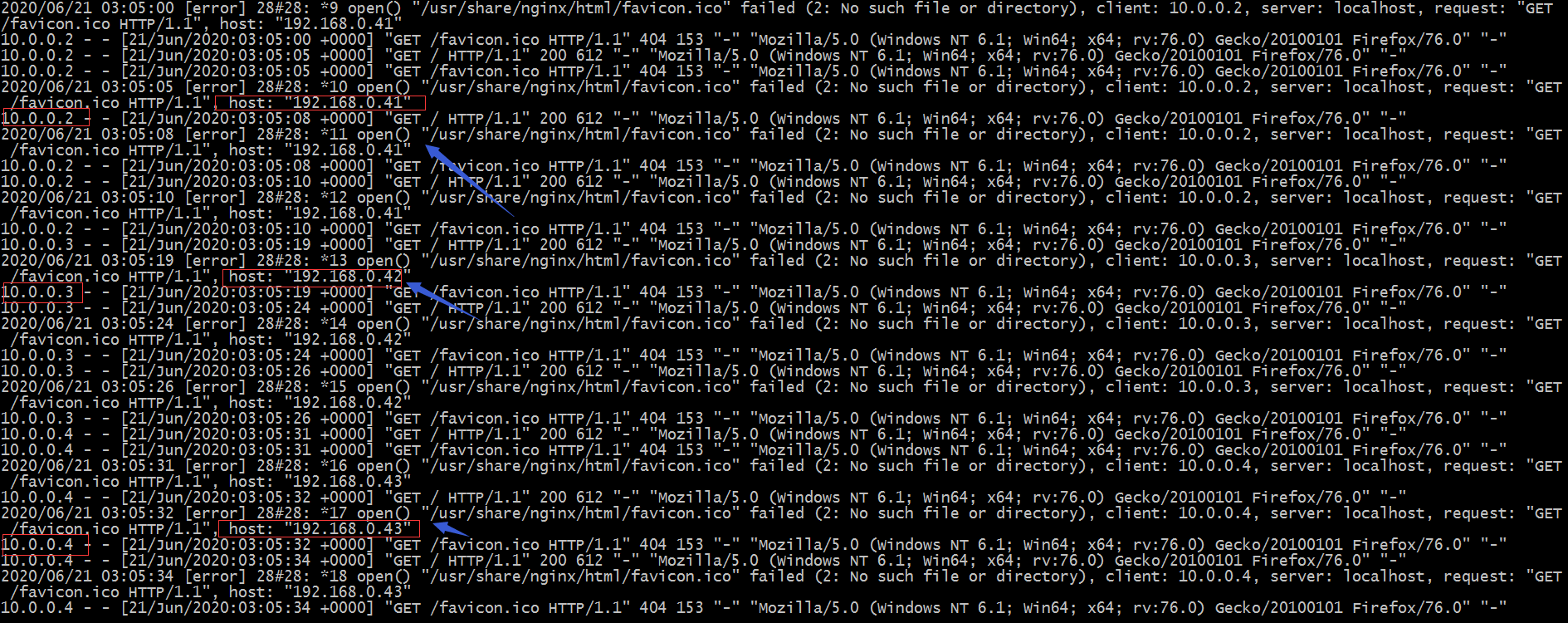

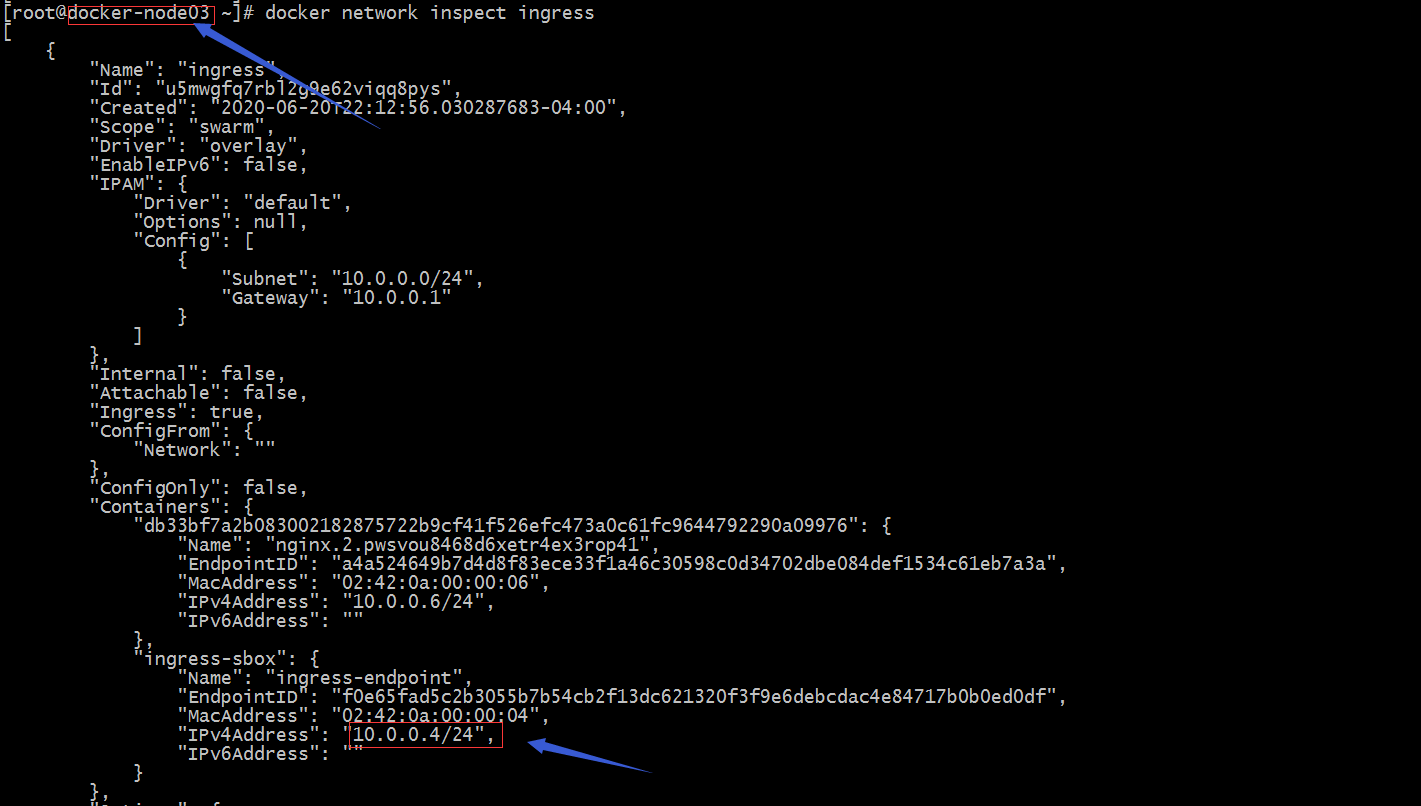

提示:我們在管理節點上訪問172.18.0.2在node2節點上看到的日誌是10.0.0.2的ip訪問到nginx服務;這是為什麼呢?其實原因就是在每個節點上都有一個ingress-sbox容器,該容器的地址就10.0.0.2;不同節點上的ingress-sbox的地址都不同,所以我們訪問不同節點地址,在nginx上看到地址也就不同;如下圖所示

提示:訪問不同的節點地址,在nginx日誌上記錄的IP各不相同

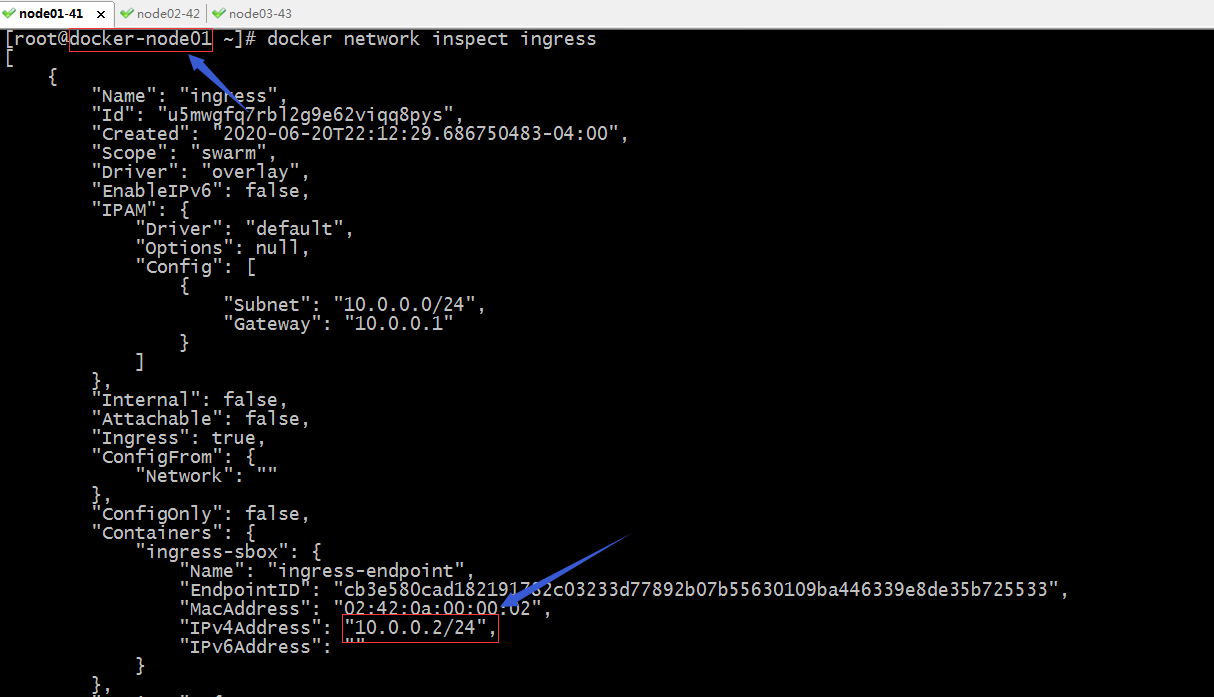

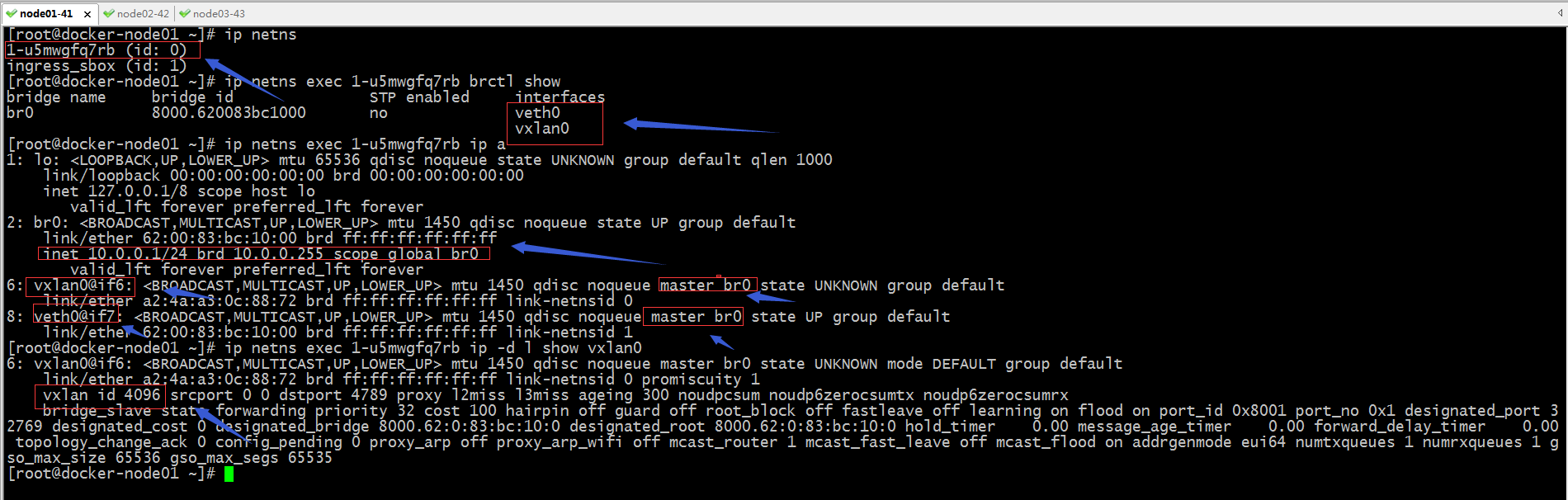

提示:從上面的截圖可以瞭解到每個節點的ingress-sbox容器的地址各不相同,但他們都把網關指向10.0.0.1,這意味著各個節點容器通信就可以基於這個網關來進行,從而實現了swarm集群上的容器間通信能夠基於ingress網路進行;現在還有一個問題就是172.18.0.0/18的網路是怎麼和10.0.0.0/24的網路通信的?

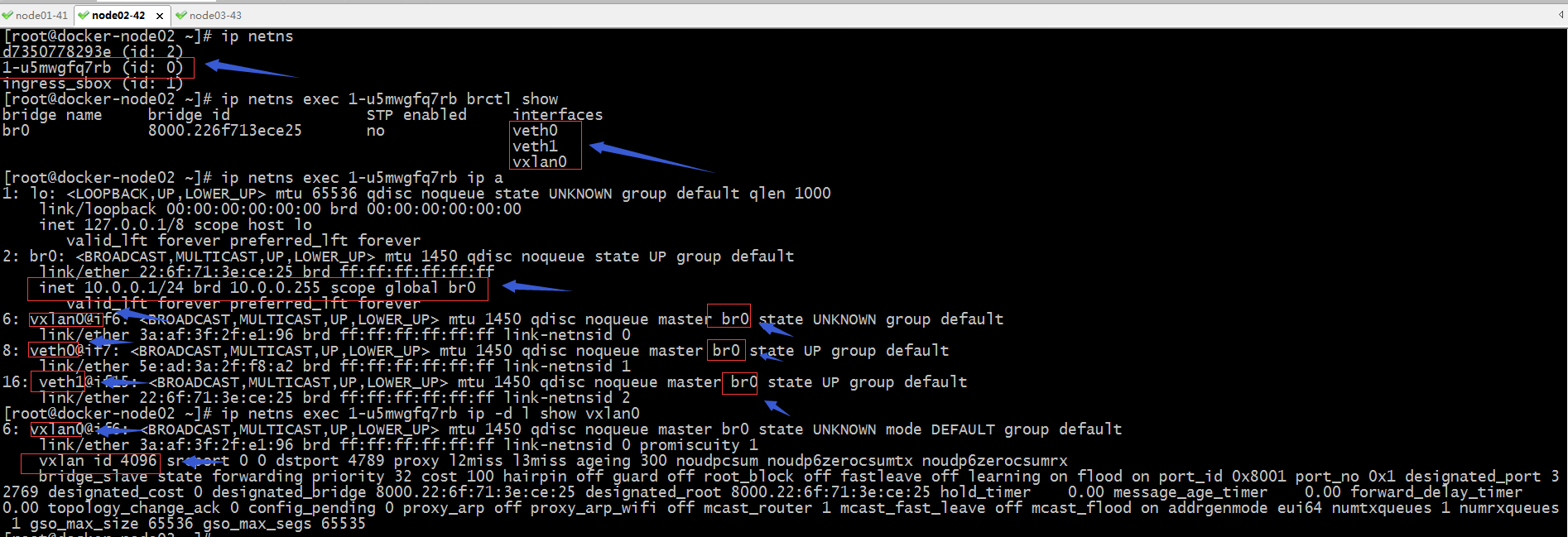

提示:從上面的截圖可以看到,在管理節點上有兩個網路名稱空間,一個id為0,而id為0的網路名稱空間中有veth0和vxlan0這兩個網卡;而veth0和vxlan0都是橋接到br0上的,br0的地址就是10.0.0.1/24;vxlan的vlan id為4096;結合上面nginx的日誌,不難想到

我們訪問管理節點上的80,通過iptables規則把流量轉發給docker-gwbridge網路上;現在我們還不清楚docker-gwbridge網路上那個名稱空間的網路,但是我們清楚知道在容器內部有兩張網卡,一張是eth0,一張是eth1,而eth1就是橋接到docker-gwbridge網路上,這也就意味著容docker-gwbridge網路的名稱空間和容器內部的eth1網路名稱空間相同;

提示:從上面的截圖看,1-u5mwgfq7rb這個名稱的網路名稱空間有三張網卡,分別是eth0,eth1和vxlan0,它們都是橋接在br0這個網卡上;而上面管理節點也在1-u5mwgfq7rb這個網路名稱空間,並且它們中的vxlan0的vlan id都是4096,這意味著管理節點上的vxlan0可以同node2上的vxlan0直接通信(相同網路名稱空間中的相同VLAN id是可以直接通信的),而vxlan0又是直接橋接到br0這塊網卡,所以我們在nginx日誌中能夠看到ingress-sbox容器的地址在訪問nginx;這其中的原因是ingress-sbox的網關就是br0;其實node3也是相同邏輯,不同節點上的容器間通信都是走vxlan0,與外部通信走eth1---->然後通過SNAT走docker-gwbridge---->物理網卡出去;

提示:一個容器上有兩個網路,一個是eth0 ingress網路,一個是eth1屬於docker-gwbridge網路,兩者都屬於同一容器中的網路名稱空間,所以我們訪問172.18.0.2就會通過ingress-sbox容器把源地址更改為docker-gwbridge上的ingress-sbox的地址,從而我們在看nginx日誌,就會看到10.0.0.2的地址;ingress-sbox容器作用我們可以理解為做SNAT的作用;

測試:訪問管理節點的80服務看看是否能夠訪問到nginx提供的頁面呢?

[root@docker-node02 ~]# docker ps CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES b829991d6966 docker-registry.io/test/nginx:latest "/docker-entrypoint.…" About an hour ago Up About an hour 80/tcp myweb.1.ilhkslrlnreyo6xx5j2h9isjb 8c2965fbdc27 docker-registry.io/test/nginx:latest "/docker-entrypoint.…" 2 hours ago Up 2 hours 80/tcp web.2.pthe8da2n45i06oee4n7h4krd b019d663e48e docker-registry.io/test/nginx:latest "/docker-entrypoint.…" 2 hours ago Up 2 hours 80/tcp web.3.w26gqpoyysgplm7qwhjbgisiv a7c1afd76f1f docker-registry.io/test/nginx:latest "/docker-entrypoint.…" 2 hours ago Up 2 hours 80/tcp web.1.ho0d7u3wensl0kah0ioz1lpk5 [root@docker-node02 ~]# docker exec -it myweb.1.ilhkslrlnreyo6xx5j2h9isjb bash root@b829991d6966:/# cd /usr/share/nginx/html/ root@b829991d6966:/usr/share/nginx/html# ls 50x.html index.html root@b829991d6966:/usr/share/nginx/html# echo "this is docker-node02 index page" >index.html root@b829991d6966:/usr/share/nginx/html# cat index.html this is docker-node02 index page root@b829991d6966:/usr/share/nginx/html#

提示:以上是在docker-node02節點上對運行的nginx容器的主頁進行了修改,接下我們訪問管理節點的80埠,看看是否能夠訪問得到work節點上的容器,它們會有什麼效果?是輪詢?還是一直訪問一個容器?

提示:可以看到我們訪問管理節點的80埠,會輪詢的訪問到work節點上的容器;用瀏覽器測試可能存在緩存的問題,我們可以用curl命令測試比較準確;如下

[root@docker-node03 ~]# docker ps CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES f43fdb9ec7fc docker-registry.io/test/nginx:latest "/docker-entrypoint.…" 2 hours ago Up 2 hours 80/tcp myweb.3.pgdjutofb5thlk02aj7387oj0 4470785f3d00 docker-registry.io/test/nginx:latest "/docker-entrypoint.…" 2 hours ago Up 2 hours 80/tcp myweb.2.uwxbe182qzq00qgfc7odcmx87 7493dcac95ba docker-registry.io/test/nginx:latest "/docker-entrypoint.…" 2 hours ago Up 2 hours 80/tcp web.4.rix50fhlmg6m9txw9urk66gvw 118880d300f4 docker-registry.io/test/nginx:latest "/docker-entrypoint.…" 2 hours ago Up 2 hours 80/tcp web.5.vo7c7vjgpf92b0ryelb7eque0 [root@docker-node03 ~]# docker exec -it myweb.2.uwxbe182qzq00qgfc7odcmx87 bash root@4470785f3d00:/# cd /usr/share/nginx/html/ root@4470785f3d00:/usr/share/nginx/html# echo "this is myweb.2 index page" > index.html root@4470785f3d00:/usr/share/nginx/html# cat index.html this is myweb.2 index page root@4470785f3d00:/usr/share/nginx/html# exit exit [root@docker-node03 ~]# docker exec -it myweb.3.pgdjutofb5thlk02aj7387oj0 bash root@f43fdb9ec7fc:/# cd /usr/share/nginx/html/ root@f43fdb9ec7fc:/usr/share/nginx/html# echo "this is myweb.3 index page" >index.html root@f43fdb9ec7fc:/usr/share/nginx/html# cat index.html this is myweb.3 index page root@f43fdb9ec7fc:/usr/share/nginx/html# exit exit [root@docker-node03 ~]#

提示:為了訪問方便看得出效果,我們把myweb.2和myweb.3的主頁都更改了內容

[root@docker-node01 ~]# for i in {1..10} ; do curl 192.168.0.41; done

this is myweb.3 index page

this is docker-node02 index page

this is myweb.2 index page

this is myweb.3 index page

this is docker-node02 index page

this is myweb.2 index page

this is myweb.3 index page

this is docker-node02 index page

this is myweb.2 index page

this is myweb.3 index page

[root@docker-node01 ~]#

提示:通過上面的測試,我們在使用--publish-add 暴露服務時,就相當於在管理節點創建了一個load balance;