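Recently I had a requirement to consolidate the data from all shops into an offline analysis system. Until now the data has been sharded into separate databases and tables per shop, so that each merchant could view their own shop's performance across multiple dimensions through highchart. Sharding by shop suits the current online business very well. This time the request came from the boss, who used to be a data analyst and is naturally fluent in SQL: build user profiles along the buyer dimension, so that we can do better data pushes and user behavior analysis. Since the analysis is offline, I have not looked into Spark, Impala, or Drill for now.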

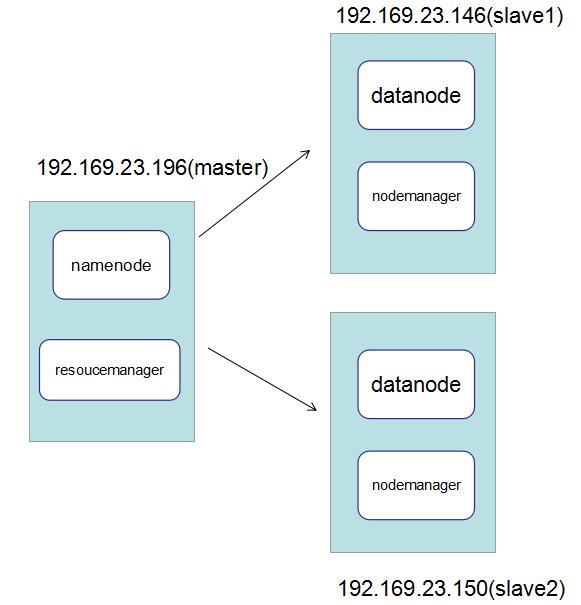

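I: Setting up the Hadoop cluster

Setting up Hadoop is a fairly tedious process. I used three CentOS machines. Without further ado, a picture is worth a thousand words.

II: Basic configuration

1. Turn off the firewall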

[root@localhost ~]# systemctl stop firewalld.service # stop the firewall

[root@localhost ~]# systemctl disable firewalld.service # do not start it at boot

[root@localhost ~]# firewall-cmd --state # check the firewall state

not running

[root@localhost ~]#

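2. Configure passwordless SSH login

Whether Hadoop is being started or stopped, its scripts reach the other nodes over ssh, so public-key (passwordless) login has to be set up. This is done by copying one CentOS machine's public key into the authorized_keys file on another.

<1>: Generate the key pair on 196. As the output below shows, ssh-keygen produces two files, id_rsa and id_rsa.pub. The one we care about here is the public key, id_rsa.pub.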

[root@localhost ~]# ssh-keygen -t rsa -P ''

Generating public/private rsa key pair.

Enter file in which to save the key (/root/.ssh/id_rsa):

Created directory '/root/.ssh'.

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

40:72:cc:f4:c3:e7:15:c9:9f:ee:f8:48:ec:22:be:a1 [email protected]

The key's randomart image is:

+--[ RSA 2048]----+

| .++ ... |

| +oo o. |

| . + . .. . |

| . + . o |

| S . . |

| . . |

| . oo |

| ....o... |

| E.oo .o.. |

+-----------------+

[root@localhost ~]# ls /root/.ssh/id_rsa

/root/.ssh/id_rsa

[root@localhost ~]# ls /root/.ssh

id_rsa id_rsa.pub

<2> Use scp to copy the public key to hosts 146 and 150.

[root@master ~]# scp /root/.ssh/id_rsa.pub root@192.168.23.146:/root/.ssh/authorized_keys

root@192.168.23.146's password:

id_rsa.pub 100% 408 0.4KB/s 00:00

[root@master ~]# scp /root/.ssh/id_rsa.pub root@192.168.23.150:/root/.ssh/authorized_keys

root@192.168.23.150's password:

id_rsa.pub 100% 408 0.4KB/s 00:00

[root@master ~]#
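One thing to watch with the scp approach: copying id_rsa.pub straight onto authorized_keys replaces whatever keys were already in that file. A minimal sketch of an append-only alternative is below. `append_key` is a hypothetical helper name, and the demo runs against a scratch directory rather than a real /root/.ssh.

```shell
# append_key PUBKEY_FILE AUTHORIZED_KEYS_FILE   (hypothetical helper)
# Appends the public key only when it is not already listed, so existing
# entries in authorized_keys are preserved.
append_key() {
  local pub="$1" auth="$2"
  mkdir -p "$(dirname "$auth")"
  touch "$auth"
  chmod 600 "$auth"
  # -x: match the whole line, -F: fixed string, -q: no output
  if ! grep -qxF -- "$(cat "$pub")" "$auth"; then
    cat "$pub" >> "$auth"
  fi
}

# Demo in a scratch directory (assumption: made-up keys, not a real ~/.ssh).
demo_dir="$(mktemp -d)"
echo "ssh-rsa AAAAexample root@master" > "$demo_dir/id_rsa.pub"
echo "ssh-rsa AAAAother someone@else" > "$demo_dir/authorized_keys"
append_key "$demo_dir/id_rsa.pub" "$demo_dir/authorized_keys"
append_key "$demo_dir/id_rsa.pub" "$demo_dir/authorized_keys"   # second call changes nothing
cat "$demo_dir/authorized_keys"
```

On a real host, `ssh-copy-id root@192.168.23.146` from the OpenSSH client tools performs a similar append over the network.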

<3> Set up host mappings, mainly to give the machines aliases that are easier to manage.

[root@master ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.23.196 master

192.168.23.150 slave1

192.168.23.146 slave2

[root@master ~]#

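<4> Install the Java environment

Hadoop is written in Java, so a Java environment is required. You can search online for the installation details. Uninstall the OpenJDK that ships with CentOS first, and finish by configuring the paths in /etc/profile.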

[root@master ~]# cat /etc/profile

# /etc/profile

# System wide environment and startup programs, for login setup
# Functions and aliases go in /etc/bashrc

# It's NOT a good idea to change this file unless you know what you
# are doing. It's much better to create a custom.sh shell script in
# /etc/profile.d/ to make custom changes to your environment, as this
# will prevent the need for merging in future updates.

pathmunge () {
    case ":${PATH}:" in
        *:"$1":*)
            ;;
        *)
            if [ "$2" = "after" ] ; then
                PATH=$PATH:$1
            else
                PATH=$1:$PATH
            fi
    esac
}

if [ -x /usr/bin/id ]; then
    if [ -z "$EUID" ]; then
        # ksh workaround
        EUID=`id -u`
        UID=`id -ru`
    fi
    USER="`id -un`"
    LOGNAME=$USER
    MAIL="/var/spool/mail/$USER"
fi

# Path manipulation
if [ "$EUID" = "0" ]; then
    pathmunge /usr/sbin
    pathmunge /usr/local/sbin
else
    pathmunge /usr/local/sbin after
    pathmunge /usr/sbin after
fi

HOSTNAME=`/usr/bin/hostname 2>/dev/null`
HISTSIZE=1000
if [ "$HISTCONTROL" = "ignorespace" ] ; then
    export HISTCONTROL=ignoreboth
else
    export HISTCONTROL=ignoredups
fi

export PATH USER LOGNAME MAIL HOSTNAME HISTSIZE HISTCONTROL

# By default, we want umask to get set. This sets it for login shell
# Current threshold for system reserved uid/gids is 200
# You could check uidgid reservation validity in
# /usr/share/doc/setup-*/uidgid file
if [ $UID -gt 199 ] && [ "`id -gn`" = "`id -un`" ]; then
    umask 002
else
    umask 022
fi

for i in /etc/profile.d/*.sh ; do
    if [ -r "$i" ]; then
        if [ "${-#*i}" != "$-" ]; then
            . "$i"
        else
            . "$i" >/dev/null
        fi
    fi
done

unset i
unset -f pathmunge

export JAVA_HOME=/usr/big/jdk1.8
export HADOOP_HOME=/usr/big/hadoop
export PATH=$JAVA_HOME/bin:$JAVA_HOME/jre/bin:$HADOOP_HOME/sbin:$HADOOP_HOME/bin:$PATH

[root@master ~]#
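The stock comment at the top of /etc/profile itself suggests putting custom settings in a script under /etc/profile.d/ instead of editing the file. A minimal sketch of that variant follows. The file name `hadoop.sh` and the `PROFILE_D` variable are assumptions for illustration, and by default the script writes to a scratch directory so it can be tried without root.

```shell
# Target directory: set PROFILE_D=/etc/profile.d (as root) on a real node.
PROFILE_D="${PROFILE_D:-$(mktemp -d)}"

# The quoted heredoc delimiter keeps $JAVA_HOME etc. unexpanded in the file.
cat > "$PROFILE_D/hadoop.sh" <<'EOF'
export JAVA_HOME=/usr/big/jdk1.8
export HADOOP_HOME=/usr/big/hadoop
export PATH=$JAVA_HOME/bin:$JAVA_HOME/jre/bin:$HADOOP_HOME/sbin:$HADOOP_HOME/bin:$PATH
EOF

# Load it into the current shell and show the result.
. "$PROFILE_D/hadoop.sh"
echo "JAVA_HOME=$JAVA_HOME HADOOP_HOME=$HADOOP_HOME"
```

The loop near the end of /etc/profile sources every readable `*.sh` file in /etc/profile.d/, which is why no further change to /etc/profile is needed with this layout.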

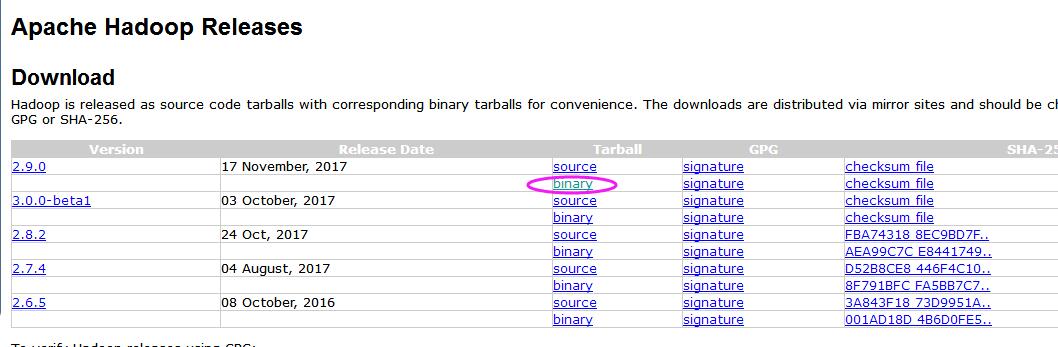

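II: The Hadoop package

1. The download links are on the official site: http://hadoop.apache.org/releases.html. I picked 2.9.0, the latest release at the time of writing, as a binary install.

2. The rest is a series of commands [pay attention to the directories, and mkdir any that are missing]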

[root@localhost big]# pwd

/usr/big

[root@localhost big]# ls

hadoop-2.9.0 hadoop-2.9.0.tar.gz

[root@localhost big]# tar -xvzf hadoop-2.9.0.tar.gz
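The archive unpacks to hadoop-2.9.0, while the rest of this post refers to /usr/big/hadoop. The post does not show how the two were reconciled, so the following is only one possible way: a symlink (a plain rename with mv would work too). `BIG` is an assumed variable that stands in for /usr/big and defaults to a scratch directory.

```shell
# BIG stands in for /usr/big; it defaults to a scratch directory for a dry run.
BIG="${BIG:-$(mktemp -d)}"

# Stand-in for the directory that tar would have created.
mkdir -p "$BIG/hadoop-2.9.0/etc/hadoop"

# -s: symbolic link, -f: replace an existing link, -n: do not descend into it.
ln -sfn "$BIG/hadoop-2.9.0" "$BIG/hadoop"
ls -ld "$BIG/hadoop"
```

A symlink has the side benefit that a later upgrade only needs the link repointed, while HADOOP_HOME stays the same.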

3. Configure core-site.xml, hdfs-site.xml, mapred-site.xml, yarn-site.xml, slaves, and hadoop-env.sh. They all live under the etc/hadoop directory, and this is the most tedious part.

[root@master hadoop]# pwd

/usr/big/hadoop/etc/hadoop

[root@master hadoop]# ls

capacity-scheduler.xml hadoop-policy.xml kms-log4j.properties slaves

configuration.xsl hdfs-site.xml kms-site.xml ssl-client.xml.example

container-executor.cfg httpfs-env.sh log4j.properties ssl-server.xml.example

core-site.xml httpfs-log4j.properties mapred-env.cmd yarn-env.cmd

hadoop-env.cmd httpfs-signature.secret mapred-env.sh yarn-env.sh

hadoop-env.sh httpfs-site.xml mapred-queues.xml.template yarn-site.xml

hadoop-metrics2.properties kms-acls.xml mapred-site.xml

hadoop-metrics.properties kms-env.sh mapred-site.xml.template

[root@master hadoop]#

<1> In core-site.xml I set Hadoop's base directory (hadoop.tmp.dir), the namenode's address and port (fs.default.name), and the namenode's storage directory (dfs.name.dir).

<?xml version="1.0" encoding="UTF-8"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<!--
  Licensed under the Apache License, Version 2.0 (the "License");
  you may not use this file except in compliance with the License.
  You may obtain a copy of the License at

    http://www.apache.org/licenses/LICENSE-2.0

  Unless required by applicable law or agreed to in writing, software
  distributed under the License is distributed on an "AS IS" BASIS,
  WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
  See the License for the specific language governing permissions and
  limitations under the License. See accompanying LICENSE file.
-->

<!-- Put site-specific property overrides in this file. -->

<configuration>
    <property>
        <name>hadoop.tmp.dir</name>
        <value>/usr/hadoop</value>
        <description>A base for other temporary directories.</description>
    </property>
    <!-- file system properties -->
    <property>
        <name>fs.default.name</name>
        <value>hdfs://192.168.23.196:9000</value>
    </property>
    <property>
        <name>dfs.name.dir</name>
        <value>/usr/hadoop/namenode</value>
        <description>A base for other temporary directories.</description>
    </property>
</configuration>
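With this many hand-edited files, it helps to read a value back and confirm that it is what you meant to set. The sketch below is a rough lookup, not a real XML parser: `get_prop` is a hypothetical helper that assumes each `<value>` directly follows its `<name>`, and the sample file is a trimmed copy of the core-site.xml above.

```shell
# get_prop FILE NAME   (hypothetical helper)
# Joins the file into one line, then captures the <value> that directly
# follows the requested <name>. Prints nothing if the name is absent.
get_prop() {
  tr -d '\n' < "$1" |
    sed -n "s:.*<name>$2</name>[[:space:]]*<value>\([^<]*\)</value>.*:\1:p"
}

# Trimmed copy of the core-site.xml shown above.
conf="$(mktemp)"
cat > "$conf" <<'EOF'
<configuration>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/usr/hadoop</value>
  </property>
  <property>
    <name>fs.default.name</name>
    <value>hdfs://192.168.23.196:9000</value>
  </property>
</configuration>
EOF

get_prop "$conf" fs.default.name
get_prop "$conf" hadoop.tmp.dir
```

On a node where Hadoop is already on the PATH, `hdfs getconf -confKey fs.default.name` reports the value Hadoop itself resolves, which is the more reliable check.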

<2> hdfs-site.xml mainly sets where the datanode stores its data and how many replicas HDFS keeps of each block.

<?xml version="1.0" encoding="UTF-8"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<!--
  Licensed under the Apache License, Version 2.0 (the "License");
  you may not use this file except in compliance with the License.
  You may obtain a copy of the License at

    http://www.apache.org/licenses/LICENSE-2.0

  Unless required by applicable law or agreed to in writing, software
  distributed under the License is distributed on an "AS IS" BASIS,
  WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
  See the License for the specific language governing permissions and
  limitations under the License. See accompanying LICENSE file.
-->

<!-- Put site-specific property overrides in this file. -->

<configuration>
    <property>
        <name>dfs.replication</name>
        <value>2</value>
    </property>
    <property>
        <name>dfs.data.dir</name>
        <value>/usr/hadoop/datanode</value>
    </property>
</configuration>

3. mapred-site.xml: configure the jobtracker port here. Note that this file does not set mapreduce.framework.name, which therefore keeps its default value of local. That is why the wordcount job later in this post runs through LocalJobRunner instead of being submitted to YARN.

<?xml version="1.0"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<!--
  Licensed under the Apache License, Version 2.0 (the "License");
  you may not use this file except in compliance with the License.
  You may obtain a copy of the License at

    http://www.apache.org/licenses/LICENSE-2.0

  Unless required by applicable law or agreed to in writing, software
  distributed under the License is distributed on an "AS IS" BASIS,
  WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
  See the License for the specific language governing permissions and
  limitations under the License. See accompanying LICENSE file.
-->

<!-- Put site-specific property overrides in this file. -->

<configuration>
    <property>
        <name>mapreduce.job.tracker</name>
        <value>hdfs://192.168.23.196:8001</value>
        <final>true</final>
    </property>
</configuration>

4. Configure yarn-site.xml

<configuration>

<!-- Site specific YARN configuration properties -->
    <property>
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
    </property>
    <property>
        <name>yarn.nodemanager.aux-services.mapreduce.shuffle.class</name>
        <value>org.apache.hadoop.mapred.ShuffleHandler</value>
    </property>
    <property>
        <name>yarn.nodemanager.resource.memory-mb</name>
        <value>20480</value>
    </property>
    <property>
        <name>yarn.scheduler.minimum-allocation-mb</name>
        <value>2048</value>
    </property>
    <property>
        <name>yarn.nodemanager.vmem-pmem-ratio</name>
        <value>2.1</value>
    </property>
</configuration>
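To get a feel for what the three memory values imply, here is some back-of-the-envelope arithmetic. It assumes every container is requested at the minimum allocation size and ignores CPU limits, so read the results as rough upper bounds, not as what the scheduler will actually do.

```shell
node_mb=20480      # yarn.nodemanager.resource.memory-mb
min_alloc_mb=2048  # yarn.scheduler.minimum-allocation-mb
vmem_ratio=2.1     # yarn.nodemanager.vmem-pmem-ratio

# Upper bound on minimum-sized containers per NodeManager (integer division).
echo "max containers per node: $(( node_mb / min_alloc_mb ))"
# prints: max containers per node: 10

# Virtual-memory ceiling for one minimum-sized container; awk does the float math.
awk -v m="$min_alloc_mb" -v r="$vmem_ratio" \
  'BEGIN { printf "vmem limit per container: %.1f MB\n", m * r }'
# prints: vmem limit per container: 4300.8 MB
```

If the machines have less than 20 GB of RAM to spare for containers, the first value is the one to lower.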

5. In the slaves file under etc/hadoop, add the slave1 and slave2 names configured in /etc/hosts, so that Hadoop knows where the slaves are when it starts.

[root@master hadoop]# cat slaves

slave1

slave2

[root@master hadoop]# pwd

/usr/big/hadoop/etc/hadoop

[root@master hadoop]#

6. Set the Java path in hadoop-env.sh. This simply means copying the JAVA_HOME line from /etc/profile and appending it to the end of the file.

[root@master hadoop]# vim hadoop-env.sh

export JAVA_HOME=/usr/big/jdk1.8

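There is one more pitfall here: the default heap size in hadoop-env.sh is 512M, which can easily cause out-of-memory errors during large computations, so I changed 512 to 2048.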

export HADOOP_NFS3_OPTS="$HADOOP_NFS3_OPTS"

export HADOOP_PORTMAP_OPTS="-Xmx2048m $HADOOP_PORTMAP_OPTS"

# The following applies to multiple commands (fs, dfs, fsck, distcp etc)

export HADOOP_CLIENT_OPTS="$HADOOP_CLIENT_OPTS"

# set heap args when HADOOP_HEAPSIZE is empty

if [ "$HADOOP_HEAPSIZE" = "" ]; then

export HADOOP_CLIENT_OPTS="-Xmx2048m $HADOOP_CLIENT_OPTS"

fi
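The same edit can be scripted so that it is repeatable on every node. The sketch below assumes the stock file carries the literal `-Xmx512m` on the two lines shown above, as the post describes, and works on a scratch copy instead of the real hadoop-env.sh.

```shell
# Scratch copy with the two stock lines (assumption: stock value is -Xmx512m).
env_file="$(mktemp)"
cat > "$env_file" <<'EOF'
export HADOOP_PORTMAP_OPTS="-Xmx512m $HADOOP_PORTMAP_OPTS"
export HADOOP_CLIENT_OPTS="-Xmx512m $HADOOP_CLIENT_OPTS"
EOF

# Write the edited content to a new file and swap it in. This avoids `sed -i`,
# whose syntax differs between GNU and BSD sed.
sed 's/-Xmx512m/-Xmx2048m/g' "$env_file" > "$env_file.new" && mv "$env_file.new" "$env_file"

cat "$env_file"
```

To apply it for real, point `env_file` at /usr/big/hadoop/etc/hadoop/hadoop-env.sh and keep a backup copy first.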

7. Don't forget to create the following directories under /usr, then set the Hadoop paths in /etc/profile.

/usr/hadoop

/usr/hadoop/namenode

/usr/hadoop/datanode

export JAVA_HOME=/usr/big/jdk1.8

export HADOOP_HOME=/usr/big/hadoop

export PATH=$JAVA_HOME/bin:$JAVA_HOME/jre/bin:$HADOOP_HOME/sbin:$HADOOP_HOME/bin:$PATH
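The three directories can be created in one go with `mkdir -p`, which also succeeds when they already exist. In this sketch `ROOT` is an assumed prefix variable that defaults to a scratch directory. Exporting it as an empty string creates the real /usr/hadoop tree, which needs root.

```shell
# ROOT is a scratch prefix for a dry run; `export ROOT=` targets the real paths.
ROOT="${ROOT-$(mktemp -d)}"

# -p creates missing parents and does not fail if the directory exists.
mkdir -p "$ROOT/usr/hadoop/namenode" "$ROOT/usr/hadoop/datanode"

find "$ROOT/usr/hadoop" -type d | sort
```

The directories are needed on every node that will hold them, so the same command has to be run on the slaves too.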

8. Copy the whole configured hadoop folder from 196 into /usr/big on servers 146 and 150 with scp. Later on you could also manage the hadoop folder with svn, which is more convenient.

scp -r /usr/big/hadoop root@192.168.23.146:/usr/big

scp -r /usr/big/hadoop root@192.168.23.150:/usr/big
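With more than a couple of workers, the copy commands can be generated from the slaves file instead of typed by hand. The sketch below only prints the commands. `plan_copy` is a hypothetical helper, and it uses the host aliases from /etc/hosts where the commands above use IP addresses.

```shell
# plan_copy SLAVES_FILE   (hypothetical helper)
# Prints one scp command per host listed in the file; nothing is executed.
plan_copy() {
  while IFS= read -r host || [ -n "$host" ]; do
    [ -n "$host" ] || continue        # skip blank lines
    echo "scp -r /usr/big/hadoop root@${host}:/usr/big"
  done < "$1"
}

# Scratch copy of the slaves file from step 5.
slaves_file="$(mktemp)"
printf 'slave1\nslave2\n' > "$slaves_file"

plan_copy "$slaves_file"
```

Once the printed plan looks right, piping it to a shell (`plan_copy "$slaves_file" | sh`) runs the copies.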

III: Starting Hadoop

1. Before starting, format HDFS with hadoop namenode -format.

[root@master hadoop]# hadoop namenode -format

DEPRECATED: Use of this script to execute hdfs command is deprecated.

Instead use the hdfs command for it.

17/11/24 20:13:19 INFO namenode.NameNode: STARTUP_MSG:

/************************************************************

STARTUP_MSG: Starting NameNode

STARTUP_MSG: host = master/192.168.23.196

STARTUP_MSG: args = [-format]

STARTUP_MSG: version = 2.9.0
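Formatting wipes the namenode's metadata, so it should happen once, not on every start. The sketch below guards against a repeat by checking for the `current/VERSION` file that a formatted name directory contains. `name_dir_formatted` and `NAME_DIR` are hypothetical names, and the command uses the `hdfs` form that the deprecation notice above recommends.

```shell
# name_dir_formatted DIR   (hypothetical helper)
# Succeeds if DIR looks like an already formatted namenode directory.
name_dir_formatted() {
  [ -f "$1/current/VERSION" ]
}

# Default taken from dfs.name.dir in core-site.xml above.
NAME_DIR="${NAME_DIR:-/usr/hadoop/namenode}"

if name_dir_formatted "$NAME_DIR"; then
  echo "$NAME_DIR is already formatted; skipping"
elif command -v hdfs >/dev/null 2>&1; then
  hdfs namenode -format
else
  echo "hdfs not on PATH; would run: hdfs namenode -format"
fi
```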

2. Run start-all.sh on the master machine to start the Hadoop cluster.

[root@master hadoop]# start-all.sh

This script is Deprecated. Instead use start-dfs.sh and start-yarn.sh

Starting namenodes on [master]

root@master's password:

master: starting namenode, logging to /usr/big/hadoop/logs/hadoop-root-namenode-master.out

slave1: starting datanode, logging to /usr/big/hadoop/logs/hadoop-root-datanode-slave1.out

slave2: starting datanode, logging to /usr/big/hadoop/logs/hadoop-root-datanode-slave2.out

Starting secondary namenodes [0.0.0.0]

root@0.0.0.0's password:

0.0.0.0: starting secondarynamenode, logging to /usr/big/hadoop/logs/hadoop-root-secondarynamenode-master.out

starting yarn daemons

starting resourcemanager, logging to /usr/big/hadoop/logs/yarn-root-resourcemanager-master.out

slave1: starting nodemanager, logging to /usr/big/hadoop/logs/yarn-root-nodemanager-slave1.out

slave2: starting nodemanager, logging to /usr/big/hadoop/logs/yarn-root-nodemanager-slave2.out

[root@master hadoop]# jps

8851 NameNode

9395 ResourceManager

9655 Jps

9146 SecondaryNameNode

[root@master hadoop]#

The jps output shows that NameNode and ResourceManager are running on master. Next, check on slave1 and slave2 that NodeManager and DataNode have started as well.

[root@slave1 hadoop]# jps

7112 NodeManager

7354 Jps

6892 DataNode

[root@slave1 hadoop]#

[root@slave2 hadoop]# jps

7553 NodeManager

7803 Jps

7340 DataNode

[root@slave2 hadoop]#
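Checking jps by eye gets old after a few restarts. The sketch below takes jps output on stdin and reports any expected daemon that is absent. `missing_daemons` is a hypothetical helper, and the sample input is copied from the slave1 listing above.

```shell
# missing_daemons "Name1 Name2 ..."   (hypothetical helper)
# Reads `jps` output on stdin and prints each expected daemon that is absent.
# Exit status 0 means every expected daemon was found.
missing_daemons() {
  local expected="$1" out name rc=0
  out="$(cat)"
  for name in $expected; do
    # jps prints "<pid> <ClassName>"; compare the second column exactly, so
    # that SecondaryNameNode is not mistaken for NameNode.
    if ! printf '%s\n' "$out" |
        awk -v n="$name" '$2 == n { found = 1 } END { exit !found }'; then
      echo "missing: $name"
      rc=1
    fi
  done
  return $rc
}

# Sample taken from the slave1 listing above.
printf '7112 NodeManager\n7354 Jps\n6892 DataNode\n' |
  missing_daemons "DataNode NodeManager" && echo "all expected daemons found"
```

On the master the equivalent call would be `jps | missing_daemons "NameNode SecondaryNameNode ResourceManager"`.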

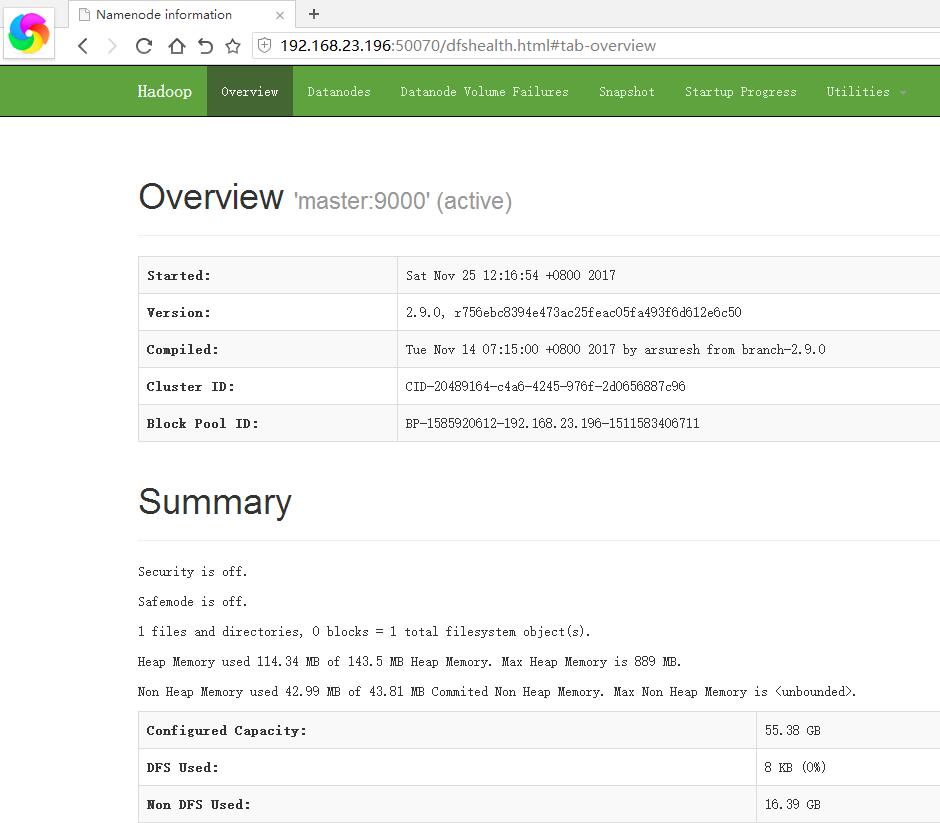

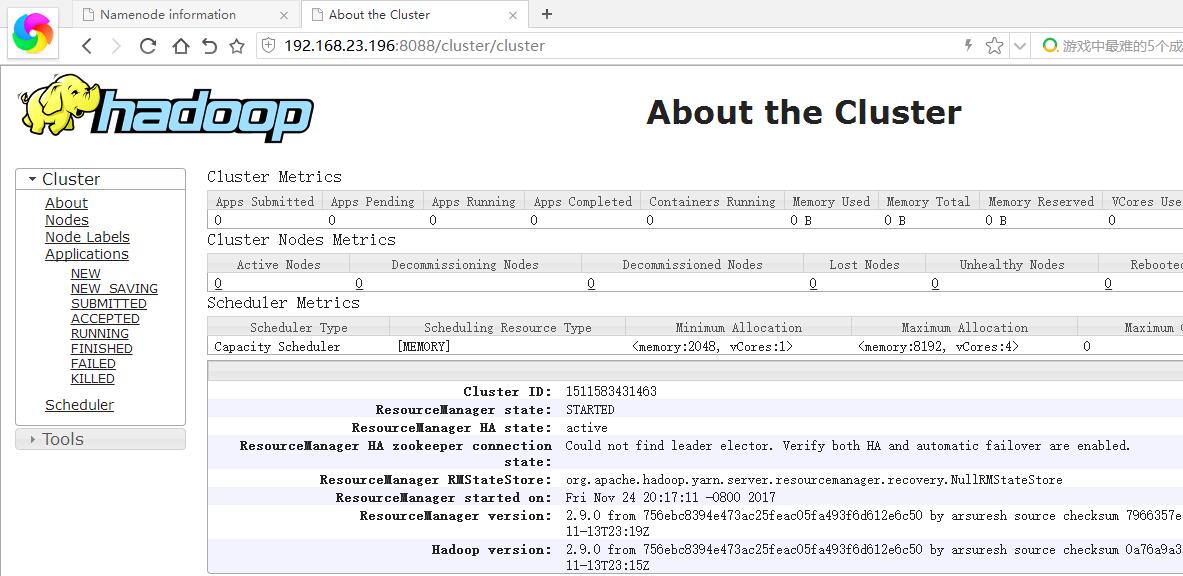

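IV: Setup complete, checking the result

With the netstat -tlnp command below you can see that ports 50070 and 8088 are open. Port 50070 serves the NameNode web UI, where the state of HDFS and its datanodes can be viewed. Port 8088 serves the YARN ResourceManager web UI, which lists submitted applications such as MapReduce jobs.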

[root@master hadoop]# netstat -tlnp
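The two ports can also be probed from a script, for instance from another machine. The sketch below relies on bash's `/dev/tcp` redirection and the coreutils `timeout` command. `port_open` is a hypothetical helper and `MASTER` an assumed variable that defaults to the local host.

```shell
# port_open HOST PORT   (hypothetical helper)
# Succeeds if a TCP connection to HOST:PORT can be opened within 2 seconds.
port_open() {
  timeout 2 bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$1" "$2" 2>/dev/null
}

# 50070: NameNode web UI, 8088: ResourceManager web UI.
for port in 50070 8088; do
  if port_open "${MASTER:-127.0.0.1}" "$port"; then
    echo "port $port: open"
  else
    echo "port $port: closed"
  fi
done
```

Run with `MASTER=192.168.23.196` from a slave, it also confirms that the firewall change from the first step took effect.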

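V: Wrapping up with Hadoop's bundled wordcount

Under Hadoop's share directory there is a wordcount test program that counts how often each word occurs: hadoop/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.9.0.jar.

1. Under /usr/soft I generated a 39M file, 2.txt, with a program (it is all random Chinese characters).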

[root@master soft]# ls -lsh 2.txt

39M -rw-r--r--. 1 root root 39M Nov 24 00:32 2.txt

[root@master soft]#

2. Create an input folder in HDFS, then upload 2.txt to it.

[root@master soft]# hadoop fs -mkdir /input

[root@master soft]# hadoop fs -put /usr/soft/2.txt /input

[root@master soft]# hadoop fs -ls /

Found 1 items

drwxr-xr-x - root supergroup 0 2017-11-24 20:30 /input

3. Run the wordcount MapReduce job.

[root@master soft]# hadoop jar /usr/big/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.9.0.jar wordcount /input/2.txt /output/v1

17/11/24 20:32:21 INFO Configuration.deprecation: session.id is deprecated. Instead, use dfs.metrics.session-id

17/11/24 20:32:21 INFO jvm.JvmMetrics: Initializing JVM Metrics with processName=JobTracker, sessionId=

17/11/24 20:32:21 INFO input.FileInputFormat: Total input files to process : 1

17/11/24 20:32:21 INFO mapreduce.JobSubmitter: number of splits:1

17/11/24 20:32:21 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_local1430356259_0001

17/11/24 20:32:22 INFO mapreduce.Job: The url to track the job: http://localhost:8080/

17/11/24 20:32:22 INFO mapreduce.Job: Running job: job_local1430356259_0001

17/11/24 20:32:22 INFO mapred.LocalJobRunner: OutputCommitter set in config null

17/11/24 20:32:22 INFO output.FileOutputCommitter: File Output Committer Algorithm version is 1

17/11/24 20:32:22 INFO output.FileOutputCommitter: FileOutputCommitter skip cleanup _temporary folders under output directory:false, ignore cleanup failures: false

17/11/24 20:32:22 INFO mapred.LocalJobRunner: OutputCommitter is org.apache.hadoop.mapreduce.lib.output.FileOutputCommitter

17/11/24 20:32:22 INFO mapred.LocalJobRunner: Waiting for map tasks

17/11/24 20:32:22 INFO mapred.LocalJobRunner: Starting task: attempt_local1430356259_0001_m_000000_0

17/11/24 20:32:22 INFO output.FileOutputCommitter: File Output Committer Algorithm version is 1

17/11/24 20:32:22 INFO output.FileOutputCommitter: FileOutputCommitter skip cleanup _temporary folders under output directory:false, ignore cleanup failures: false

17/11/24 20:32:22 INFO mapred.Task: Using ResourceCalculatorProcessTree : [ ]

17/11/24 20:32:22 INFO mapred.MapTask: Processing split: hdfs://192.168.23.196:9000/input/2.txt:0+40000002

17/11/24 20:32:22 INFO mapred.MapTask: (EQUATOR) 0 kvi 26214396(104857584)

17/11/24 20:32:22 INFO mapred.MapTask: mapreduce.task.io.sort.mb: 100

17/11/24 20:32:22 INFO mapred.MapTask: soft limit at 83886080

17/11/24 20:32:22 INFO mapred.MapTask: bufstart = 0; bufvoid = 104857600

17/11/24 20:32:22 INFO mapred.MapTask: kvstart = 26214396; length = 6553600

17/11/24 20:32:22 INFO mapred.MapTask: Map output collector class = org.apache.hadoop.mapred.MapTask$MapOutputBuffer

17/11/24 20:32:23 INFO mapreduce.Job: Job job_local1430356259_0001 running in uber mode : false

17/11/24 20:32:23 INFO mapreduce.Job: map 0% reduce 0%

17/11/24 20:32:23 INFO input.LineRecordReader: Found UTF-8 BOM and skipped it

17/11/24 20:32:27 INFO mapred.MapTask: Spilling map output

17/11/24 20:32:27 INFO mapred.MapTask: bufstart = 0; bufend = 27962024; bufvoid = 104857600

17/11/24 20:32:27 INFO mapred.MapTask: kvstart = 26214396(104857584); kvend = 12233388(48933552); length = 13981009/6553600

17/11/24 20:32:27 INFO mapred.MapTask: (EQUATOR) 38447780 kvi 9611940(38447760)

17/11/24 20:32:32 INFO mapred.MapTask: Finished spill 0

17/11/24 20:32:32 INFO mapred.MapTask: (RESET) equator 38447780 kv 9611940(38447760) kvi 6990512(27962048)

17/11/24 20:32:33 INFO mapred.MapTask: Spilling map output

17/11/24 20:32:33 INFO mapred.MapTask: bufstart = 38447780; bufend = 66409804; bufvoid = 104857600

17/11/24 20:32:33 INFO mapred.MapTask: kvstart = 9611940(38447760); kvend = 21845332(87381328); length = 13981009/6553600

17/11/24 20:32:33 INFO mapred.MapTask: (EQUATOR) 76895558 kvi 19223884(76895536)

17/11/24 20:32:34 INFO mapred.LocalJobRunner: map > map

17/11/24 20:32:34 INFO mapreduce.Job: map 67% reduce 0%

17/11/24 20:32:38 INFO mapred.MapTask: Finished spill 1

17/11/24 20:32:38 INFO mapred.MapTask: (RESET) equator 76895558 kv 19223884(76895536) kvi 16602456(66409824)

17/11/24 20:32:39 INFO mapred.LocalJobRunner: map > map

17/11/24 20:32:39 INFO mapred.MapTask: Starting flush of map output

17/11/24 20:32:39 INFO mapred.MapTask: Spilling map output

17/11/24 20:32:39 INFO mapred.MapTask: bufstart = 76895558; bufend = 100971510; bufvoid = 104857600

17/11/24 20:32:39 INFO mapred.MapTask: kvstart = 19223884(76895536); kvend = 7185912(28743648); length = 12037973/6553600

17/11/24 20:32:40 INFO mapred.LocalJobRunner: map > sort

17/11/24 20:32:43 INFO mapred.MapTask: Finished spill 2

17/11/24 20:32:43 INFO mapred.Merger: Merging 3 sorted segments

17/11/24 20:32:43 INFO mapred.Merger: Down to the last merge-pass, with 3 segments left of total size: 180000 bytes

17/11/24 20:32:43 INFO mapred.Task: Task:attempt_local1430356259_0001_m_000000_0 is done. And is in the process of committing

17/11/24 20:32:43 INFO mapred.LocalJobRunner: map > sort

17/11/24 20:32:43 INFO mapred.Task: Task 'attempt_local1430356259_0001_m_000000_0' done.

17/11/24 20:32:43 INFO mapred.LocalJobRunner: Finishing task: attempt_local1430356259_0001_m_000000_0

17/11/24 20:32:43 INFO mapred.LocalJobRunner: map task executor complete.

17/11/24 20:32:43 INFO mapred.LocalJobRunner: Waiting for reduce tasks

17/11/24 20:32:43 INFO mapred.LocalJobRunner: Starting task: attempt_local1430356259_0001_r_000000_0

17/11/24 20:32:43 INFO output.FileOutputCommitter: File Output Committer Algorithm version is 1

17/11/24 20:32:43 INFO output.FileOutputCommitter: FileOutputCommitter skip cleanup _temporary folders under output directory:false, ignore cleanup failures: false

17/11/24 20:32:43 INFO mapred.Task: Using ResourceCalculatorProcessTree : [ ]

17/11/24 20:32:43 INFO mapred.ReduceTask: Using ShuffleConsumerPlugin: org.apache.hadoop.mapreduce.task.reduce.Shuffle@f8eab6f

17/11/24 20:32:43 INFO mapreduce.Job: map 100% reduce 0%

17/11/24 20:32:43 INFO reduce.MergeManagerImpl: MergerManager: memoryLimit=1336252800, maxSingleShuffleLimit=334063200, mergeThreshold=881926912, ioSortFactor=10, memToMemMergeOutputsThreshold=10

17/11/24 20:32:43 INFO reduce.EventFetcher: attempt_local1430356259_0001_r_000000_0 Thread started: EventFetcher for fetching Map Completion Events

17/11/24 20:32:43 INFO reduce.LocalFetcher: localfetcher#1 about to shuffle output of map attempt_local1430356259_0001_m_000000_0 decomp: 60002 len: 60006 to MEMORY

17/11/24 20:32:43 INFO reduce.InMemoryMapOutput: Read 60002 bytes from map-output for attempt_local1430356259_0001_m_000000_0

17/11/24 20:32:43 INFO reduce.MergeManagerImpl: closeInMemoryFile -> map-output of size: 60002, inMemoryMapOutputs.size() -> 1, commitMemory -> 0, usedMemory ->60002

17/11/24 20:32:43 INFO reduce.EventFetcher: EventFetcher is interrupted.. Returning

17/11/24 20:32:43 INFO mapred.LocalJobRunner: 1 / 1 copied.

17/11/24 20:32:43 INFO reduce.MergeManagerImpl: finalMerge called with 1 in-memory map-outputs and 0 on-disk map-outputs

17/11/24 20:32:43 INFO mapred.Merger: Merging 1 sorted segments

17/11/24 20:32:43 INFO mapred.Merger: Down to the last merge-pass, with 1 segments left of total size: 59996 bytes

17/11/24 20:32:43 INFO reduce.MergeManagerImpl: Merged 1 segments, 60002 bytes to disk to satisfy reduce memory limit

17/11/24 20:32:43 INFO reduce.MergeManagerImpl: Merging 1 files, 60006 bytes from disk

17/11/24 20:32:43 INFO reduce.MergeManagerImpl: Merging 0 segments, 0 bytes from memory into reduce

17/11/24 20:32:43 INFO mapred.Merger: Merging 1 sorted segments

17/11/24 20:32:43 INFO mapred.Merger: Down to the last merge-pass, with 1 segments left of total size: 59996 bytes

17/11/24 20:32:43 INFO mapred.LocalJobRunner: 1 / 1 copied.

17/11/24 20:32:43 INFO Configuration.deprecation: mapred.skip.on is deprecated. Instead, use mapreduce.job.skiprecords

17/11/24 20:32:44 INFO mapred.Task: Task:attempt_local1430356259_0001_r_000000_0 is done. And is in the process of committing

17/11/24 20:32:44 INFO mapred.LocalJobRunner: 1 / 1 copied.

17/11/24 20:32:44 INFO mapred.Task: Task attempt_local1430356259_0001_r_000000_0 is allowed to commit now

17/11/24 20:32:44 INFO output.FileOutputCommitter: Saved output of task 'attempt_local1430356259_0001_r_000000_0' to hdfs://192.168.23.196:9000/output/v1/_temporary/0/task_local1430356259_0001_r_000000

17/11/24 20:32:44 INFO mapred.LocalJobRunner: reduce > reduce

17/11/24 20:32:44 INFO mapred.Task: Task 'attempt_local1430356259_0001_r_000000_0' done.

17/11/24 20:32:44 INFO mapred.LocalJobRunner: Finishing task: attempt_local1430356259_0001_r_000000_0

17/11/24 20:32:44 INFO mapred.LocalJobRunner: reduce task executor complete.

17/11/24 20:32:44 INFO mapreduce.Job: map 100% reduce 100%

17/11/24 20:32:44 INFO mapreduce.Job: Job job_local1430356259_0001 completed successfully

17/11/24 20:32:44 INFO mapreduce.Job: Counters: 35

File System Counters

FILE: Number of bytes read=1087044

FILE: Number of bytes written=2084932

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=80000004

HDFS: Number of bytes written=54000

HDFS: Number of read operations=13

HDFS: Number of large read operations=0

HDFS: Number of write operations=4

Map-Reduce Framework

Map input records=1

Map output records=10000000

Map output bytes=80000000

Map output materialized bytes=60006

Input split bytes=103

Combine input records=10018000

Combine output records=24000

Reduce input groups=6000

Reduce shuffle bytes=60006

Reduce input records=6000

Reduce output records=6000

Spilled Records=30000

Shuffled Maps =1

Failed Shuffles=0

Merged Map outputs=1

GC time elapsed (ms)=1770

Total committed heap usage (bytes)=1776287744

Shuffle Errors

BAD_ID=0

CONNECTION=0

IO_ERROR=0

WRONG_LENGTH=0

WRONG_MAP=0

WRONG_REDUCE=0

File Input Format Counters

Bytes Read=40000002

File Output Format Counters

Bytes Written=54000

4. Finally, look under /output/v1 at the generated result. There are too many characters to list, so only part of the output is shown here.

[root@master soft]# hadoop fs -ls /output/v1

Found 2 items

-rw-r--r-- 2 root supergroup 0 2017-11-24 20:32 /output/v1/_SUCCESS

-rw-r--r-- 2 root supergroup 54000 2017-11-24 20:32 /output/v1/part-r-00000

[root@master soft]# hadoop fs -ls /output/v1/part-r-00000

-rw-r--r-- 2 root supergroup 54000 2017-11-24 20:32 /output/v1/part-r-00000

[root@master soft]# hadoop fs -tail /output/v1/part-r-00000

1609

攟 1685

攠 1636

攡 1682

攢 1657

攣 1685

攤 1611

攥 1724

攦 1732

攧 1657

攨 1767

攩 1768

攪 1624
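For a small input, the same kind of count can be reproduced locally, which is handy for sanity-checking what the job emits. The sketch below is a plain shell pipeline, not the Hadoop example's code. It assumes words are separated by whitespace and prints `word<TAB>count` lines in byte order.

```shell
# wordcount   (hypothetical helper)
# Reads text on stdin and prints "word<TAB>count", one line per distinct word.
wordcount() {
  tr -s '[:space:]' '\n' |       # one token per line, runs of whitespace squeezed
    awk 'NF' |                   # drop empty lines
    LC_ALL=C sort |              # byte-order sort so equal words are adjacent
    uniq -c |                    # count each run of identical words
    awk '{ print $2 "\t" $1 }'   # reorder to word, tab, count
}

printf 'b a\na  c a\n' | wordcount
```

Against the real file, `wordcount < /usr/soft/2.txt | tail` gives output to compare with the `hadoop fs -tail` listing above.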

That's it. The setup really is tedious. Setting up hive will be covered in a later post. I hope this one helps.